🚀 InstructCLIP: Improving Instruction-Guided Image Editing with Automated Data Refinement Using Contrastive Learning (CVPR 2025)

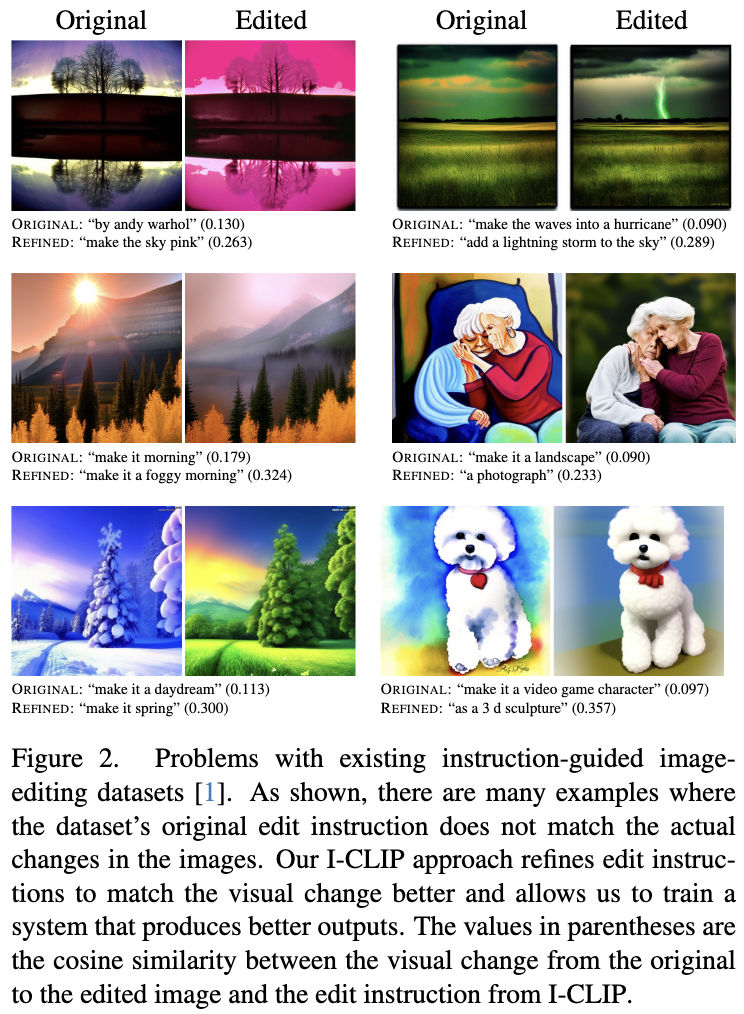

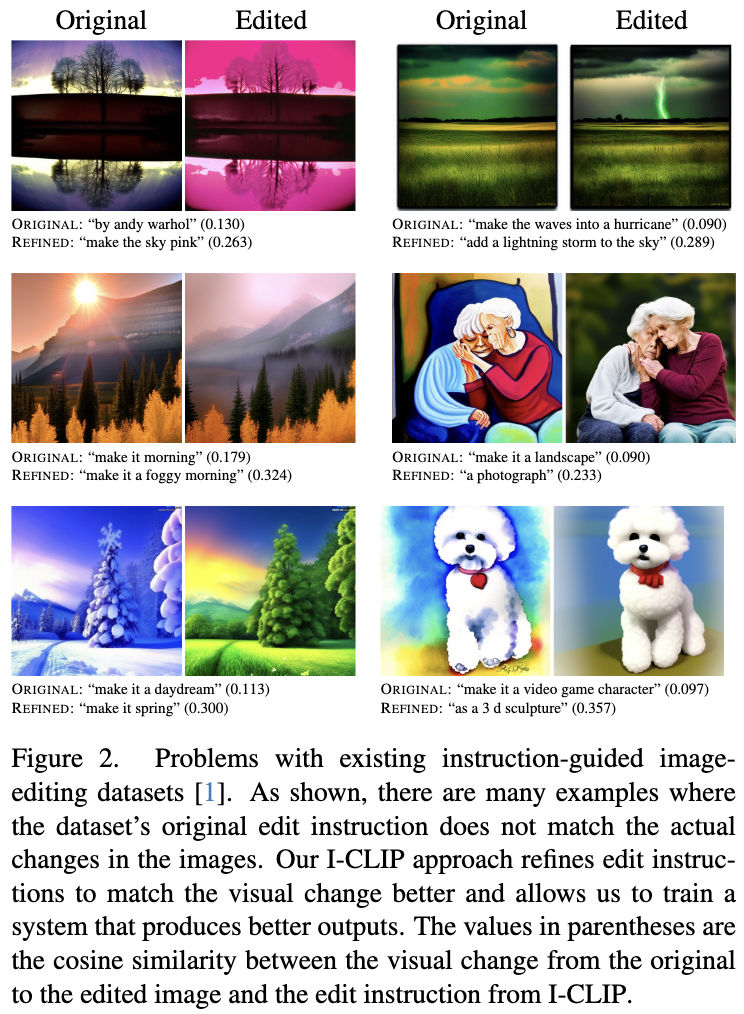

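This project uses contrastive learning to refine training data automatically, which improves instruction-guided image editing and makes the resulting edits more accurate and efficient.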
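As a rough illustration of the contrastive idea, the sketch below computes a CLIP-style InfoNCE loss between "edit" embeddings (what changed between two images) and instruction embeddings. It is a generic, one-directional textbook formulation in plain Python, not the paper's actual loss or architecture; the toy vectors, the temperature of 0.07, and all function names are assumptions made for this example.

```python
import math


def l2_normalize(vec):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def cosine_similarity_matrix(rows, cols):
    """Return sim[i][j] = cosine similarity between rows[i] and cols[j]."""
    rows = [l2_normalize(v) for v in rows]
    cols = [l2_normalize(v) for v in cols]
    return [[sum(x * y for x, y in zip(r, c)) for c in cols] for r in rows]


def info_nce(sim, temperature=0.07):
    """Mean cross-entropy where the correct match for row i is column i."""
    total = 0.0
    for i, row in enumerate(sim):
        logits = [s / temperature for s in row]
        peak = max(logits)  # subtract the max for numerical stability
        log_denominator = peak + math.log(sum(math.exp(l - peak) for l in logits))
        total += log_denominator - logits[i]
    return total / len(sim)


# Hypothetical 2-D embeddings: two image edits and their two instructions.
edit_feats = [[1.0, 0.0], [0.0, 1.0]]
text_feats_matched = [[0.9, 0.1], [0.2, 0.8]]
# Swapping the instructions simulates a dataset whose instructions are misaligned.
text_feats_swapped = [text_feats_matched[1], text_feats_matched[0]]

loss_matched = info_nce(cosine_similarity_matrix(edit_feats, text_feats_matched))
loss_swapped = info_nce(cosine_similarity_matrix(edit_feats, text_feats_swapped))
print(loss_matched < loss_swapped)
```

The loss is low when each edit embedding is closest to its own instruction and high when the pairs are misaligned, which is the signal a refinement step could use to detect instructions that do not describe the actual change.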

Model Information

| Property | Details |
| --- | --- |
| Base model | SherryXTChen/LatentDiffusionDINOv2 |
| Training datasets | timbrooks/instructpix2pix-clip-filtered, SherryXTChen/InstructCLIP-InstructPix2Pix-Data |
| Model type | image-to-image |
| Library | diffusers |
| Tags | model_hub_mixin, pytorch_model_hub_mixin |
| License | apache-2.0 |

Related Links

arXiv | Image editing model | Data refinement model | Data

🚀 Quick Start

This model has been pushed to the Hub using the PyTorchModelHubMixin integration. It is based on the paper Instruct-CLIP: Improving Instruction-Guided Image Editing with Automated Data Refinement Using Contrastive Learning.

✨ Key Features

📦 Installation

pip install -r requirements.txt

💻 Usage Example

Basic Usage

import argparse

from PIL import Image
import torch
from torchvision import transforms

# `model` and `utils` are local modules from the project repository.
from model import InstructCLIP
from utils import get_sd_components, normalize

parser = argparse.ArgumentParser(description="Simple example of estimating edit instruction from image pair")
parser.add_argument(
    "--pretrained_instructclip_name_or_path",
    type=str,
    default="SherryXTChen/Instruct-CLIP",
    help="instructclip pretrained checkpoints",
)
parser.add_argument(
    "--pretrained_model_name_or_path",
    type=str,
    default="runwayml/stable-diffusion-v1-5",
    help="sd pretrained checkpoints",
)
parser.add_argument(
    "--input_path",
    type=str,
    default="assets/1_input.jpg",
    help="Input image path",
)
parser.add_argument(
    "--output_path",
    type=str,
    default="assets/1_output.jpg",
    help="Output image path",
)
args = parser.parse_args()

device = "cuda"

# Load the InstructCLIP checkpoint (defaults to SherryXTChen/Instruct-CLIP).
model = InstructCLIP.from_pretrained(args.pretrained_instructclip_name_or_path)
model = model.to(device).eval()

# Only the tokenizer and the VAE from Stable Diffusion are needed here.
tokenizer, _, vae, _, _ = get_sd_components(args, device, torch.float32)

# Convert images to tensors and rescale pixel values from [0, 1] to [-1, 1].
transform = transforms.Compose([
    transforms.ToTensor(),
    transforms.Normalize(mean=[0.5], std=[0.5]),
])

# Load the input (before edit) and output (after edit) images as 1x3x512x512 tensors.
image_list = [args.input_path, args.output_path]
image_list = [
    transform(Image.open(f).convert("RGB").resize((512, 512))).unsqueeze(0).to(device)
    for f in image_list
]

with torch.no_grad():
    # Encode both images into the VAE latent space.
    image_list = [vae.encode(x).latent_dist.sample() * vae.config.scaling_factor for x in image_list]

    # Timestep 0 for both images, i.e. no added noise.
    zero_timesteps = torch.zeros(1, dtype=torch.long, device=device)

    # Embed the visual change between the input and output images.
    img_feat = model.get_image_features(
        inp=image_list[0], out=image_list[1], inp_t=zero_timesteps, out_t=zero_timesteps)
    img_feat = normalize(img_feat)

    # Decode the embedding into token IDs, then into the estimated edit instruction.
    pred_instruct_input_ids = model.text_decoder.infer(img_feat[:1])[0]
    pred_instruct = tokenizer.decode(pred_instruct_input_ids, skip_special_tokens=True)
    print(pred_instruct)
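The preprocessing in the example relies on two standard torchvision steps: `ToTensor` scales 8-bit pixel values from [0, 255] to [0, 1], and `Normalize(mean=[0.5], std=[0.5])` then maps them to [-1, 1], the range Stable Diffusion VAEs conventionally expect. The sketch below reproduces that arithmetic for a single pixel value in plain Python so the mapping is easy to check; the helper names `to_model_range` and `to_pixel_value` are hypothetical and are not part of the repository.

```python
def to_model_range(pixel_value, mean=0.5, std=0.5):
    """Map an 8-bit pixel value (0..255) to the normalized range used above."""
    scaled = pixel_value / 255.0      # same scaling ToTensor applies to uint8 images
    return (scaled - mean) / std      # same arithmetic as Normalize(mean, std)


def to_pixel_value(model_value, mean=0.5, std=0.5):
    """Invert the normalization, clamping to the valid 8-bit range."""
    scaled = model_value * std + mean
    scaled = min(max(scaled, 0.0), 1.0)
    return round(scaled * 255)


print(to_model_range(0))                     # darkest pixel
print(to_model_range(255))                   # brightest pixel
print(to_pixel_value(to_model_range(128)))   # round trip back to 8-bit
```

The inverse mapping is useful when decoded VAE outputs need to be converted back into a displayable image.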

📄 License

This project is released under the Apache-2.0 license.

📚 Citation

@misc{chen2025instructclipimprovinginstructionguidedimage,
  title={Instruct-CLIP: Improving Instruction-Guided Image Editing with Automated Data Refinement Using Contrastive Learning},
  author={Sherry X. Chen and Misha Sra and Pradeep Sen},
  year={2025},
  eprint={2503.18406},
  archivePrefix={arXiv},
  primaryClass={cs.CV},
  url={https://arxiv.org/abs/2503.18406},
}
