模型概述

模型特點

模型能力

使用案例

🚀 鯨豚獸模型OpenOrca - Platypus2 - 13B

OpenOrca - Platypus2 - 13B是一款強大的語言模型,它融合了不同數據集的優勢,在多個評估指標上表現出色。該模型結合了garage - bAInd/Platypus2 - 13B和Open - Orca/OpenOrcaxOpenChat - Preview2 - 13B的特性,為自然語言處理任務提供了更高效、準確的解決方案。

🚀 快速開始

我們正在訓練更多的模型,敬請關注我們組織即將與令人興奮的合作伙伴共同發佈的新模型。我們也會在Discord上提前發佈預告,你可以在這裡找到我們的Discord鏈接: https://AlignmentLab.ai

如果你想可視化我們完整的(預過濾)數據集,可以查看我們的 Nomic Atlas Map。

✨ 主要特性

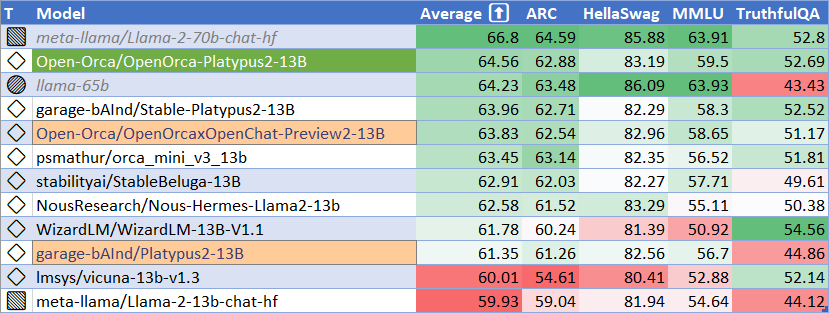

評估表現

HuggingFace排行榜表現

| 指標 | 值 |

|---|---|

| MMLU (5 - shot) | 59.5 |

| ARC (25 - shot) | 62.88 |

| HellaSwag (10 - shot) | 83.19 |

| TruthfulQA (0 - shot) | 52.69 |

| 平均值 | 64.56 |

我們使用 [Language Model Evaluation Harness](https://github.com/EleutherAI/lm - evaluation - harness) 來運行上述基準測試,使用的版本與HuggingFace大語言模型排行榜相同。

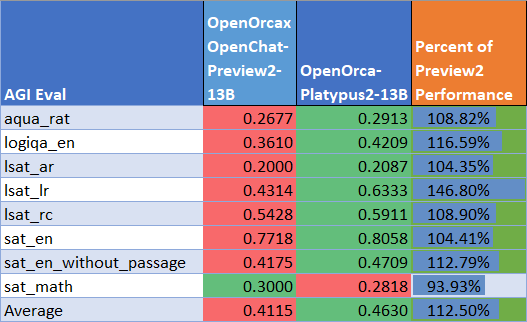

AGIEval表現

我們將結果與基礎Preview2模型進行了比較(使用LM Evaluation Harness)。我們發現該模型在AGI Eval上的性能達到了基礎模型的 112%,平均得分為 0.463。這種提升很大程度上得益於LSAT邏輯推理性能的顯著提高。

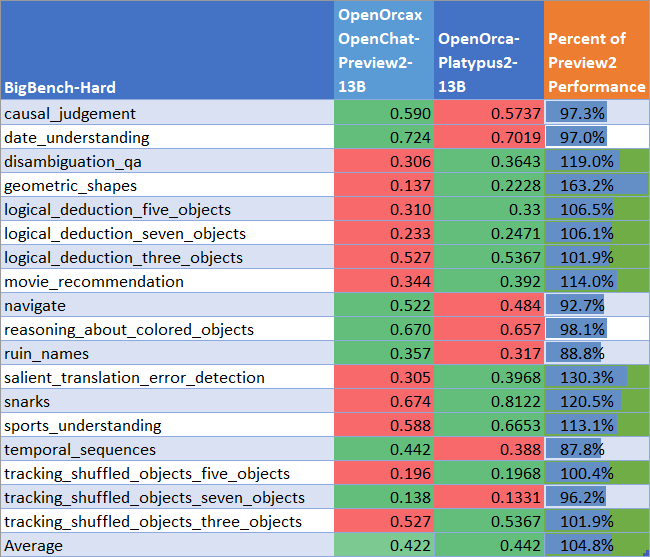

BigBench - Hard表現

我們將結果與基礎Preview2模型進行了比較(使用LM Evaluation Harness)。我們發現該模型在BigBench - Hard上的性能達到了基礎模型的 105%,平均得分為 0.442。

📦 安裝指南

復現評估結果(用於HuggingFace排行榜評估)

安裝LM Evaluation Harness:

# 克隆倉庫

git clone https://github.com/EleutherAI/lm-evaluation-harness.git

# 進入倉庫目錄

cd lm-evaluation-harness

# 檢出正確的提交

git checkout b281b0921b636bc36ad05c0b0b0763bd6dd43463

# 安裝

pip install -e .

每個任務都在單個A100 - 80GB GPU上進行評估。

ARC:

python main.py --model hf-causal-experimental --model_args pretrained=Open-Orca/OpenOrca-Platypus2-13B --tasks arc_challenge --batch_size 1 --no_cache --write_out --output_path results/OpenOrca-Platypus2-13B/arc_challenge_25shot.json --device cuda --num_fewshot 25

HellaSwag:

python main.py --model hf-causal-experimental --model_args pretrained=Open-Orca/OpenOrca-Platypus2-13B --tasks hellaswag --batch_size 1 --no_cache --write_out --output_path results/OpenOrca-Platypus2-13B/hellaswag_10shot.json --device cuda --num_fewshot 10

MMLU:

python main.py --model hf-causal-experimental --model_args pretrained=Open-Orca/OpenOrca-Platypus2-13B --tasks hendrycksTest-* --batch_size 1 --no_cache --write_out --output_path results/OpenOrca-Platypus2-13B/mmlu_5shot.json --device cuda --num_fewshot 5

TruthfulQA:

python main.py --model hf-causal-experimental --model_args pretrained=Open-Orca/OpenOrca-Platypus2-13B --tasks truthfulqa_mc --batch_size 1 --no_cache --write_out --output_path results/OpenOrca-Platypus2-13B/truthfulqa_0shot.json --device cuda

📚 詳細文檔

模型詳情

| 屬性 | 詳情 |

|---|---|

| 訓練者 | Platypus2 - 13B 由Cole Hunter和Ariel Lee訓練;OpenOrcaxOpenChat - Preview2 - 13B 由Open - Orca訓練 |

| 模型類型 | OpenOrca - Platypus2 - 13B 是基於Llama 2 Transformer架構的自迴歸語言模型 |

| 語言 | 英語 |

| Platypus2 - 13B基礎權重許可證 | 非商業知識共享許可證 ([CC BY - NC - 4.0](https://creativecommons.org/licenses/by - nc/4.0/)) |

| OpenOrcaxOpenChat - Preview2 - 13B基礎權重許可證 | Llama 2商業許可證 |

提示模板

Platypus2 - 13B基礎提示模板

### 指令:

<prompt> (不包含<>)

### 回覆:

OpenOrcaxOpenChat - Preview2 - 13B基礎提示模板

OpenChat Llama2 V1:有關更多信息,請參閱 OpenOrcaxOpenChat - Preview2 - 13B。

訓練詳情

訓練數據集

garage - bAInd/Platypus2 - 13B 使用基於STEM和邏輯的數據集 [garage - bAInd/Open - Platypus](https://huggingface.co/datasets/garage - bAInd/Open - Platypus) 進行訓練。

有關更多信息,請參閱我們的 論文 和 [項目網頁](https://platypus - llm.github.io)。

Open - Orca/OpenOrcaxOpenChat - Preview2 - 13B 使用 [OpenOrca數據集](https://huggingface.co/datasets/Open - Orca/OpenOrca) 中大部分GPT - 4數據的精煉子集進行訓練。

訓練過程

Open - Orca/Platypus2 - 13B 使用LoRA在1x A100 - 80GB上進行指令微調。有關訓練細節和推理說明,請參閱 Platypus GitHub倉庫。

侷限性和偏差

Llama 2及其微調變體是一項新技術,使用時存在風險。到目前為止進行的測試均使用英語,且無法涵蓋所有場景。因此,與所有大語言模型一樣,Llama 2及其任何微調變體的潛在輸出無法提前預測,在某些情況下,模型可能會對用戶提示產生不準確、有偏差或其他令人反感的響應。因此,在部署Llama 2變體的任何應用程序之前,開發人員應針對其特定應用對模型進行安全測試和調整。

請參閱 [負責任使用指南](https://ai.meta.com/llama/responsible - use - guide/)。

🔧 技術細節

引用

@software{hunterlee2023orcaplaty1

title = {OpenOrcaPlatypus: Llama2-13B Model Instruct-tuned on Filtered OpenOrcaV1 GPT-4 Dataset and Merged with divergent STEM and Logic Dataset Model},

author = {Ariel N. Lee and Cole J. Hunter and Nataniel Ruiz and Bleys Goodson and Wing Lian and Guan Wang and Eugene Pentland and Austin Cook and Chanvichet Vong and "Teknium"},

year = {2023},

publisher = {HuggingFace},

journal = {HuggingFace repository},

howpublished = {\url{https://huggingface.co/Open-Orca/OpenOrca-Platypus2-13B},

}

@article{platypus2023,

title={Platypus: Quick, Cheap, and Powerful Refinement of LLMs},

author={Ariel N. Lee and Cole J. Hunter and Nataniel Ruiz},

booktitle={arXiv preprint arxiv:2308.07317},

year={2023}

}

@software{OpenOrcaxOpenChatPreview2,

title = {OpenOrcaxOpenChatPreview2: Llama2-13B Model Instruct-tuned on Filtered OpenOrcaV1 GPT-4 Dataset},

author = {Guan Wang and Bleys Goodson and Wing Lian and Eugene Pentland and Austin Cook and Chanvichet Vong and "Teknium"},

year = {2023},

publisher = {HuggingFace},

journal = {HuggingFace repository},

howpublished = {\url{https://https://huggingface.co/Open-Orca/OpenOrcaxOpenChat-Preview2-13B},

}

@software{openchat,

title = {{OpenChat: Advancing Open-source Language Models with Imperfect Data}},

author = {Wang, Guan and Cheng, Sijie and Yu, Qiying and Liu, Changling},

doi = {10.5281/zenodo.8105775},

url = {https://github.com/imoneoi/openchat},

version = {pre-release},

year = {2023},

month = {7},

}

@misc{mukherjee2023orca,

title={Orca: Progressive Learning from Complex Explanation Traces of GPT-4},

author={Subhabrata Mukherjee and Arindam Mitra and Ganesh Jawahar and Sahaj Agarwal and Hamid Palangi and Ahmed Awadallah},

year={2023},

eprint={2306.02707},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

@misc{touvron2023llama,

title={Llama 2: Open Foundation and Fine-Tuned Chat Models},

author={Hugo Touvron and Louis Martin and Kevin Stone and Peter Albert and Amjad Almahairi and Yasmine Babaei and Nikolay Bashlykov and Soumya Batra and Prajjwal Bhargava and Shruti Bhosale and Dan Bikel and Lukas Blecher and Cristian Canton Ferrer and Moya Chen and Guillem Cucurull and David Esiobu and Jude Fernandes and Jeremy Fu and Wenyin Fu and Brian Fuller and Cynthia Gao and Vedanuj Goswami and Naman Goyal and Anthony Hartshorn and Saghar Hosseini and Rui Hou and Hakan Inan and Marcin Kardas and Viktor Kerkez and Madian Khabsa and Isabel Kloumann and Artem Korenev and Punit Singh Koura and Marie-Anne Lachaux and Thibaut Lavril and Jenya Lee and Diana Liskovich and Yinghai Lu and Yuning Mao and Xavier Martinet and Todor Mihaylov and Pushkar Mishra and Igor Molybog and Yixin Nie and Andrew Poulton and Jeremy Reizenstein and Rashi Rungta and Kalyan Saladi and Alan Schelten and Ruan Silva and Eric Michael Smith and Ranjan Subramanian and Xiaoqing Ellen Tan and Binh Tang and Ross Taylor and Adina Williams and Jian Xiang Kuan and Puxin Xu and Zheng Yan and Iliyan Zarov and Yuchen Zhang and Angela Fan and Melanie Kambadur and Sharan Narang and Aurelien Rodriguez and Robert Stojnic and Sergey Edunov and Thomas Scialom},

year={2023},

eprint= arXiv 2307.09288

}

@misc{longpre2023flan,

title={The Flan Collection: Designing Data and Methods for Effective Instruction Tuning},

author={Shayne Longpre and Le Hou and Tu Vu and Albert Webson and Hyung Won Chung and Yi Tay and Denny Zhou and Quoc V. Le and Barret Zoph and Jason Wei and Adam Roberts},

year={2023},

eprint={2301.13688},

archivePrefix={arXiv},

primaryClass={cs.AI}

}

@article{hu2021lora,

title={LoRA: Low-Rank Adaptation of Large Language Models},

author={Hu, Edward J. and Shen, Yelong and Wallis, Phillip and Allen-Zhu, Zeyuan and Li, Yuanzhi and Wang, Shean and Chen, Weizhu},

journal={CoRR},

year={2021}

}

📄 許可證

本項目採用CC BY - NC - 4.0許可證。

Transformers

Transformers Transformers 支持多種語言

Transformers 支持多種語言 Transformers 支持多種語言

Transformers 支持多種語言 Transformers 英語

Transformers 英語