🚀 Depth Anything V2 (Fine-tuned for Metric Depth Estimation) - Transformers Version

This model is a fine-tuned variant of Depth Anything V2, specifically designed for indoor metric depth estimation using the synthetic Hypersim dataset. It is compatible with the transformers library.

Depth Anything V2, introduced in the paper of the same name by Lihe Yang et al., shares the architecture of the original Depth Anything. However, it uses synthetic data and a larger-capacity teacher model to achieve more precise and robust depth predictions. This fine-tuned version for metric depth estimation was first released in this repository.

✨ Features

Model Variants

Six metric depth models are available: three scales each for indoor and outdoor scenes.
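The six checkpoints can also be addressed programmatically. The sketch below assumes that every variant follows the naming pattern of the one identifier used later in this card (`depth-anything/Depth-Anything-V2-Metric-Indoor-Large-hf`) and that the three scales are called `Small`, `Base`, and `Large`. Both the pattern and the helper `metric_model_id` are illustrative assumptions, so confirm a generated name on the model hub before relying on it.

```python
# Hypothetical helper: builds a Hub model ID from a scene type and a scale.
# The naming pattern is inferred from the single ID shown in this card.
SCENES = ("Indoor", "Outdoor")
SCALES = ("Small", "Base", "Large")  # assumed scale names


def metric_model_id(scene: str, scale: str) -> str:
    """Return the assumed Hub ID for a metric depth checkpoint."""
    scene, scale = scene.capitalize(), scale.capitalize()
    if scene not in SCENES:
        raise ValueError(f"scene must be one of {SCENES}, got {scene!r}")
    if scale not in SCALES:
        raise ValueError(f"scale must be one of {SCALES}, got {scale!r}")
    return f"depth-anything/Depth-Anything-V2-Metric-{scene}-{scale}-hf"


# Enumerate all six scene/scale combinations.
ALL_MODEL_IDS = [metric_model_id(s, k) for s in SCENES for k in SCALES]
```

Calling `metric_model_id("indoor", "large")` reproduces the identifier used in the usage examples of this card.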

📚 Documentation

Model description

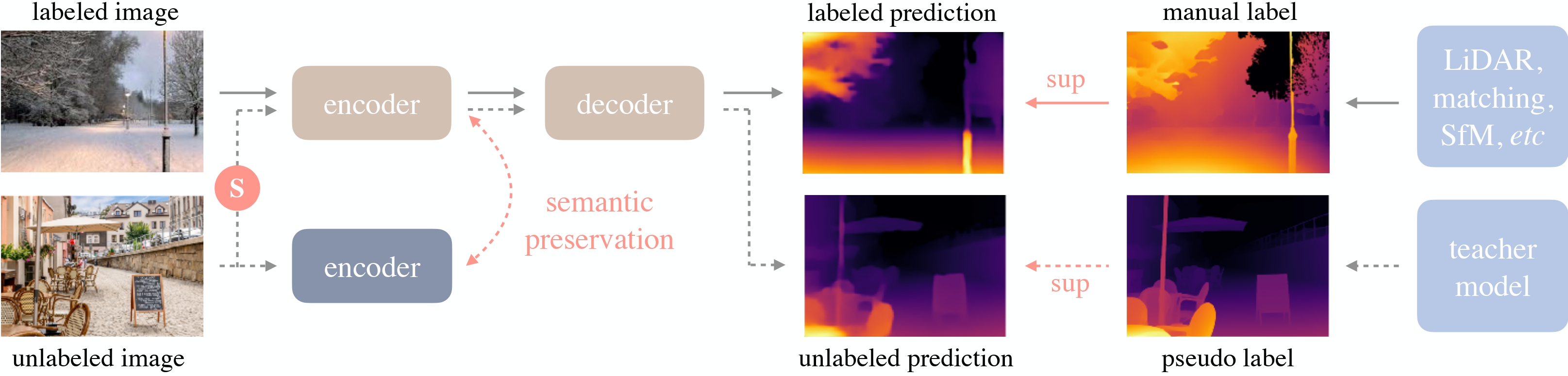

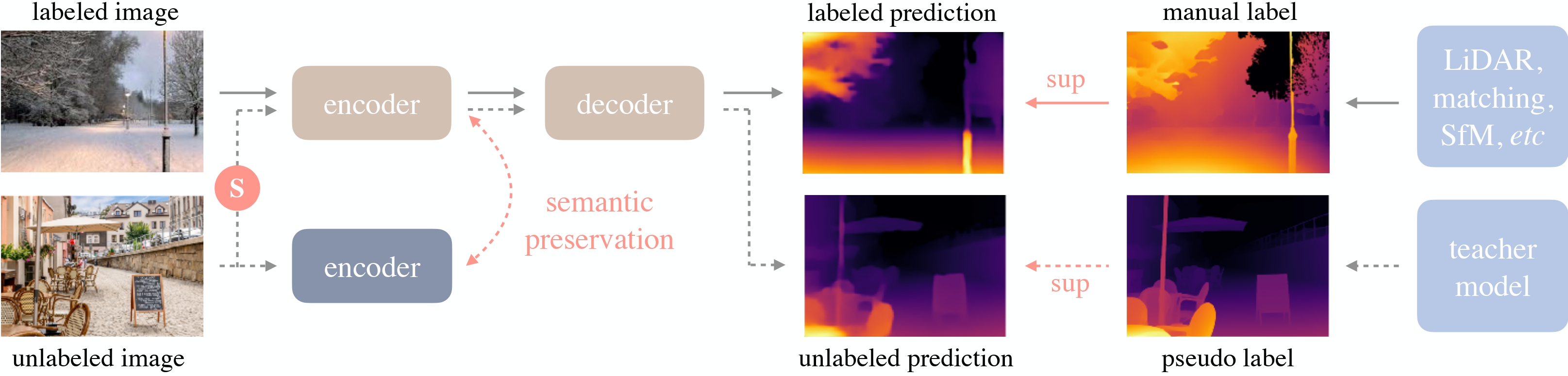

Depth Anything V2 utilizes the DPT architecture with a DINOv2 backbone. Trained on ~600K synthetic labeled images and ~62 million real unlabeled images, it achieves state-of-the-art results in both relative and absolute depth estimation.

Depth Anything overview. Taken from the original paper.

Intended uses & limitations

You can use the raw model for zero-shot depth estimation tasks. Check the model hub for other versions relevant to your task.

Requirements

transformers>=4.45.0

Alternatively, install the latest transformers version from source:

pip install git+https://github.com/huggingface/transformers
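To confirm that an environment meets the requirement before loading the model, a small check along these lines may help. It is a minimal sketch using only the standard library; the helper names `parse_release` and `transformers_is_recent_enough` are made up for illustration, and the comparison deliberately ignores pre-release ordering (a `4.45.0rc1` build is treated like `4.45.0`).

```python
from importlib.metadata import PackageNotFoundError, version

MIN_TRANSFORMERS = (4, 45, 0)  # minimum version stated in the requirements


def parse_release(version_string: str) -> tuple:
    """Parse the leading numeric components, e.g. '4.46.0.dev0' -> (4, 46, 0)."""
    parts = []
    for piece in version_string.split("."):
        if not piece.isdigit():
            break  # stop at suffixes such as 'dev0' or '0rc1'
        parts.append(int(piece))
    while len(parts) < 3:
        parts.append(0)  # pad short versions such as '4.45'
    return tuple(parts)


def transformers_is_recent_enough(installed: str) -> bool:
    """Return True if the version string satisfies the stated minimum."""
    return parse_release(installed) >= MIN_TRANSFORMERS


if __name__ == "__main__":
    try:
        installed = version("transformers")
    except PackageNotFoundError:
        print("transformers is not installed")
    else:
        status = "OK" if transformers_is_recent_enough(installed) else "too old"
        print(f"transformers {installed}: {status}")
```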

💻 Usage Examples

Basic Usage

Here's how to perform zero-shot depth estimation with this model:

from transformers import pipeline
from PIL import Image
import requests

# Load the depth-estimation pipeline with the indoor metric checkpoint
pipe = pipeline(task="depth-estimation", model="depth-anything/Depth-Anything-V2-Metric-Indoor-Large-hf")

# Download a sample image
url = 'http://images.cocodataset.org/val2017/000000039769.jpg'
image = Image.open(requests.get(url, stream=True).raw)

# Run inference and take the depth map from the result
depth = pipe(image)["depth"]

Advanced Usage

You can also use the model and processor classes:

from transformers import AutoImageProcessor, AutoModelForDepthEstimation
import torch
import numpy as np
from PIL import Image
import requests

# Download a sample image
url = "http://images.cocodataset.org/val2017/000000039769.jpg"
image = Image.open(requests.get(url, stream=True).raw)

# Load the image processor and the model
image_processor = AutoImageProcessor.from_pretrained("depth-anything/Depth-Anything-V2-Metric-Indoor-Large-hf")
model = AutoModelForDepthEstimation.from_pretrained("depth-anything/Depth-Anything-V2-Metric-Indoor-Large-hf")

# Prepare the image for the model
inputs = image_processor(images=image, return_tensors="pt")

# Run inference without tracking gradients
with torch.no_grad():
    outputs = model(**inputs)
    predicted_depth = outputs.predicted_depth

# Interpolate to the original image size (PIL reports width x height, so reverse it)
prediction = torch.nn.functional.interpolate(
    predicted_depth.unsqueeze(1),
    size=image.size[::-1],
    mode="bicubic",
    align_corners=False,
)
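The interpolated `prediction` tensor is still a raw tensor of shape 1 x 1 x H x W. One possible way to turn it into something viewable is sketched below: the raw values are kept as a float array, and a separate 8-bit copy is produced for display. The helper `depth_to_uint8` is a hypothetical name, and the min-max scaling is only one reasonable choice for visualization, not something this card prescribes. Note that the 8-bit image no longer carries metric units.

```python
import numpy as np


def depth_to_uint8(depth: np.ndarray) -> np.ndarray:
    """Scale a 2-D depth map linearly to 0..255 for display (units are lost)."""
    depth = np.asarray(depth, dtype=np.float32)
    lo, hi = float(depth.min()), float(depth.max())
    if hi == lo:
        # Constant map: avoid dividing by zero.
        return np.zeros(depth.shape, dtype=np.uint8)
    return ((depth - lo) / (hi - lo) * 255.0).round().astype(np.uint8)


# With the `prediction` tensor from the snippet above:
#   depth_map = prediction.squeeze().cpu().numpy()   # H x W float array, raw model values
#   Image.fromarray(depth_to_uint8(depth_map)).save("depth.png")
```

Keep `depth_map` itself for any measurement; use the 8-bit version only for inspection.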

For more code examples, refer to the documentation.

📄 License

Citation

@article{depth_anything_v2,
  title={Depth Anything V2},
  author={Yang, Lihe and Kang, Bingyi and Huang, Zilong and Zhao, Zhen and Xu, Xiaogang and Feng, Jiashi and Zhao, Hengshuang},
  journal={arXiv:2406.09414},
  year={2024}
}

@inproceedings{depth_anything_v1,
  title={Depth Anything: Unleashing the Power of Large-Scale Unlabeled Data},
  author={Yang, Lihe and Kang, Bingyi and Huang, Zilong and Xu, Xiaogang and Feng, Jiashi and Zhao, Hengshuang},
  booktitle={CVPR},
  year={2024}
}