Vit Gpt2 Image Captioning

An image captioning model built on the ViT and GPT2 architectures that generates natural-language descriptions of input images.

Downloads: 939.88k

Released: 3/2/2022

Model Overview

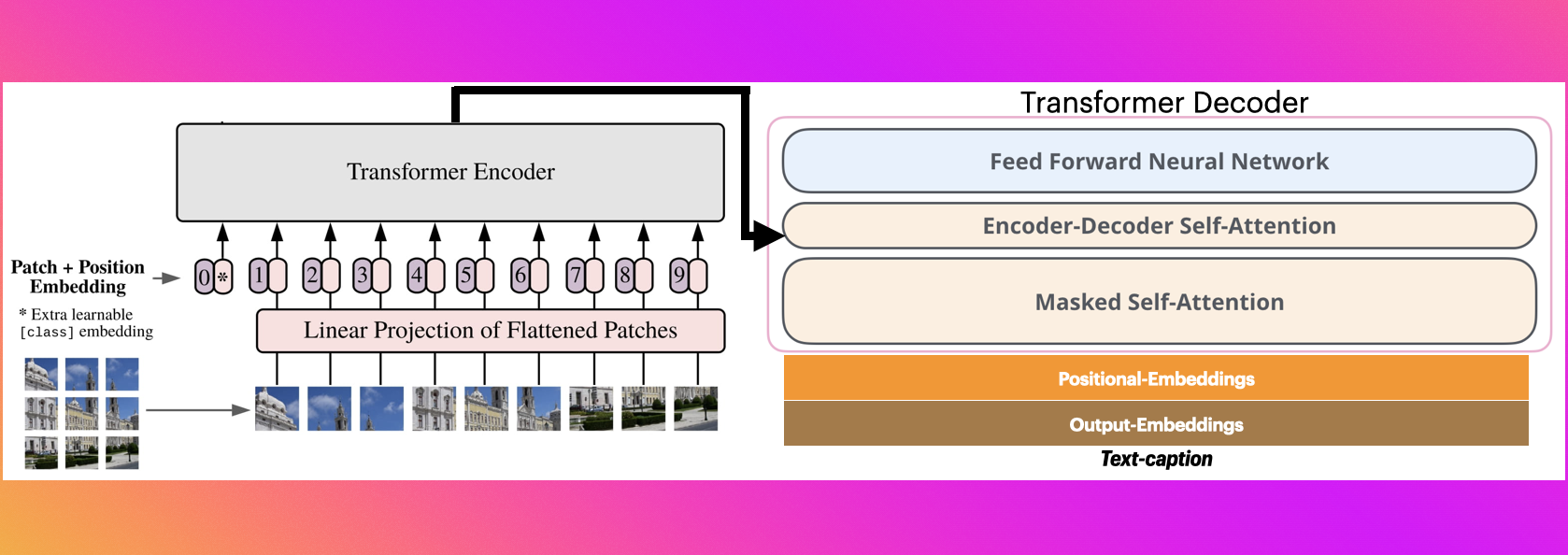

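The model pairs a vision encoder (ViT) with a text decoder (GPT2) to turn image content into natural-language descriptions. It suits automatic image annotation, assistive tools for people with visual impairments, and similar uses.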

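Model Features

Joint vision-language model

Combines a Vision Transformer encoder with a GPT2 text decoder to convert images into text

Broad scene coverage

Handles caption generation for a wide range of common scenes

Pretrained model

Pretrained on large-scale datasets and usable for inference out of the box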
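
As a minimal sketch of what out-of-the-box inference might look like, the snippet below loads the encoder-decoder pair through the Hugging Face `transformers` library and captions a batch of image files. The Hub id `nlpconnect/vit-gpt2-image-captioning` is an assumption (this page gives only the display name), and `tidy_caption`, `caption_images`, and the generation settings `max_length=16` and `num_beams=4` are illustrative choices, not values documented here.

```python
from typing import List

# Assumed Hugging Face Hub id; this page only gives the display name.
MODEL_ID = "nlpconnect/vit-gpt2-image-captioning"


def tidy_caption(text: str) -> str:
    """Collapse stray whitespace that decoding can leave around a caption."""
    return " ".join(text.split())


def caption_images(image_paths: List[str], max_length: int = 16, num_beams: int = 4) -> List[str]:
    """Return one caption per image path (hypothetical helper)."""
    # Heavy dependencies are imported lazily so the module loads without them.
    import torch
    from PIL import Image
    from transformers import AutoTokenizer, ViTImageProcessor, VisionEncoderDecoderModel

    # ViT encoder + GPT2 decoder are stored together as one encoder-decoder model.
    model = VisionEncoderDecoderModel.from_pretrained(MODEL_ID)
    processor = ViTImageProcessor.from_pretrained(MODEL_ID)
    tokenizer = AutoTokenizer.from_pretrained(MODEL_ID)
    model.eval()

    # The image processor resizes and normalizes images into pixel tensors.
    images = [Image.open(path).convert("RGB") for path in image_paths]
    pixel_values = processor(images=images, return_tensors="pt").pixel_values

    # The decoder generates token ids conditioned on the encoded image.
    with torch.no_grad():
        output_ids = model.generate(pixel_values, max_length=max_length, num_beams=num_beams)

    captions = tokenizer.batch_decode(output_ids, skip_special_tokens=True)
    return [tidy_caption(caption) for caption in captions]
```

Calling `caption_images(["photo.jpg"])` (with a hypothetical local file) would download the weights on first use and return a list containing one caption string.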

Model Capabilities

Image content understanding

Natural language generation

Automatic image annotation

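Use Cases

Assistive technology

Visual impairment assistance

Describes image content for people with visual impairments

Generates accurate descriptions that help users understand images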
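
One way a generated caption could reach a screen-reader user is as the `alt` text of an image on a web page. The sketch below assumes the caption has already been produced by the model; `img_tag_with_alt` is a hypothetical helper, and capitalizing the first letter is only a stylistic choice.

```python
from html import escape


def img_tag_with_alt(src: str, caption: str) -> str:
    """Build an <img> tag whose alt text is the (already generated) caption."""
    # Normalize whitespace and capitalize the first character for readability.
    alt = " ".join(caption.split())
    if alt:
        alt = alt[0].upper() + alt[1:]
    # Escape both attributes so quotes in a caption cannot break the markup.
    return f'<img src="{escape(src, quote=True)}" alt="{escape(alt, quote=True)}">'


print(img_tag_with_alt("cat.jpg", "a cat sitting on a couch"))
```

Generated captions can be wrong, so a human review step before publishing alt text is a reasonable precaution.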

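Content management

Automatic image annotation

Automatically generates descriptive tags for large image collections

Improves the efficiency of image retrieval and management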
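
To illustrate how captions could support retrieval, the sketch below turns captions into keyword tags and builds an inverted index from tag to image name. The captions are made-up sample strings rather than real model output, and the stop-word list and the helper names `caption_to_tags`, `build_tag_index`, and `search` are assumptions for this example.

```python
from collections import defaultdict
from typing import Dict, List, Set

# Small illustrative stop-word list; a real system would use a fuller one.
STOP_WORDS = {"a", "an", "the", "of", "on", "in", "at", "with", "and", "is", "are", "to"}


def caption_to_tags(caption: str) -> List[str]:
    """Lower-case a caption, strip punctuation, drop stop words, keep first-seen order."""
    tags: List[str] = []
    for raw in caption.lower().split():
        word = raw.strip(".,!?;:\"'")
        if word and word not in STOP_WORDS and word not in tags:
            tags.append(word)
    return tags


def build_tag_index(captions: Dict[str, str]) -> Dict[str, Set[str]]:
    """Map each tag to the set of image names whose caption contains it."""
    index: Dict[str, Set[str]] = defaultdict(set)
    for image_name, caption in captions.items():
        for tag in caption_to_tags(caption):
            index[tag].add(image_name)
    return dict(index)


def search(index: Dict[str, Set[str]], query: str) -> Set[str]:
    """Return images whose tags include every non-stop word in the query."""
    tags = caption_to_tags(query)
    if not tags:
        return set()
    return set.intersection(*(index.get(tag, set()) for tag in tags))


# Made-up captions standing in for model output.
sample_captions = {
    "beach.jpg": "a dog running on the beach",
    "park.jpg": "a dog playing with a ball in a park",
    "street.jpg": "a man riding a bike on a street",
}
tag_index = build_tag_index(sample_captions)
print(sorted(search(tag_index, "dog")))
```

An inverted index keeps lookups proportional to the number of query words rather than the number of images, which is the usual reason to prefer it for large collections.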
