🚀 Yi: Next Generation of Open-Source LLMs

The Yi series models are the next generation of open-source large language models, offering powerful language understanding and reasoning capabilities. Trained on a vast multilingual corpus, they stand out among global LLMs and are suitable for a variety of personal, academic, and commercial use cases.

🚀 Quick Start

💡 Tip: If you want to get started with the Yi model and explore different methods for inference, check out the Yi Cookbook.

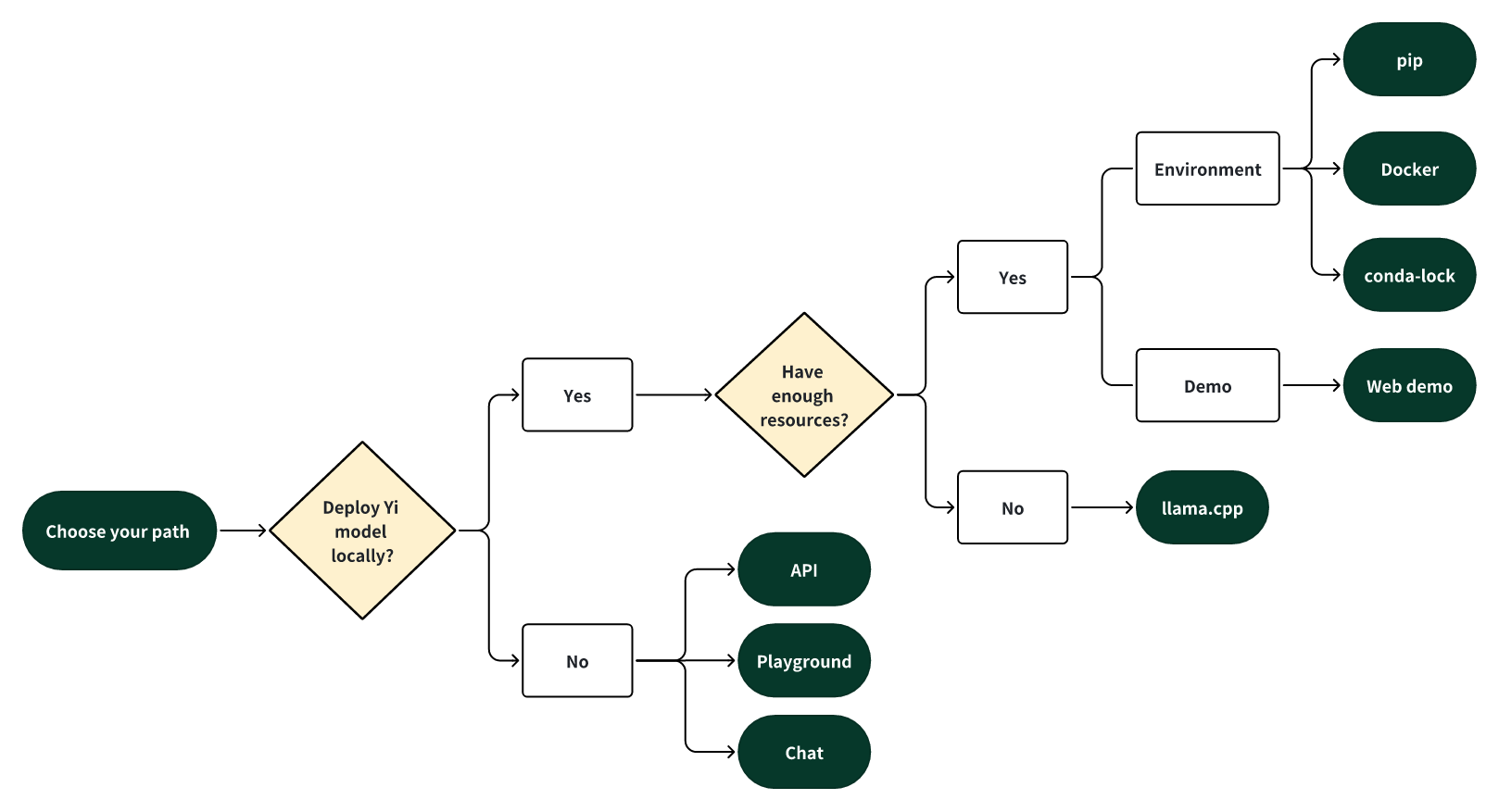

Choose your path

Select one of the following paths to begin your journey with Yi!

🎯 Deploy Yi locally

If you prefer to deploy Yi models locally,

- 🙋♀️ and you have sufficient resources (for example, NVIDIA A800 80GB), you can follow the steps below.

Quick start - pip

Quick start - docker

Quick start - llama.cpp

Quick start - conda-lock

Web demo

✨ Features

Introduction

- 🤖 The Yi series models are the next generation of open-source large language models trained from scratch by 01.AI.

- 🙌 Targeted as bilingual language models and trained on a 3T-token multilingual corpus, the Yi series models have become some of the strongest LLMs worldwide, showing promise in language understanding, commonsense reasoning, reading comprehension, and more. For example,

- The Yi-34B-Chat model landed in second place (following GPT-4 Turbo), outperforming other LLMs (such as GPT-4, Mixtral, Claude) on the AlpacaEval Leaderboard (based on data available up to January 2024).

- The Yi-34B model ranked first among all existing open-source models (such as Falcon-180B, Llama-70B, Claude) in both English and Chinese on various benchmarks, including the Hugging Face Open LLM Leaderboard (pre-trained) and C-Eval (based on data available up to November 2023).

- 🙏 (Credits to Llama) Thanks to the Transformer and Llama open-source communities, which reduce the effort required to build from scratch and enable the use of the same tools within the AI ecosystem.

If you're interested in Yi's adoption of Llama architecture and license usage policy, see Yi's relation with Llama. ⬇️

> 💡 TL;DR
>
> The Yi series models adopt the same model architecture as Llama but are **NOT** derivatives of Llama.
>
> - Both Yi and Llama are based on the Transformer structure, which has been the standard architecture for large language models since 2018.
> - Grounded in the Transformer architecture, Llama has become a new cornerstone for the majority of state-of-the-art open-source models due to its excellent stability, reliable convergence, and robust compatibility. This positions Llama as the recognized foundational framework for models including Yi.
> - Thanks to the Transformer and Llama architectures, other models can leverage their power, reducing the effort required to build from scratch and enabling the use of the same tools within their ecosystems.
> - However, the Yi series models are NOT derivatives of Llama, as they do not use Llama's weights.
> - As Llama's structure is employed by the majority of open-source models, the key factors that determine model performance are training datasets, training pipelines, and training infrastructure.
> - Developing in a unique and proprietary way, Yi has independently created its own high-quality training datasets, efficient training pipelines, and robust training infrastructure entirely from the ground up. This effort has led to excellent performance, with the Yi series models ranking just behind GPT-4 and surpassing Llama on the [Alpaca Leaderboard in Dec 2023](https://tatsu-lab.github.io/alpaca_eval/).

News

🔥 2024-07-29: The Yi Cookbook 1.0 is released, featuring tutorials and examples in both Chinese and English.

🎯 2024-05-13: The Yi-1.5 series models are open-sourced, further improving coding, math, reasoning, and instruction-following abilities.

🎯 2024-03-16: The Yi-9B-200K is open-sourced and available to the public.

🎯 2024-03-08: Yi Tech Report is published!

🔔 2024-03-07: The long text capability of the Yi-34B-200K has been enhanced.

In the "Needle-in-a-Haystack" test, the Yi-34B-200K's performance improved by 10.5 percentage points, rising from 89.3% to 99.8%. We continued pre-training the model on a 5B-token long-context data mixture, which yields near-all-green performance.
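The two scores quoted above can be read either as an absolute gain (in percentage points) or as a relative gain. The short sketch below only redoes that arithmetic on the two reported numbers; the function name `improvement` is made up for illustration and is not part of any Yi tooling.

```python
def improvement(before: float, after: float) -> tuple[float, float]:
    """Return (absolute gain in percentage points, relative gain in percent)."""
    absolute = after - before
    relative = absolute / before * 100
    return absolute, relative


# Scores reported for Yi-34B-200K before and after the update.
points, relative = improvement(89.3, 99.8)
print(f"{points:.1f} percentage points, {relative:.1f}% relative")
```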

🎯 2024-03-06: The Yi-9B is open-sourced and available to the public.

Yi-9B stands out as the top performer among a range of similar-sized open-source models (including Mistral-7B, SOLAR-10.7B, Gemma-7B, DeepSeek-Coder-7B-Base-v1.5 and more), particularly excelling in code, math, common-sense reasoning, and reading comprehension.

🎯 2024-01-23: The Yi-VL models, Yi-VL-34B and Yi-VL-6B, are open-sourced and available to the public.

Yi-VL-34B has ranked first among all existing open-source models in the latest benchmarks, including MMMU and CMMMU (based on data available up to January 2024).

🎯 2023-11-23: Chat models are open-sourced and available to the public.

This release contains two chat models based on previously released base models, two 8-bit models quantized by GPTQ, and two 4-bit models quantized by AWQ.

- `Yi-34B-Chat`

- `Yi-34B-Chat-4bits`

- `Yi-34B-Chat-8bits`

- `Yi-6B-Chat`

- `Yi-6B-Chat-4bits`

- `Yi-6B-Chat-8bits`

You can try some of them interactively at:

- [Hugging Face](https://huggingface.co/spaces/01-ai/Yi-34B-Chat)

- Replicate

🔔 2023-11-23: The Yi Series Models Community License Agreement is updated to v2.1.

🔥 2023-11-08: Invited test of Yi-34B chat model.

Application form:

- [English](https://cn.mikecrm.com/l91ODJf)

- [Chinese](https://cn.mikecrm.com/gnEZjiQ)

🎯 2023-11-05: The base models, Yi-6B-200K and Yi-34B-200K, are open-sourced and available to the public.

This release contains two base models with the same parameter sizes as the previous release, except that the context window is extended to 200K.

🎯 2023-11-02: The base models, Yi-6B and Yi-34B, are open-sourced and available to the public.

The first public release contains two bilingual (English/Chinese) base models with parameter sizes of 6B and 34B. Both are trained with a 4K sequence length and can be extended to 32K at inference time.

Models

Yi models come in multiple sizes and cater to different use cases. You can also fine-tune Yi models to meet your specific requirements. If you want to deploy Yi models, make sure you meet the software and hardware requirements.

Chat models

| Model | Download |
|---|---|
| Yi-34B-Chat | • [🤗 Hugging Face](https://huggingface.co/01-ai/Yi-34B-Chat) • [🤖 ModelScope](https://www.modelscope.cn/models/01ai/Yi-34B-Chat/summary) • [🟣 wisemodel](https://wisemodel.cn/models/01.AI/Yi-34B-Chat) |
| Yi-34B-Chat-4bits | • [🤗 Hugging Face](https://huggingface.co/01-ai/Yi-34B-Chat-4bits) • [🤖 ModelScope](https://www.modelscope.cn/models/01ai/Yi-34B-Chat-4bits/summary) • [🟣 wisemodel](https://wisemodel.cn/models/01.AI/Yi-34B-Chat-4bits) |
| Yi-34B-Chat-8bits | • [🤗 Hugging Face](https://huggingface.co/01-ai/Yi-34B-Chat-8bits) • [🤖 ModelScope](https://www.modelscope.cn/models/01ai/Yi-34B-Chat-8bits/summary) • [🟣 wisemodel](https://wisemodel.cn/models/01.AI/Yi-34B-Chat-8bits) |
| Yi-6B-Chat | • [🤗 Hugging Face](https://huggingface.co/01-ai/Yi-6B-Chat) • [🤖 ModelScope](https://www.modelscope.cn/models/01ai/Yi-6B-Chat/summary) • [🟣 wisemodel](https://wisemodel.cn/models/01.AI/Yi-6B-Chat) |
| Yi-6B-Chat-4bits | • [🤗 Hugging Face](https://huggingface.co/01-ai/Yi-6B-Chat-4bits) • [🤖 ModelScope](https://www.modelscope.cn/models/01ai/Yi-6B-Chat-4bits/summary) • [🟣 wisemodel](https://wisemodel.cn/models/01.AI/Yi-6B-Chat-4bits) |
| Yi-6B-Chat-8bits | • [🤗 Hugging Face](https://huggingface.co/01-ai/Yi-6B-Chat-8bits) • [🤖 ModelScope](https://www.modelscope.cn/models/01ai/Yi-6B-Chat-8bits/summary) • [🟣 wisemodel](https://wisemodel.cn/models/01.AI/Yi-6B-Chat-8bits) |

- 4-bit series models are quantized by AWQ.

- 8-bit series models are quantized by GPTQ.

- All quantized models have a low barrier to use since they can be deployed on consumer-grade GPUs (e.g., 3090, 4090).
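To give a rough feel for why quantization lowers the hardware barrier, the sketch below estimates the memory taken by the weights alone at different bit widths. It is a back-of-the-envelope calculation, not a measured requirement: the parameter counts (6 and 34 billion) are taken nominally from the model names, the helper `weight_memory_gb` is hypothetical, and real usage is higher because of the KV cache, activations, and quantization metadata.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate memory for the weights alone, in GB (10^9 bytes)."""
    bytes_total = params_billion * 1e9 * bits_per_weight / 8
    return bytes_total / 1e9


# Nominal parameter counts assumed from the model names (an assumption).
for name, params in [("Yi-6B-Chat", 6), ("Yi-34B-Chat", 34)]:
    for bits in (16, 8, 4):
        print(f"{name} @ {bits}-bit: ~{weight_memory_gb(params, bits):.0f} GB")
```

Under these assumptions, the 4-bit 34B weights come to about 17 GB, which is below the 24 GB of a single 3090 or 4090, while the 8-bit 34B weights (about 34 GB) would likely need more than one such card.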

Base models

| Model | Download |
|---|---|
| Yi-34B | • [🤗 Hugging Face](https://huggingface.co/01-ai/Yi-34B) • [🤖 ModelScope](https://www.modelscope.cn/models/01ai/Yi-34B/summary) • [🟣 wisemodel](https://wisemodel.cn/models/01.AI/Yi-6B-Chat-8bits) |
| Yi-34B-200K | • [🤗 Hugging Face](https://huggingface.co/01-ai/Yi-34B-200K) • [🤖 ModelScope](https://www.modelscope.cn/models/01ai/Yi-34B-200K/summary) • [🟣 wisemodel](https://wisemodel.cn/models/01.AI/Yi-6B-Chat-8bits) |
| Yi-9B | • [🤗 Hugging Face](https://huggingface.co/01-ai/Yi-9B) • [🤖 ModelScope](https://wisemodel.cn/models/01.AI/Yi-6B-Chat-8bits) • [🟣 wisemodel](https://wisemodel.cn/models/01.AI/Yi-9B) |
| Yi-9B-200K | • [🤗 Hugging Face](https://huggingface.co/01-ai/Yi-9B-200K) • [🤖 ModelScope](https://wisemodel.cn/models/01.AI/Yi-9B-200K) • [🟣 wisemodel](https://wisemodel.cn/models/01.AI/Yi-6B-Chat-8bits) |
| Yi-6B | • [🤗 Hugging Face](https://huggingface.co/01-ai/Yi-6B) • [🤖 ModelScope](https://www.modelscope.cn/models/01ai/Yi-6B/summary) • [🟣 wisemodel](https://wisemodel.cn/models/01.AI/Yi-6B-Chat-8bits) |
| Yi-6B-200K | • [🤗 Hugging Face](https://huggingface.co/01-ai/Yi-6B-200K) • [🤖 ModelScope](https://www.modelscope.cn/models/01ai/Yi-6B-200K/summary) • [🟣 wisemodel](https://wisemodel.cn/models/01.AI/Yi-6B-Chat-8bits) |

- A 200K-token context window is roughly equivalent to 400,000 Chinese characters.

- If you want to use the previous version of the Yi-34B-200K (released on Nov 5, 2023), run `git checkout 069cd341d60f4ce4b07ec394e82b79e94f656cf` to download the weights.
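The rule of thumb above (200K tokens for about 400,000 Chinese characters, so roughly two characters per token) can be turned into a quick pre-check before sending a long document to a 200K model. This is only a heuristic sketch: the helper names are hypothetical, "200K" is assumed to mean 200,000 tokens, and the real count depends on the tokenizer and the text.

```python
import math

# Ratio implied by the note above: about 2 Chinese characters per token.
CHARS_PER_TOKEN = 400_000 / 200_000


def estimate_tokens(chinese_text: str) -> int:
    """Rough token estimate for Chinese text using the 2-chars-per-token heuristic."""
    return math.ceil(len(chinese_text) / CHARS_PER_TOKEN)


def fits_in_context(chinese_text: str, context_tokens: int = 200_000) -> bool:
    """Return True if the estimated token count fits in the context window."""
    return estimate_tokens(chinese_text) <= context_tokens


print(estimate_tokens("你好" * 3))        # 6 characters
print(fits_in_context("字" * 400_000))
```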

Model info

- For chat and base models

| Model | Intro | Default context window | Pretrained tokens | Training data date |
|---|---|---|---|---|
| 6B series models | They are suitable for personal and academic use. | 4K | 3T | Up to June 2023 |
| 9B series models | They are the best at coding and math among the Yi series models. | 4K | Yi-9B is continuously trained based on Yi-6B, using 0.8T tokens. | Up to June 2023 |
| 34B series models | They are suitable for personal, academic, and commercial (particularly for small and medium-sized enterprises) purposes. They are a cost-effective solution equipped with emergent abilities. | 4K | 3T | Up to June 2023 |

- For chat models

For chat model limitations, see the explanations below. ⬇️

The released chat model has undergone exclusive training using Supervised Fine-Tuning (SFT). Compared to other standard chat models, our model produces more diverse responses, making it suitable for various downstream tasks, such as creative scenarios. Furthermore, this diversity is expected to enhance the likelihood of generating higher quality responses, which will be advantageous for subsequent Reinforcement Learning (RL) training.

However, this higher diversity might amplify certain existing issues, including:

- Hallucination: This refers to the model generating factually incorrect or nonsensical information. With the model's responses being more varied, there is a higher chance of hallucinations that are not based on accurate data or logical reasoning.

- Non-determinism in re-generation: When attempting to regenerate or sample responses, inconsistencies in the outcomes may occur. The increased diversity can lead to varying results even under similar input conditions.

- Cumulative error: This occurs when errors in the model's responses compound over time. As the model generates more diverse responses, the likelihood of small inaccuracies building up into larger errors increases, especially in complex tasks like extended reasoning and mathematical problem-solving.

To achieve more coherent and consistent responses, it is advisable to adjust generation configuration parameters such as temperature, top_p, or top_k. These adjustments can help balance creativity and coherence in the model's outputs.
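To illustrate how the generation parameters mentioned in this section (temperature, top_k, top_p) trade diversity for consistency, here is a minimal, library-free sketch of the usual sampling pipeline. It is not Yi's inference code: the function `sample_token` and the toy logits are assumptions made for illustration, and real inference libraries may apply these filters in a different order or with different edge-case handling.

```python
import math
import random


def sample_token(logits, temperature=1.0, top_k=0, top_p=1.0, rng=None):
    """Sample a token index from raw logits using temperature, top-k and top-p."""
    rng = rng or random.Random()
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    # Temperature scaling: values below 1 sharpen the distribution.
    scaled = [x / temperature for x in logits]
    # Softmax, subtracting the maximum for numerical stability.
    peak = max(scaled)
    weights = [math.exp(x - peak) for x in scaled]
    total = sum(weights)
    probs = sorted(((w / total, i) for i, w in enumerate(weights)), reverse=True)
    # Top-k: keep only the k most likely tokens (0 disables the filter).
    if top_k > 0:
        probs = probs[:top_k]
    # Top-p (nucleus): keep the smallest prefix whose cumulative mass reaches top_p.
    kept, mass = [], 0.0
    for p, i in probs:
        kept.append((p, i))
        mass += p
        if mass >= top_p:
            break
    # Draw one token from the survivors; choices() renormalizes the weights.
    indices = [i for _, i in kept]
    return rng.choices(indices, weights=[p for p, _ in kept], k=1)[0]


# Toy logits for a 4-token vocabulary (hypothetical values).
logits = [2.0, 1.0, 0.5, -1.0]
rng = random.Random(0)
print([sample_token(logits, temperature=0.7, top_k=3, top_p=0.9, rng=rng) for _ in range(10)])
```

Lowering `temperature`, `top_k`, or `top_p` narrows the set of tokens that can be drawn, which tends to make regenerated answers more consistent at the cost of variety.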

📚 Documentation

Fine-tuning

Quantization

Deployment

FAQ

Learning hub

🔧 Technical Details

Ecosystem

Upstream

Downstream

Serving

Quantization

Fine-tuning

API

Benchmarks

Base model performance

Chat model performance

Tech report

Citation

📄 License

The Yi series models are released under the Apache-2.0 license.
