🚀 Stable Diffusion x2 latent upscaler model card

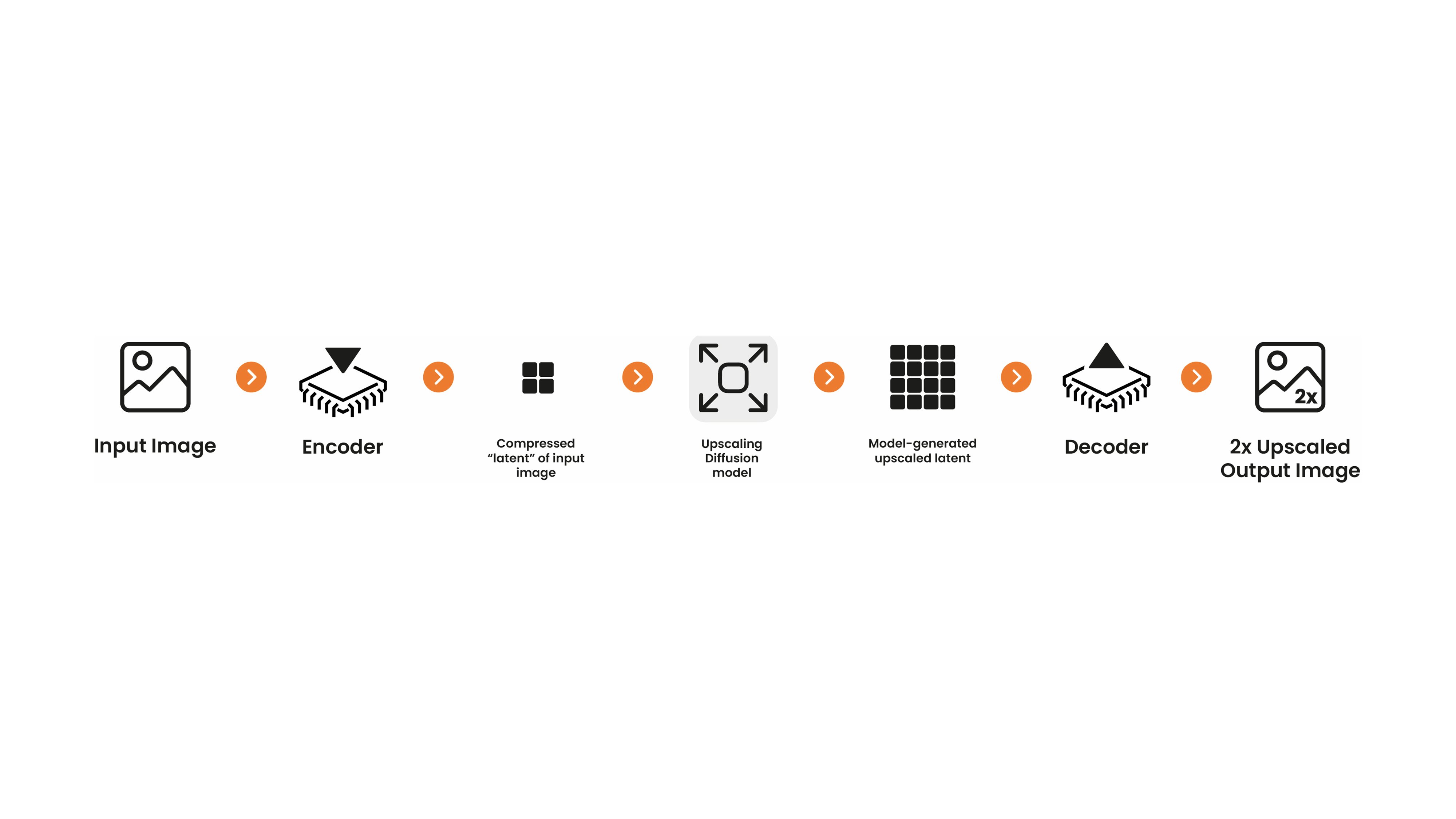

This model card focuses on a latent diffusion-based upscaler developed by Katherine Crowson in collaboration with Stability AI. It was trained on a high-resolution subset of the LAION-2B dataset. This diffusion model operates in the same latent space as the Stable Diffusion model and decodes into a full-resolution image. You can use it with Stable Diffusion by taking the generated latent from Stable Diffusion and passing it into the upscaler before decoding with your standard VAE. Alternatively, you can take any image, encode it into the latent space, use the upscaler, and then decode it.

Note: This upscaling model is specifically designed for Stable Diffusion as it can upscale Stable Diffusion's latent denoised image embeddings. This enables very fast text-to-image + upscaling pipelines as all intermediate states can be kept on the GPU. For more information, see the example below. This model works on all Stable Diffusion checkpoints.

| Original output image |

2x upscaled output image |

|

|

✨ Features

- Developed by Katherine Crowson in collaboration with Stability AI.

- A diffusion-based latent upscaler operating in the same latent space as Stable Diffusion.

- Trained on a high - resolution subset of the LAION - 2B dataset.

- Enables fast text - to - image + upscaling pipelines by keeping all intermediate states on the GPU.

- Works on all Stable Diffusion checkpoints.

📦 Installation

pip install git+https://github.com/huggingface/diffusers.git

pip install transformers accelerate scipy safetensors

💻 Usage Examples

Basic Usage

from diffusers import StableDiffusionLatentUpscalePipeline, StableDiffusionPipeline

import torch

pipeline = StableDiffusionPipeline.from_pretrained("CompVis/stable-diffusion-v1-4", torch_dtype=torch.float16)

pipeline.to("cuda")

upscaler = StableDiffusionLatentUpscalePipeline.from_pretrained("stabilityai/sd-x2-latent-upscaler", torch_dtype=torch.float16)

upscaler.to("cuda")

prompt = "a photo of an astronaut high resolution, unreal engine, ultra realistic"

generator = torch.manual_seed(33)

low_res_latents = pipeline(prompt, generator=generator, output_type="latent").images

upscaled_image = upscaler(

prompt=prompt,

image=low_res_latents,

num_inference_steps=20,

guidance_scale=0,

generator=generator,

).images[0]

upscaled_image.save("astronaut_1024.png")

with torch.no_grad():

image = pipeline.decode_latents(low_res_latents)

image = pipeline.numpy_to_pil(image)[0]

image.save("astronaut_512.png")

Result:

512 - res Astronaut

1024 - res Astronaut

Advanced Usage

⚠️ Important Note

Despite not being a dependency, we highly recommend you to install xformers for memory efficient attention (better performance). If you have low GPU RAM available, make sure to add a pipe.enable_attention_slicing() after sending it to cuda for less VRAM usage (to the cost of speed).

📚 Documentation

Model Details

Uses

Direct Use

The model is intended for research purposes only. Possible research areas and tasks include:

- Safe deployment of models which have the potential to generate harmful content.

- Probing and understanding the limitations and biases of generative models.

- Generation of artworks and use in design and other artistic processes.

- Applications in educational or creative tools.

- Research on generative models.

Excluded uses are described below.

Misuse, Malicious Use, and Out-of-Scope Use

Note: This section is originally taken from the DALLE - MINI model card, was used for Stable Diffusion v1, but applies in the same way to Stable Diffusion v2.

The model should not be used to intentionally create or disseminate images that create hostile or alienating environments for people. This includes generating images that people would foreseeably find disturbing, distressing, or offensive; or content that propagates historical or current stereotypes.

Out-of-Scope Use

The model was not trained to be factual or true representations of people or events, and therefore using the model to generate such content is out-of-scope for the abilities of this model.

Misuse and Malicious Use

Using the model to generate content that is cruel to individuals is a misuse of this model. This includes, but is not limited to:

- Generating demeaning, dehumanizing, or otherwise harmful representations of people or their environments, cultures, religions, etc.

- Intentionally promoting or propagating discriminatory content or harmful stereotypes.

- Impersonating individuals without their consent.

- Sexual content without consent of the people who might see it.

- Mis - and disinformation

- Representations of egregious violence and gore

- Sharing of copyrighted or licensed material in violation of its terms of use.

- Sharing content that is an alteration of copyrighted or licensed material in violation of its terms of use.

Limitations and Bias

Limitations

- The model does not achieve perfect photorealism.

- The model cannot render legible text.

- The model does not perform well on more difficult tasks which involve compositionality, such as rendering an image corresponding to “A red cube on top of a blue sphere”.

- Faces and people in general may not be generated properly.

- The model was trained mainly with English captions and will not work as well in other languages.

- The autoencoding part of the model is lossy.

- The model was trained on a subset of the large - scale dataset LAION - 5B, which contains adult, violent and sexual content. To partially mitigate this, we have filtered the dataset using LAION's NFSW detector (see Training section).

Bias

While the capabilities of image generation models are impressive, they can also reinforce or exacerbate social biases. Stable Diffusion v2 was primarily trained on subsets of LAION - 2B(en), which consists of images that are limited to English descriptions. Texts and images from communities and cultures that use other languages are likely to be insufficiently accounted for. This affects the overall output of the model, as white and western cultures are often set as the default. Further, the ability of the model to generate content with non - English prompts is significantly worse than with English - language prompts. Stable Diffusion v2 mirrors and exacerbates biases to such a degree that viewer discretion must be advised irrespective of the input or its intent.

📄 License

This model is released under the CreativeML Open RAIL++-M License.

Transformers

Transformers