🚀 [MaziyarPanahi/WizardLM-2-7B-GGUF]

This repository, MaziyarPanahi/WizardLM-2-7B-GGUF, provides GGUF-format model files for the microsoft/WizardLM-2-7B text generation model.

🚀 Quick Start

Model Information

- Model creator: microsoft

- Original model: microsoft/WizardLM-2-7B

Prompt Template

{system_prompt}

USER: {prompt}

ASSISTANT: </s>

or

A chat between a curious user and an artificial intelligence assistant. The assistant gives helpful,

detailed, and polite answers to the user's questions. USER: Hi ASSISTANT: Hello.</s>

USER: {prompt} ASSISTANT: </s>......

This prompt template is taken from the original README.

✨ Features

News 🔥🔥🔥 [2024/04/15]

We introduce and open-source WizardLM-2, our next-generation state-of-the-art large language models. These models have improved performance in complex chat, multilingual, reasoning, and agent tasks. The new family includes three cutting-edge models: WizardLM-2 8x22B, WizardLM-2 70B, and WizardLM-2 7B.

- WizardLM-2 8x22B is our most advanced model, demonstrating highly competitive performance compared to leading proprietary works and consistently outperforming all existing state-of-the-art open-source models.

- WizardLM-2 70B reaches top-tier reasoning capabilities and is the first choice among models of its size.

- WizardLM-2 7B is the fastest and achieves performance comparable to leading open-source models 10x its size.

For more details of WizardLM-2, please read our release blog post and the upcoming paper.

Model Details

| Property | Details |

|---|---|

| Model name | WizardLM-2 7B |

| Developed by | WizardLM@Microsoft AI |

| Base model | mistralai/Mistral-7B-v0.1 |

| Parameters | 7B |

| Language(s) | Multilingual |

| Blog | Introducing WizardLM-2 |

| Repository | https://github.com/nlpxucan/WizardLM |

| Paper | WizardLM-2 (Upcoming) |

| License | Apache 2.0 |

Model Capacities

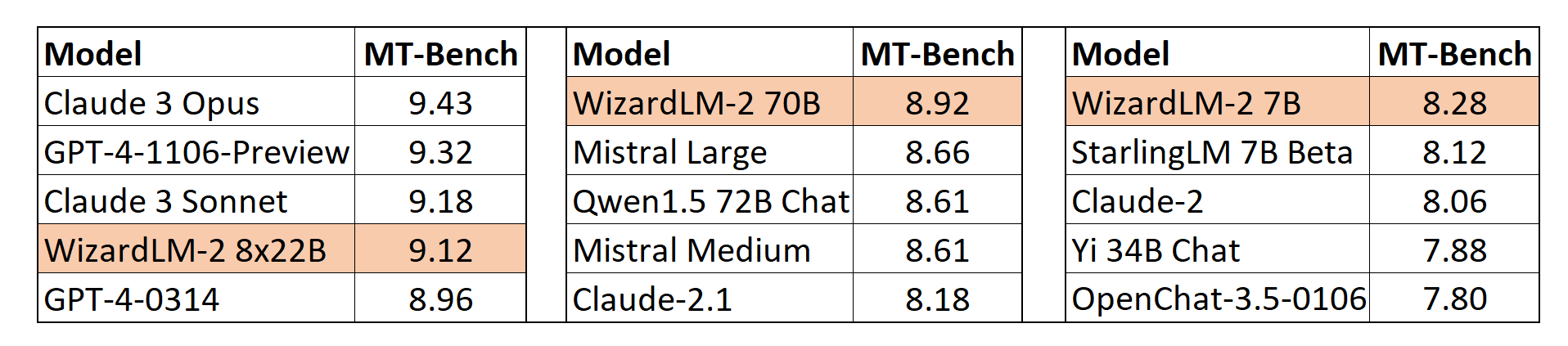

MT-Bench

We adopt the automatic MT-Bench evaluation framework based on GPT-4 proposed by lmsys to assess the performance of models. The WizardLM-2 8x22B demonstrates highly competitive performance compared to the most advanced proprietary models. Meanwhile, WizardLM-2 7B and WizardLM-2 70B are top-performing models among other leading baselines at 7B to 70B model scales.

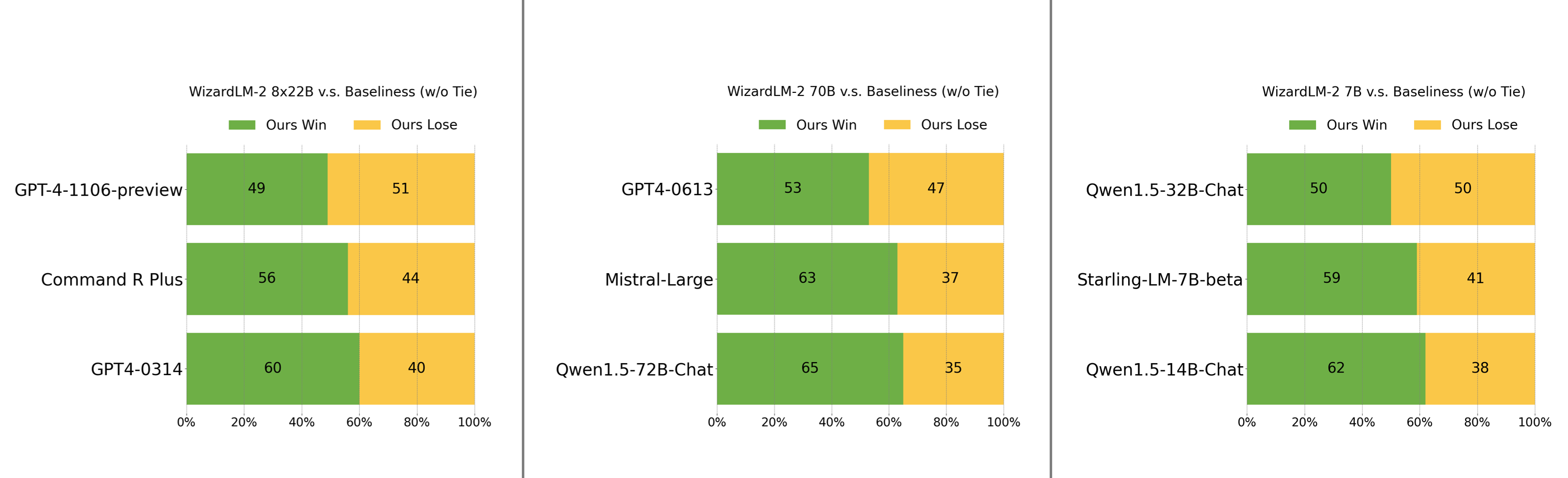

Human Preferences Evaluation

We collected a complex and challenging set of real-world instructions covering writing, coding, math, reasoning, agent, and multilingual tasks. We report the win:loss rate, excluding ties:

- WizardLM-2 8x22B is slightly behind GPT-4-1106-preview and significantly stronger than Command R Plus and GPT4-0314.

- WizardLM-2 70B is better than GPT4-0613, Mistral-Large, and Qwen1.5-72B-Chat.

- WizardLM-2 7B is comparable with Qwen1.5-32B-Chat and surpasses Qwen1.5-14B-Chat and Starling-LM-7B-beta.

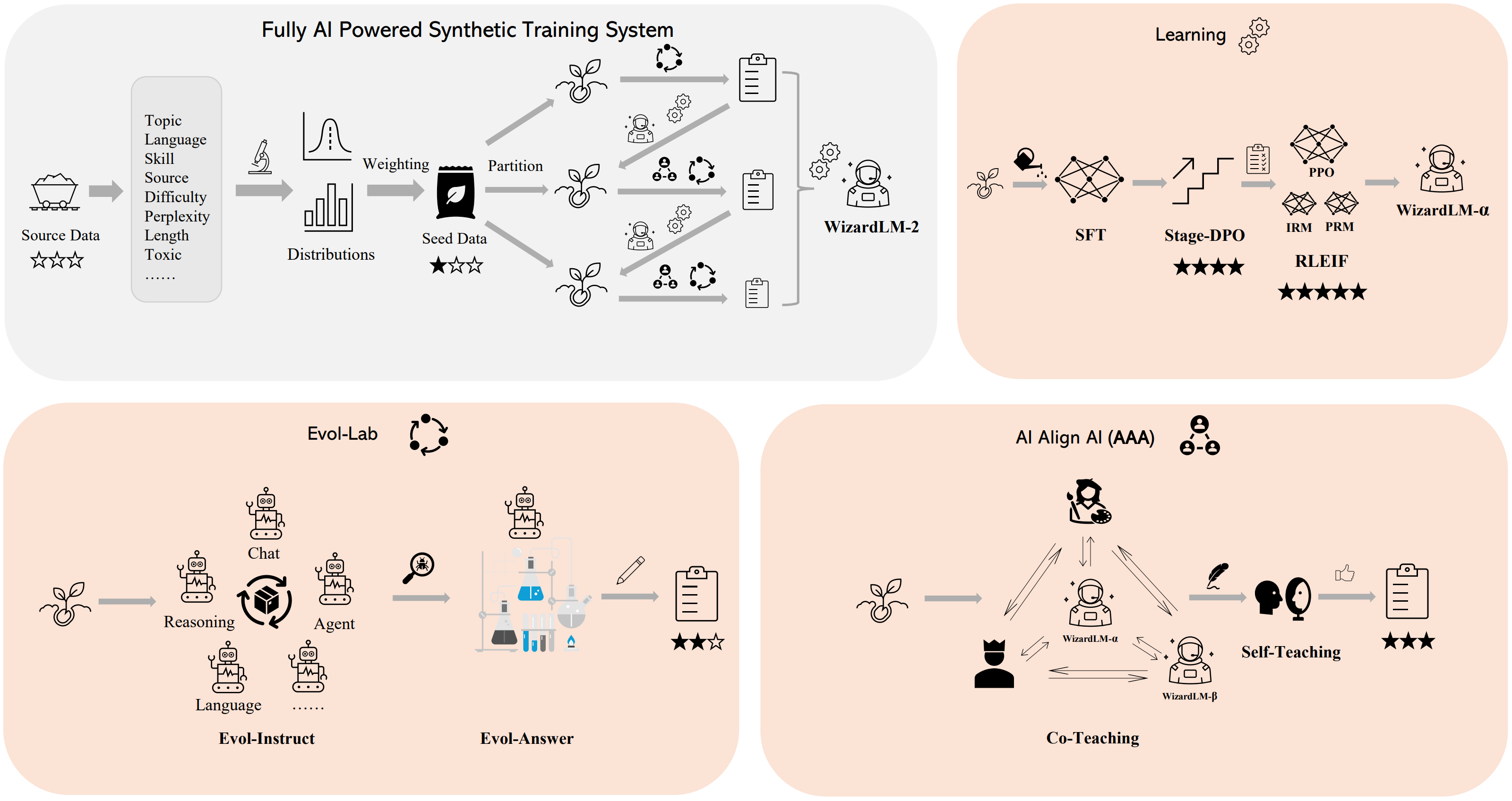

Method Overview

We built a fully AI-powered synthetic training system to train WizardLM-2 models. For more details of this system, please refer to our blog.

📦 Installation

About GGUF

GGUF is a new format introduced by the llama.cpp team on August 21st, 2023. It is a replacement for GGML, which is no longer supported by llama.cpp.

Here is an incomplete list of clients and libraries that are known to support GGUF:

- llama.cpp. The source project for GGUF. Offers a CLI and a server option.

- text-generation-webui, the most widely used web UI, with many features and powerful extensions. Supports GPU acceleration.

- KoboldCpp, a fully featured web UI, with GPU accel across all platforms and GPU architectures. Especially good for storytelling.

- GPT4All, a free and open-source local running GUI, supporting Windows, Linux, and macOS with full GPU accel.

- LM Studio, an easy-to-use and powerful local GUI for Windows and macOS (Silicon), with GPU acceleration. Linux available, in beta as of 27/11/2023.

- LoLLMS Web UI, a great web UI with many interesting and unique features, including a full model library for easy model selection.

- Faraday.dev, an attractive and easy-to-use character-based chat GUI for Windows and macOS (both Silicon and Intel), with GPU acceleration.

- llama-cpp-python, a Python library with GPU accel, LangChain support, and OpenAI-compatible API server.

- candle, a Rust ML framework with a focus on performance, including GPU support, and ease of use.

- ctransformers, a Python library with GPU accel, LangChain support, and OpenAI-compatible API server. Note, as of time of writing (November 27th, 2023), ctransformers has not been updated in a long time and does not support many recent models.

Explanation of Quantisation Methods

The new methods available are:

- GGML_TYPE_Q2_K - "type-1" 2-bit quantization in super-blocks containing 16 blocks, each block having 16 weights. Block scales and mins are quantized with 4 bits. This ends up effectively using 2.5625 bits per weight (bpw).

- GGML_TYPE_Q3_K - "type-0" 3-bit quantization in super-blocks containing 16 blocks, each block having 16 weights. Scales are quantized with 6 bits. This ends up using 3.4375 bpw.

- GGML_TYPE_Q4_K - "type-1" 4-bit quantization in super-blocks containing 8 blocks, each block having 32 weights. Scales and mins are quantized with 6 bits. This ends up using 4.5 bpw.

- GGML_TYPE_Q5_K - "type-1" 5-bit quantization. Same super-block structure as GGML_TYPE_Q4_K, resulting in 5.5 bpw.

- GGML_TYPE_Q6_K - "type-0" 6-bit quantization. Super-blocks with 16 blocks, each block having 16 weights. Scales are quantized with 8 bits. This ends up using 6.5625 bpw.

How to Download GGUF Files

⚠️ Important Note

You almost never want to clone the entire repo! Multiple different quantisation formats are provided, and most users only want to pick and download a single file.
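One rough way to choose a single file is to estimate its size from the bits-per-weight (bpw) figures quoted in the quantisation notes. The sketch below is only an approximation: the `7e9` parameter count is the nominal "7B" from the model table (an assumption, not an exact count), and published files such as Q4_K_M may mix several quantisation types, so actual file sizes will differ.

```python
# bpw values as quoted in the quantisation notes of this README.
BPW = {
    "Q2_K": 2.5625,
    "Q3_K": 3.4375,
    "Q4_K": 4.5,
    "Q5_K": 5.5,
    "Q6_K": 6.5625,
}

# Assumption: nominal parameter count taken from the "7B" label.
N_PARAMS = 7e9


def estimate_size_gb(n_params: float, bpw: float) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bpw / 8 / 1e9


for name, bpw in BPW.items():
    print(f"{name}: ~{estimate_size_gb(N_PARAMS, bpw):.2f} GB")
```

The estimate ignores file metadata and any tensors stored at higher precision, so treat it as a lower bound when checking free disk space or memory.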

The following clients/libraries will automatically download models for you, providing a list of available models to choose from:

- LM Studio

- LoLLMS Web UI

- Faraday.dev

In text-generation-webui

Under Download Model, you can enter the model repo: MaziyarPanahi/WizardLM-2-7B-GGUF and below it, a specific filename to download, such as: WizardLM-2-7B.Q4_K_M.gguf. Then click Download.

On the command line, including multiple files at once

I recommend using the huggingface-hub Python library:

pip3 install huggingface-hub

Then you can download any individual model file to the current directory, at high speed, with a command like this:

huggingface-cli download MaziyarPanahi/WizardLM-2-7B-GGUF WizardLM-2-7B.Q4_K_M.gguf --local-dir . --local-dir-use-symlinks False
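The same single-file download can also be done from Python with `hf_hub_download` from the huggingface-hub library. This is a minimal sketch: the helper names `gguf_filename` and `download_quant` are hypothetical, and the assumption that every quantisation follows the `WizardLM-2-7B.<QUANT>.gguf` naming pattern is inferred from the file name used in this README, so check the repository file list before relying on it.

```python
REPO_ID = "MaziyarPanahi/WizardLM-2-7B-GGUF"


def gguf_filename(quant: str = "Q4_K_M") -> str:
    """Build a file name following the pattern used in this README (assumed)."""
    return f"WizardLM-2-7B.{quant}.gguf"


def download_quant(quant: str = "Q4_K_M", local_dir: str = ".") -> str:
    """Download one quantised file and return its local path."""
    # Imported lazily so gguf_filename() works without the library installed.
    from huggingface_hub import hf_hub_download

    return hf_hub_download(
        repo_id=REPO_ID,
        filename=gguf_filename(quant),
        local_dir=local_dir,
    )


# Example (performs a network download, so it is left commented out):
# path = download_quant("Q4_K_M")
```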

More advanced huggingface-cli download usage

You can also download multiple files at once with a pattern:

```shell
huggingface-cli download MaziyarPanahi/WizardLM-2-7B-GGUF --local-dir . --local-dir-use-symlinks False --include='*Q4_K*gguf'
```

For more documentation on downloading with `huggingface-cli`, please see: [HF -> Hub Python Library -> Download files -> Download from the CLI](https://huggingface.co/docs/huggingface_hub/guides/download#download-from-the-cli).

To accelerate downloads on fast connections (1Gbit/s or higher), install hf_transfer:

pip3 install hf_transfer

And set environment variable HF_HUB_ENABLE_HF_TRANSFER to 1:

HF_HUB_ENABLE_HF_TRANSFER=1 huggingface-cli download MaziyarPanahi/WizardLM-2-7B-GGUF WizardLM-2-7B.Q4_K_M.gguf --local-dir . --local-dir-use-symlinks False

Windows Command Line users: You can set the environment variable by running set HF_HUB_ENABLE_HF_TRANSFER=1 before the download command.

💻 Usage Examples

Example llama.cpp command

Make sure you are using llama.cpp from commit d0cee0d or later.

./main -ngl 35 -m WizardLM-2-7B.Q4_K_M.gguf --color -c 32768 --temp 0.7 --repeat_penalty 1.1 -n -1 -p "{system_prompt} USER: {prompt} ASSISTANT:"

💡 Usage Tip

- Change `-ngl 35` to the number of layers to offload to GPU. Remove it if you don't have GPU acceleration.

- Change `-c 32768` to the desired sequence length. For extended sequence models (e.g. 8K, 16K, 32K), the necessary RoPE scaling parameters are read from the GGUF file and set by llama.cpp automatically. Note that longer sequence lengths require much more resources, so you may need to reduce this value.

- If you want to have a chat-style conversation, replace the `-p <PROMPT>` argument with `-i -ins`.

For other parameters and how to use them, please refer to the llama.cpp documentation.

How to run in text-generation-webui

Further instructions can be found in the text-generation-webui documentation, here: text-generation-webui/docs/04 ‐ Model Tab.md.

How to run from Python code

You can use GGUF models from Python using the llama-cpp-python or ctransformers libraries. Note that at the time of writing (Nov 27th, 2023), ctransformers has not been updated for some time.
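As an illustration, a minimal llama-cpp-python sketch might look like the following. The `generate` helper is hypothetical, and the default values (`n_ctx=4096`, `n_gpu_layers=35`, `max_tokens=512`, the stop strings) are assumptions chosen for the example; the sampling settings mirror the llama.cpp command in this README. Adjust them to your hardware.

```python
from pathlib import Path


def generate(model_path: str, prompt: str, n_ctx: int = 4096, n_gpu_layers: int = 35) -> str:
    """Load a GGUF file with llama-cpp-python and return the completion text."""
    # Fail early with a clear error before loading the (large) library and model.
    if not Path(model_path).is_file():
        raise FileNotFoundError(f"GGUF file not found: {model_path}")

    from llama_cpp import Llama  # pip install llama-cpp-python

    llm = Llama(
        model_path=model_path,
        n_ctx=n_ctx,                # context length; larger values need more memory
        n_gpu_layers=n_gpu_layers,  # set to 0 if you have no GPU acceleration
    )
    result = llm(
        prompt,
        max_tokens=512,
        temperature=0.7,
        repeat_penalty=1.1,
        stop=["</s>", "USER:"],  # assumed stop strings for the Vicuna-style format
    )
    return result["choices"][0]["text"]


# Example (requires the model file to be present locally):
# print(generate("./WizardLM-2-7B.Q4_K_M.gguf", "USER: Hi ASSISTANT:"))
```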

📚 Documentation

Usage of Model System Prompts

⚠️ Important Note

WizardLM-2 adopts the prompt format from Vicuna and supports multi-turn conversation. The prompt should be as follows:

A chat between a curious user and an artificial intelligence assistant. The assistant gives helpful,

detailed, and polite answers to the user's questions. USER: Hi ASSISTANT: Hello.</s>

USER: Who are you? ASSISTANT: I am WizardLM.</s>......
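The multi-turn format above can be assembled programmatically. The following is a sketch only: `build_prompt` is a hypothetical helper, and it assumes that turns are concatenated directly after each `</s>` with a single space after the system prompt, since the layout shown here leaves the exact whitespace between turns ambiguous.

```python
SYSTEM_PROMPT = (
    "A chat between a curious user and an artificial intelligence assistant. "
    "The assistant gives helpful, detailed, and polite answers to the user's questions."
)


def build_prompt(history, user_message, system_prompt=SYSTEM_PROMPT):
    """Format past (user, assistant) turns plus a new user message, Vicuna-style."""
    parts = [system_prompt + " "]
    for user_turn, assistant_turn in history:
        # Each completed turn is closed with the end-of-sequence marker.
        parts.append(f"USER: {user_turn} ASSISTANT: {assistant_turn}</s>")
    # The final turn is left open for the model to complete.
    parts.append(f"USER: {user_message} ASSISTANT:")
    return "".join(parts)


print(build_prompt([("Hi", "Hello.")], "Who are you?"))
```

Passing an empty history yields the single-turn form of the template.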

Inference WizardLM-2 Demo Script

We provide WizardLM-2 inference demo code on our GitHub.

📄 License

This model is licensed under the Apache 2.0 license.
