🚀 Model Card for FLAN-T5 XXL

FLAN-T5 XXL is a text-to-text generation model. It has been fine-tuned on more than 1,000 additional tasks covering multiple languages, which improves on the performance of the base T5 model.

📦 Installation

The model is loaded through the Hugging Face Transformers library. Install it with pip, along with accelerate (needed for device_map="auto") and bitsandbytes (needed for INT8 loading):

pip install transformers accelerate bitsandbytes

💻 Usage Examples

Basic Usage

The following example loads the model on CPU and runs a single translation prompt:

from transformers import T5Tokenizer, T5ForConditionalGeneration

tokenizer = T5Tokenizer.from_pretrained("google/flan-t5-xxl")

model = T5ForConditionalGeneration.from_pretrained("google/flan-t5-xxl")

input_text = "translate English to German: How old are you?"

input_ids = tokenizer(input_text, return_tensors="pt").input_ids

outputs = model.generate(input_ids)

print(tokenizer.decode(outputs[0]))
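The snippet above prints the raw decoded sequence, which typically still contains special tokens such as `<pad>` and `</s>`, and it relies on the default generation length. As a minimal sketch, the helper below wraps the same calls and returns clean text. The name `generate_text` and the default of 64 new tokens are illustrative assumptions, not part of the original card.

```python
def generate_text(model, tokenizer, prompt, max_new_tokens=64):
    """Run one prompt through a loaded seq2seq model and return plain text.

    `generate_text` is a hypothetical helper; `model` and `tokenizer` are the
    objects returned by the from_pretrained calls shown above.
    """
    # Tokenize, then move the ids to whichever device holds the model.
    input_ids = tokenizer(prompt, return_tensors="pt").input_ids.to(model.device)
    # max_new_tokens caps the length of the generated answer.
    outputs = model.generate(input_ids, max_new_tokens=max_new_tokens)
    # skip_special_tokens drops markers such as <pad> and </s>.
    return tokenizer.decode(outputs[0], skip_special_tokens=True)
```

It would be called as `generate_text(model, tokenizer, "translate English to German: How old are you?")` once the model and tokenizer are loaded.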

Advanced Usage

Running on GPU

from transformers import T5Tokenizer, T5ForConditionalGeneration

tokenizer = T5Tokenizer.from_pretrained("google/flan-t5-xxl")

model = T5ForConditionalGeneration.from_pretrained("google/flan-t5-xxl", device_map="auto")

input_text = "translate English to German: How old are you?"

input_ids = tokenizer(input_text, return_tensors="pt").input_ids.to("cuda")

outputs = model.generate(input_ids)

print(tokenizer.decode(outputs[0]))

Using Different Precisions

FP16

import torch

from transformers import T5Tokenizer, T5ForConditionalGeneration

tokenizer = T5Tokenizer.from_pretrained("google/flan-t5-xxl")

model = T5ForConditionalGeneration.from_pretrained("google/flan-t5-xxl", device_map="auto", torch_dtype=torch.float16)

input_text = "translate English to German: How old are you?"

input_ids = tokenizer(input_text, return_tensors="pt").input_ids.to("cuda")

outputs = model.generate(input_ids)

print(tokenizer.decode(outputs[0]))

INT8

from transformers import T5Tokenizer, T5ForConditionalGeneration

tokenizer = T5Tokenizer.from_pretrained("google/flan-t5-xxl")

model = T5ForConditionalGeneration.from_pretrained("google/flan-t5-xxl", device_map="auto", load_in_8bit=True)

input_text = "translate English to German: How old are you?"

input_ids = tokenizer(input_text, return_tensors="pt").input_ids.to("cuda")

outputs = model.generate(input_ids)

print(tokenizer.decode(outputs[0]))
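The main reason to choose between these precisions is memory. The sketch below estimates the space needed for the weights alone at each precision. It assumes roughly 11 billion parameters for the XXL checkpoint, a figure this card does not state, and it ignores activations and any layers that an 8-bit loader keeps in higher precision, so treat the results as rough lower bounds.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"float32": 4, "float16": 2, "int8": 1}

# Assumption: about 11 billion parameters (not stated in this card).
ASSUMED_PARAMS = 11_000_000_000


def weight_memory_gb(num_params, dtype):
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


for dtype in BYTES_PER_PARAM:
    print(f"{dtype}: ~{weight_memory_gb(ASSUMED_PARAMS, dtype):.0f} GB")
```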

✨ Features

- Multilingual Support: Supports languages such as English, German, French, Romanian, and more.

- Diverse Task Handling: Capable of handling various tasks including translation, question answering, logical reasoning, scientific knowledge retrieval, and more.

Example Tasks

| Task Type | Example |
| --- | --- |
| Translation | "Translate to German: My name is Arthur" |
| Question Answering | "Please answer to the following question. Who is going to be the next Ballon d'or?" |
| Logical reasoning | "Q: Can Geoffrey Hinton have a conversation with George Washington? Give the rationale before answering." |
| Scientific knowledge | "Please answer the following question. What is the boiling point of Nitrogen?" |
| Yes/no question | "Answer the following yes/no question. Can you write a whole Haiku in a single tweet?" |
| Reasoning task | "Answer the following yes/no question by reasoning step-by-step. Can you write a whole Haiku in a single tweet?" |
| Boolean Expressions | "Q: ( False or not False or False ) is? A: Let's think step by step" |
| Math reasoning | "The square root of x is the cube root of y. What is y to the power of 2, if x = 4?" |
| Premise and hypothesis | "Premise: At my age you will probably have learnt one lesson. Hypothesis: It's not certain how many lessons you'll learn by your thirties. Does the premise entail the hypothesis?" |

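The example tasks differ only in how the instruction is phrased, so the prompts can be assembled from templates. The sketch below is one possible way to do that; `PROMPT_TEMPLATES` and `build_prompt` are hypothetical names, and the template wording is copied from the examples in this card rather than from any official prompt format.

```python
# Templates taken from the example prompts in this card (illustrative only).
PROMPT_TEMPLATES = {
    "translation": "Translate to {language}: {text}",
    "question_answering": "Please answer the following question. {question}",
    "yes_no": "Answer the following yes/no question. {question}",
    "yes_no_reasoning": (
        "Answer the following yes/no question by reasoning step-by-step. "
        "{question}"
    ),
}


def build_prompt(task, **fields):
    """Fill in the template for `task`; raises KeyError for unknown tasks."""
    return PROMPT_TEMPLATES[task].format(**fields)


print(build_prompt("translation", language="German", text="My name is Arthur"))
```

The resulting string can be passed to the tokenizer in place of `input_text` in the usage examples.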
📚 Documentation

Model Details

Uses

Direct Use and Downstream Use

The primary use is research on language models, including research on zero-shot NLP tasks and in-context few-shot learning NLP tasks, such as reasoning and question answering; advancing fairness and safety research; and understanding the limitations of current large language models. See the research paper for further details.

Out-of-Scope Use

More information needed.

Bias, Risks, and Limitations

⚠️ Important Note

Language models, including Flan-T5, can potentially be used for language generation in a harmful way, according to Rae et al. (2021). Flan-T5 should not be used directly in any application without a prior assessment of safety and fairness concerns specific to the application.

Ethical considerations and risks

Flan-T5 is fine-tuned on a large corpus of text data that was not filtered for explicit content or assessed for existing biases. As a result, the model itself is potentially vulnerable to generating equivalently inappropriate content or replicating inherent biases in the underlying data.

Known Limitations

Flan-T5 has not been tested in real-world applications.

Sensitive Use

Flan-T5 should not be applied for any unacceptable use cases, e.g., generation of abusive speech.

Training Details

Training Data

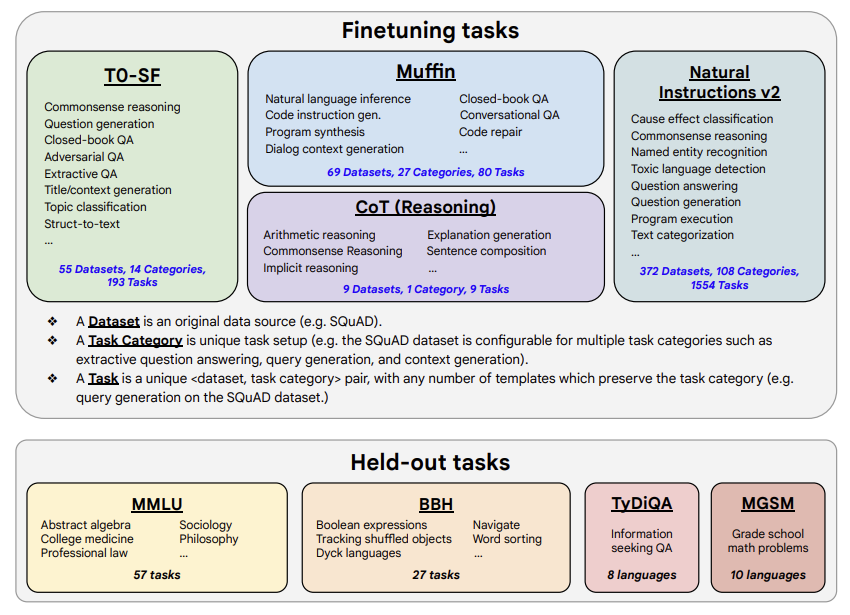

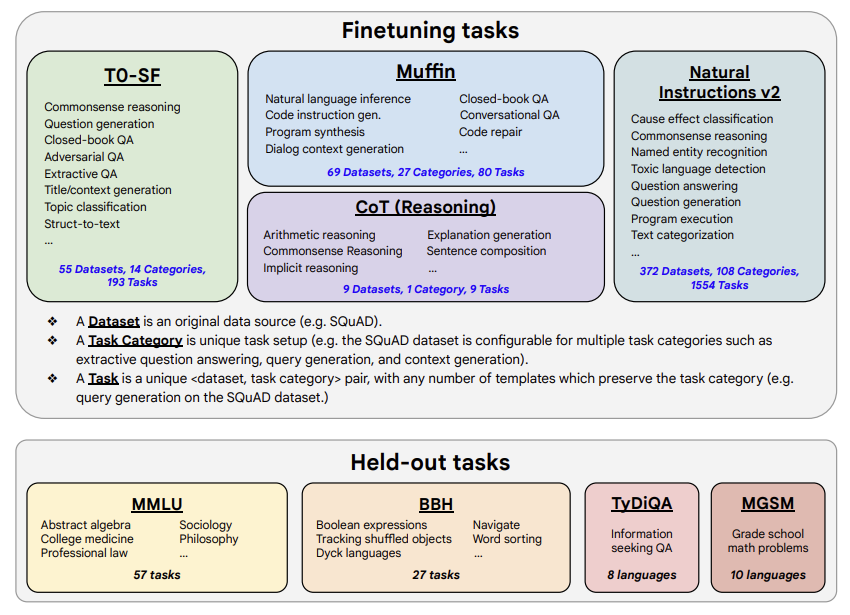

The model was trained on a mixture of tasks, that includes the tasks described in the table below (from the original paper, figure 2):

Training Procedure

These models are based on pretrained T5 (Raffel et al., 2020) and fine-tuned with instructions for better zero-shot and few-shot performance. There is one fine-tuned Flan model per T5 model size. The model was trained on TPU v3 or TPU v4 pods, using the t5x codebase together with JAX.

Evaluation

Testing Data, Factors & Metrics

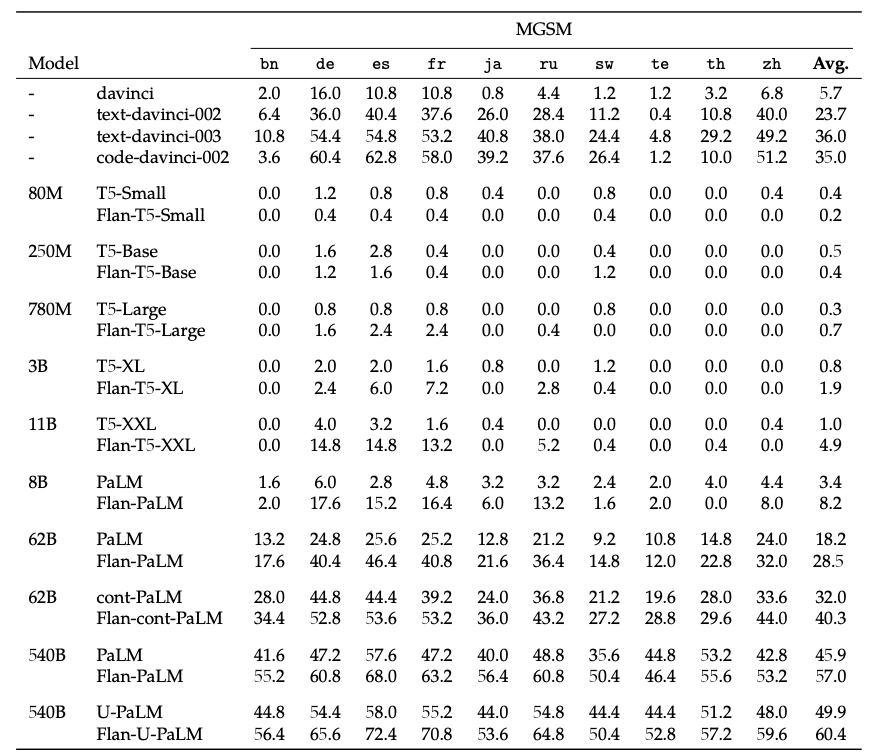

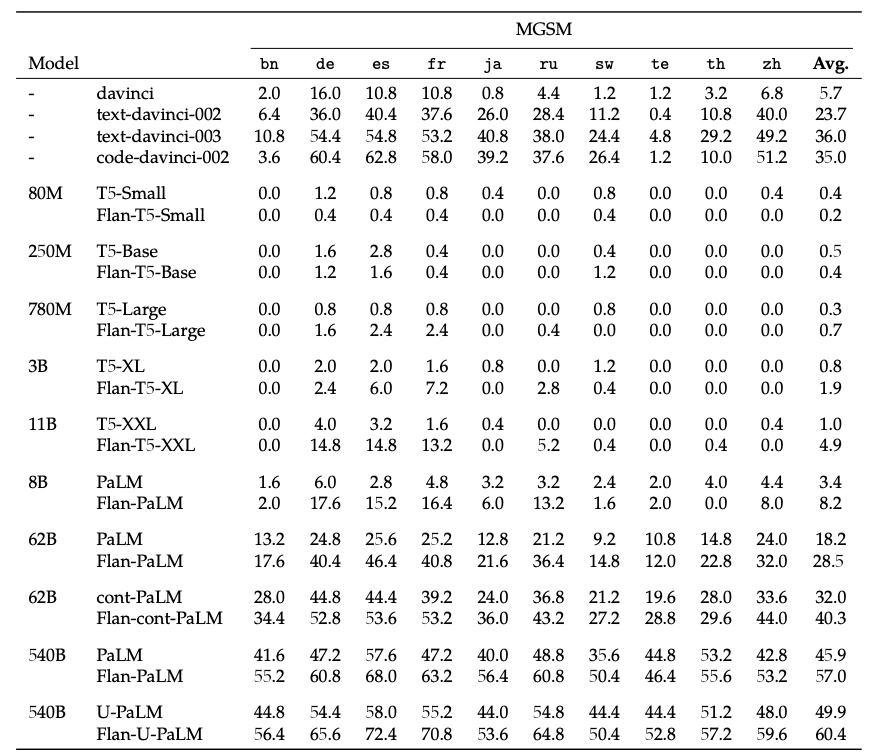

The authors evaluated the model on various tasks covering several languages (1836 in total). See the table below for some quantitative evaluation:

For full details, please check the research paper.

Results

For full results for FLAN-T5 XXL, see the research paper, Table 3.

Environmental Impact

Carbon emissions can be estimated using the Machine Learning Impact calculator presented in Lacoste et al. (2019).

| Property | Details |
| --- | --- |
| Hardware Type | Google Cloud TPU Pods - TPU v3 or TPU v4 |
| Hours used | More information needed |
| Cloud Provider | GCP |
| Compute Region | More information needed |
| Carbon Emitted | More information needed |
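Because the hours and compute region are not reported, no emissions figure can be derived from this card. For readers who have those numbers for their own runs, the sketch below shows the kind of arithmetic such an estimate usually rests on: energy consumed multiplied by the carbon intensity of the local grid. The function name, its parameters, and the optional PUE (data-center overhead) factor are illustrative assumptions, not the interface of the Machine Learning Impact calculator.

```python
def estimate_co2_kg(power_kw, hours, kg_co2_per_kwh, pue=1.0):
    """Rough CO2 estimate in kilograms (hypothetical helper).

    power_kw        average hardware power draw in kilowatts
    hours           total runtime in hours
    kg_co2_per_kwh  carbon intensity of the electricity grid
    pue             power usage effectiveness of the data center (assumed 1.0)
    """
    # Energy drawn from the grid, including data-center overhead.
    energy_kwh = power_kw * hours * pue
    # Convert energy to emissions using the grid's carbon intensity.
    return energy_kwh * kg_co2_per_kwh
```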

Citation

BibTeX:

@misc{https://doi.org/10.48550/arxiv.2210.11416,

doi = {10.48550/ARXIV.2210.11416},

url = {https://arxiv.org/abs/2210.11416},

author = {Chung, Hyung Won and Hou, Le and Longpre, Shayne and Zoph, Barret and Tay, Yi and Fedus, William and Li, Eric and Wang, Xuezhi and Dehghani, Mostafa and Brahma, Siddhartha and Webson, Albert and Gu, Shixiang Shane and Dai, Zhuyun and Suzgun, Mirac and Chen, Xinyun and Chowdhery, Aakanksha and Narang, Sharan and Mishra, Gaurav and Yu, Adams and Zhao, Vincent and Huang, Yanping and Dai, Andrew and Yu, Hongkun and Petrov, Slav and Chi, Ed H. and Dean, Jeff and Devlin, Jacob and Roberts, Adam and Zhou, Denny and Le, Quoc V. and Wei, Jason},

keywords = {Machine Learning (cs.LG), Computation and Language (cs.CL), FOS: Computer and information sciences, FOS: Computer and information sciences},

title = {Scaling Instruction-Finetuned Language Models},

publisher = {arXiv},

year = {2022},

copyright = {Creative Commons Attribution 4.0 International}

}

📄 License

This model is licensed under the Apache 2.0 license.
