🚀 VAGO solutions Llama-3.1-SauerkrautLM-70b-Instruct

Fine-tuned Model - Showcasing the potential of resource-efficient fine-tuning of large language models using Spectrum Fine-Tuning

Introducing Llama-3.1-SauerkrautLM-70b-Instruct – our Sauerkraut version of the powerful meta-llama/Meta-Llama-3.1-70B-Instruct! This model is fine-tuned on German-English data, leveraging unique datasets and a precision-engineered approach to enhance multilingual capabilities and achieve better performance across multiple languages.

🚀 Quick Start

This section provides a high-level overview of the Llama-3.1-SauerkrautLM-70b-Instruct model. For detailed information, please refer to the subsequent sections.
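As a starting point, the sketch below shows one way the model might be queried through the Hugging Face `transformers` text-generation pipeline. It is a minimal illustration, not an official recipe: the repository id `VAGO-solutions/Llama-3.1-SauerkrautLM-70b-Instruct`, the system prompt, and the helper names `build_messages` and `generate` are assumptions introduced here, and a 70B model in bfloat16 needs multiple high-memory GPUs.

```python
# Assumed Hub repository id; check the model page before use.
MODEL_ID = "VAGO-solutions/Llama-3.1-SauerkrautLM-70b-Instruct"


def build_messages(user_prompt, system_prompt="You are a helpful bilingual assistant."):
    """Build a chat-style message list (hypothetical helper for this example)."""
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_prompt},
    ]


def generate(user_prompt, max_new_tokens=256):
    """Load the model and return the assistant reply (hypothetical helper).

    The heavy imports happen here so that importing this file stays cheap.
    Requires a recent transformers release with Llama 3.1 support and
    enough GPU memory for a 70B-parameter model.
    """
    import torch
    from transformers import pipeline

    generator = pipeline(
        "text-generation",
        model=MODEL_ID,
        torch_dtype=torch.bfloat16,
        device_map="auto",  # spread the weights across available devices
    )
    outputs = generator(build_messages(user_prompt), max_new_tokens=max_new_tokens)
    # For chat input, the pipeline returns the full conversation;
    # the last message is the newly generated assistant turn.
    return outputs[0]["generated_text"][-1]["content"]


if __name__ == "__main__":
    print(generate("Erkläre in zwei Sätzen, was Sauerkraut ist."))
```

Because the model is fine-tuned on German-English data, prompts in either language should work with the same message format.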

✨ Features

- Efficient Fine-Tuning: Fine-tuning on German-English data with Spectrum Fine-Tuning, targeting 15% of the layers.

- Unique Dataset: Utilized the unique German-English Sauerkraut Mix v2 dataset for efficient cross-lingual transfer learning.

- Precision Approach: Implemented a bespoke, precision-engineered fine-tuning approach to enhance multilingual capabilities.

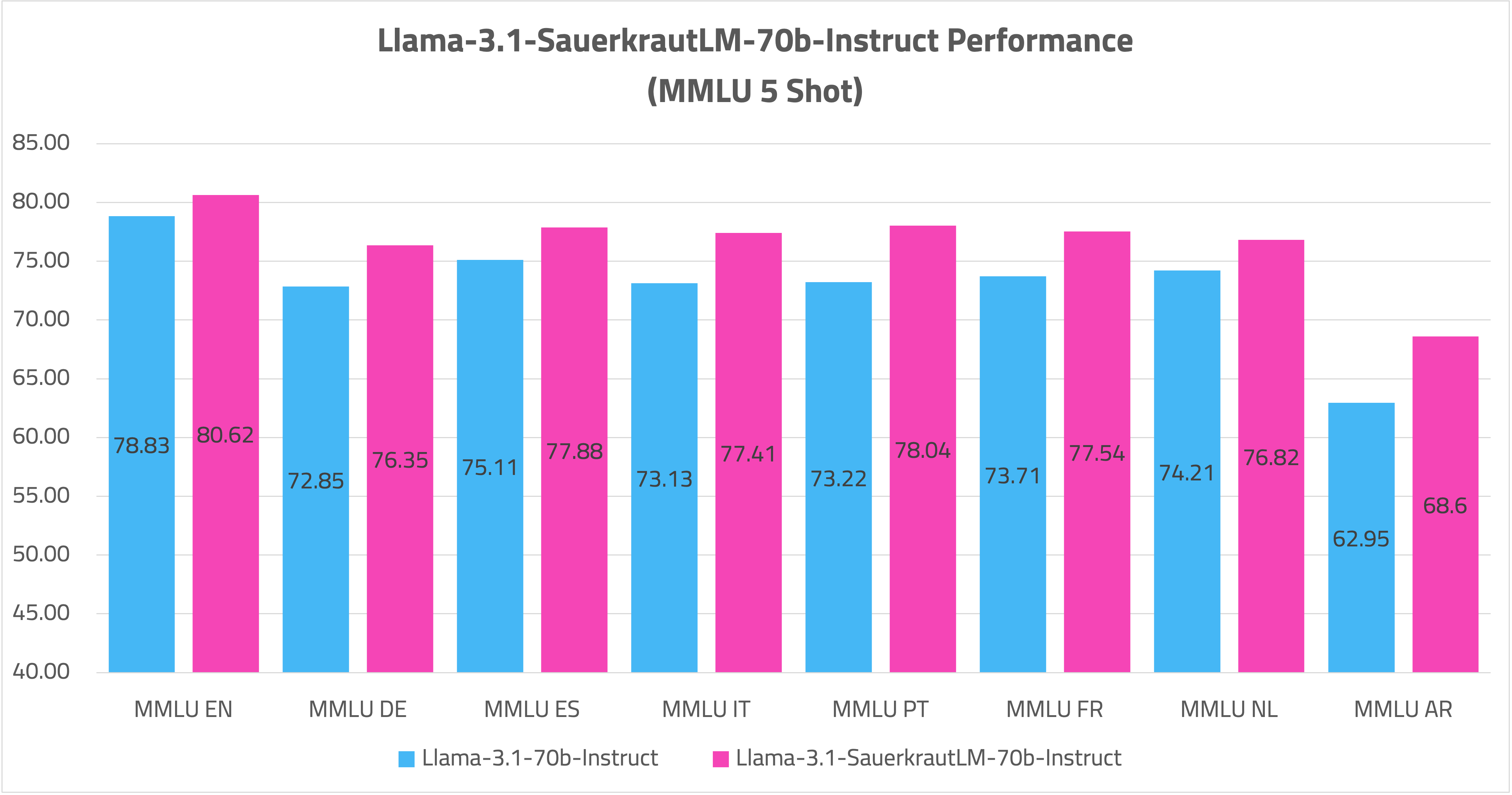

- Cross-Lingual Transfer: Achieved improved performance in multiple languages (including Arabic, Italian, French, Spanish, Dutch, Portuguese) through cross-lingual knowledge transfer.

📚 Documentation

🔍 Overview of all Llama-3.1-SauerkrautLM-70b-Instruct formats

| Model | HF | EXL2 | GGUF | AWQ |
|---|---|---|---|---|
| Llama-3.1-SauerkrautLM-70b-Instruct | Link | coming soon | coming soon | coming soon |

📋 Model Details

🔧 Training Procedure

This model showcases the potential of resource-efficient fine-tuning of large language models using Spectrum Fine-Tuning. Here's a detailed look at the procedure:

🎯 Fine-tuning on German-English Data

- Utilized Spectrum Fine-Tuning, targeting 15% of the model's layers.

- Introduced the model to a unique German-English Sauerkraut Mix v2.

- Implemented a bespoke, precision-engineered fine-tuning approach.
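To make the idea of "targeting 15% of the model's layers" concrete, the following sketch ranks layers by a per-layer score and marks only the top 15% as trainable, leaving the rest frozen. It is an illustration under stated assumptions, not the actual training code: the scores are made-up placeholders (Spectrum-style methods typically derive them from a signal-to-noise analysis of the weights), the 80-layer count is assumed for a 70B Llama-style model, and the helper names `select_top_layers` and `trainable_mask` are hypothetical.

```python
def select_top_layers(scores, fraction=0.15):
    """Return the sorted indices of the highest-scoring layers.

    scores:   one (hypothetical) informativeness score per layer
    fraction: share of layers to keep trainable, e.g. 0.15 for 15%
    """
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    k = max(1, round(len(scores) * fraction))
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return sorted(ranked[:k])


def trainable_mask(num_layers, selected):
    """True for layers that get gradient updates, False for frozen layers."""
    chosen = set(selected)
    return [i in chosen for i in range(num_layers)]


# Assumption: 80 decoder layers; the scores are synthetic placeholders.
num_layers = 80
scores = [((i * 37) % num_layers) / num_layers for i in range(num_layers)]

selected = select_top_layers(scores, fraction=0.15)
mask = trainable_mask(num_layers, selected)

print(f"{len(selected)} of {num_layers} layers trainable: {selected}")
```

In a real training run, a mask like this would decide which parameters have gradients enabled, so optimizer state and gradient memory are only needed for the selected layers.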

🌐 Cross-lingual Transfer Learning using Sauerkraut Mix v2

- Leveraged the Sauerkraut Mix v2 dataset as the foundation for cross-lingual transfer.

- This unique dataset, primarily focused on German and English, enabled the model to transfer knowledge to other languages.

- Improved capabilities in Arabic, Italian, French, Spanish, Dutch, and Portuguese without extensive training data in each language.

- Demonstrated the effectiveness of using a bilingual dataset for multilingual improvement.

📦 Sauerkraut Mix v2

- Premium Dataset for Language Models, focusing on German and English.

- Meticulously selected, high-quality dataset combinations.

- Cutting-edge synthetic datasets created using proprietary, high-precision generation techniques.

- Serves as the core resource for both fine-tuning and cross-lingual transfer.

🎯 Objective and Results

The primary goal of this training was twofold:

- To demonstrate that Spectrum Fine-Tuning, targeting just 15% of the layers, can significantly enhance a 70-billion-parameter model's capabilities while using only a fraction of the resources required by classic fine-tuning approaches.

- To showcase the effectiveness of cross-lingual transfer learning using the Sauerkraut Mix v2 dataset, enabling multilingual improvement without extensive language-specific training data.

The results have been remarkable:

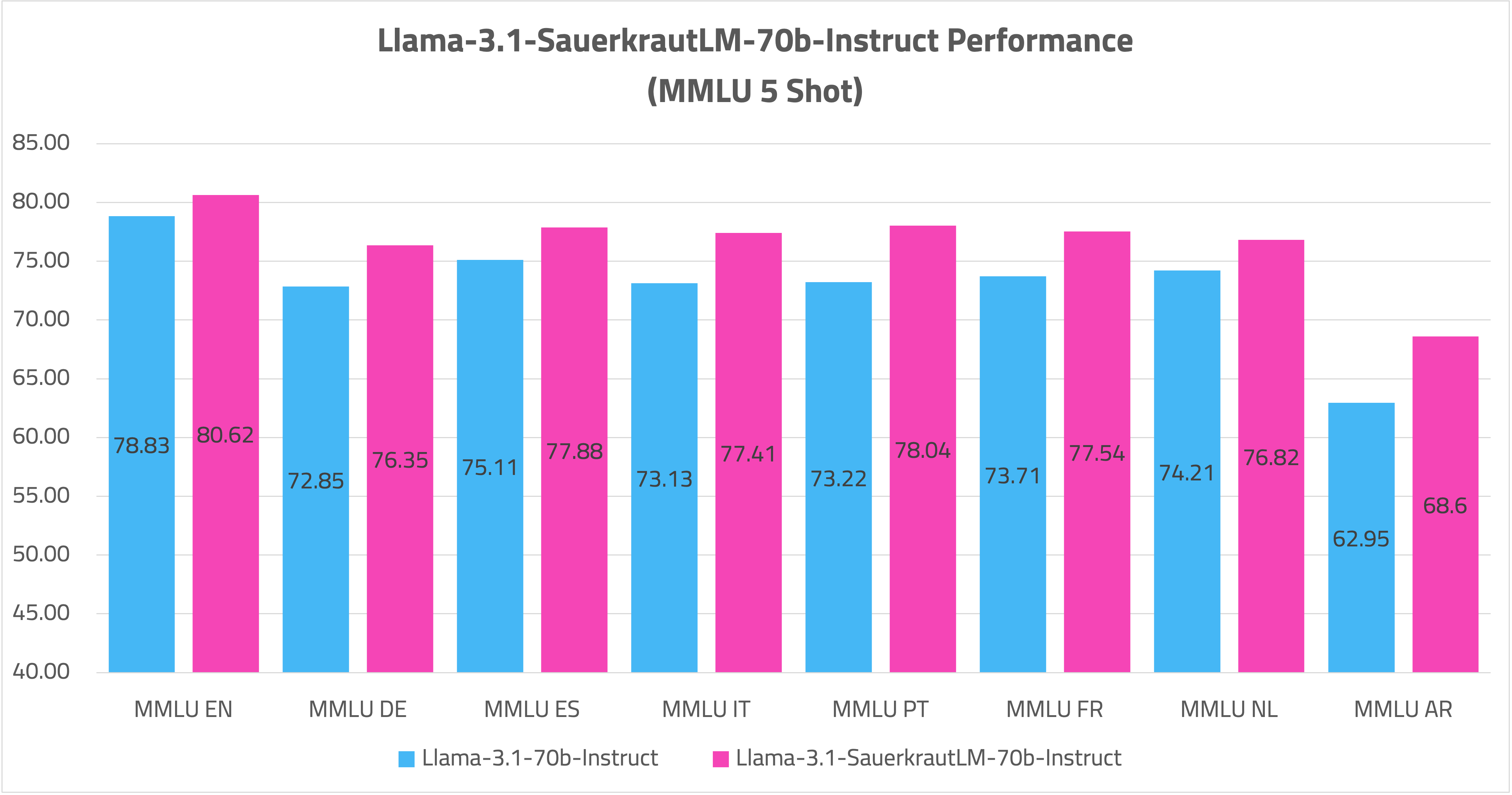

- The model has substantially improved its multilingual skills, as shown by its results on the MMLU-Multilingual benchmark.

Key Findings:

- Spectrum Fine-Tuning can efficiently enhance a large language model's capabilities in multiple languages while preserving the majority of its previously acquired knowledge.

- The Sauerkraut Mix v2 dataset proves to be an effective foundation for cross-lingual transfer, allowing for multilingual improvements from a bilingual base.

- This approach demonstrates a resource-efficient method for creating powerful multilingual models without the need for extensive training data in each target language.

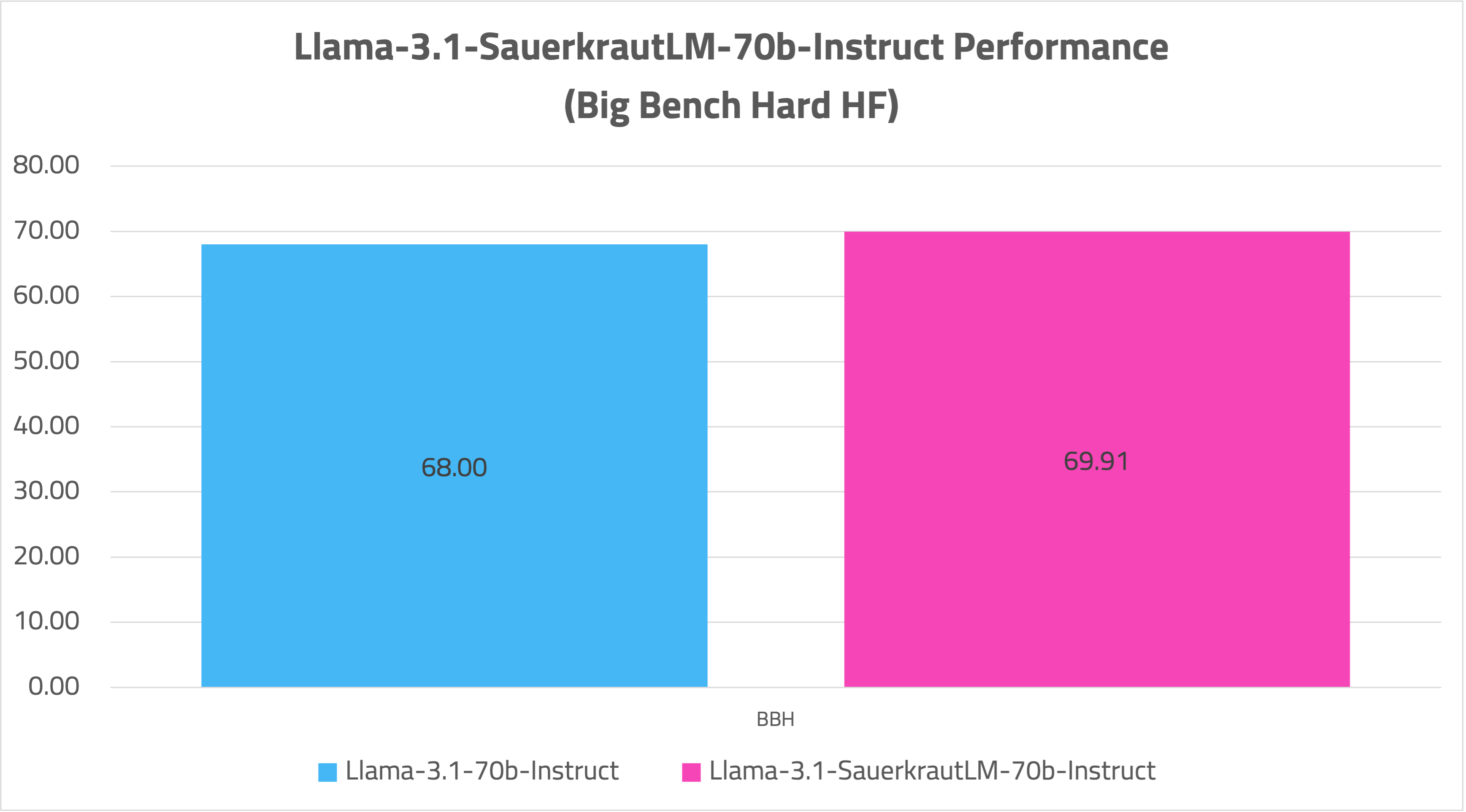

📊 Evaluation

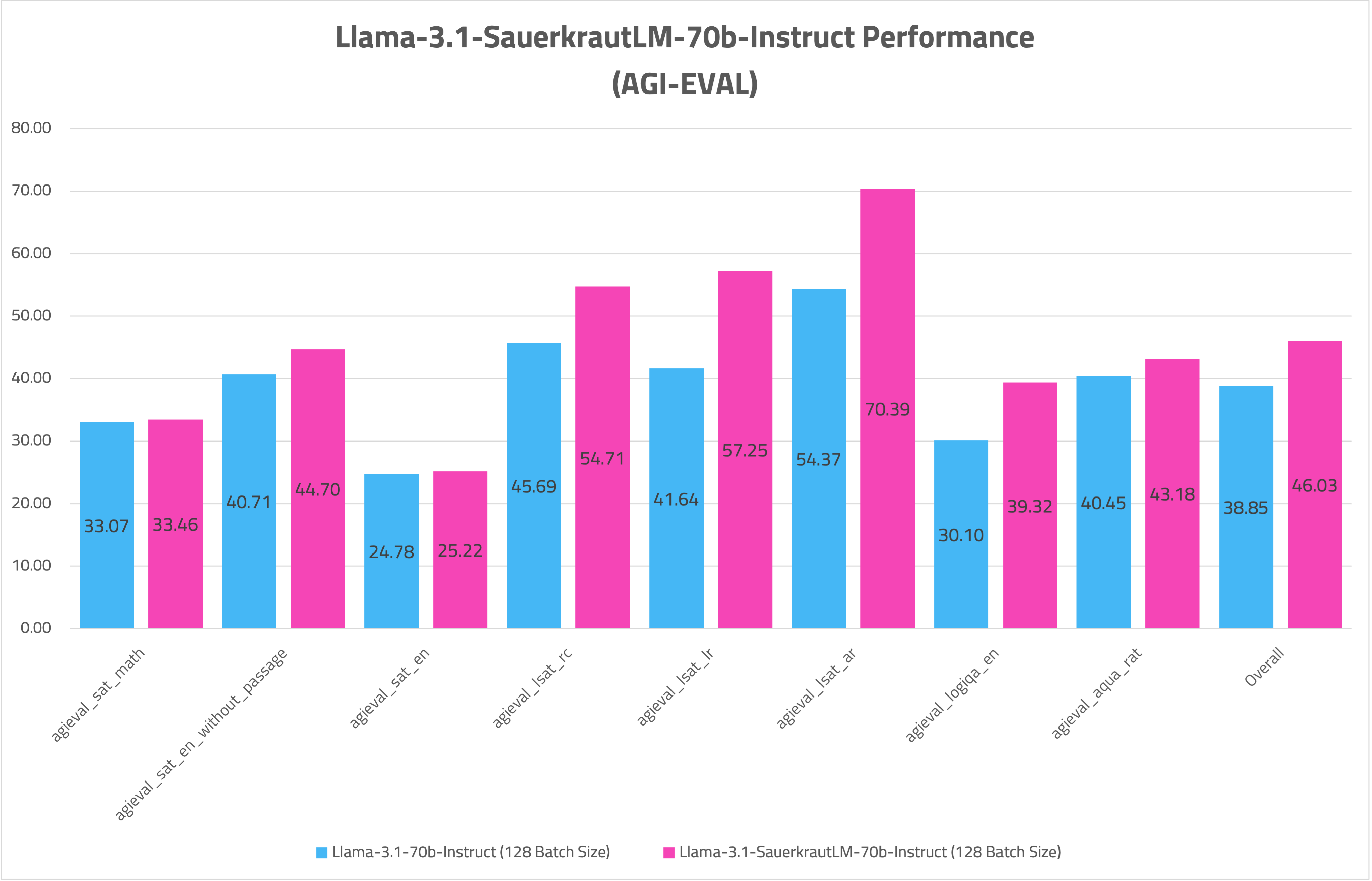

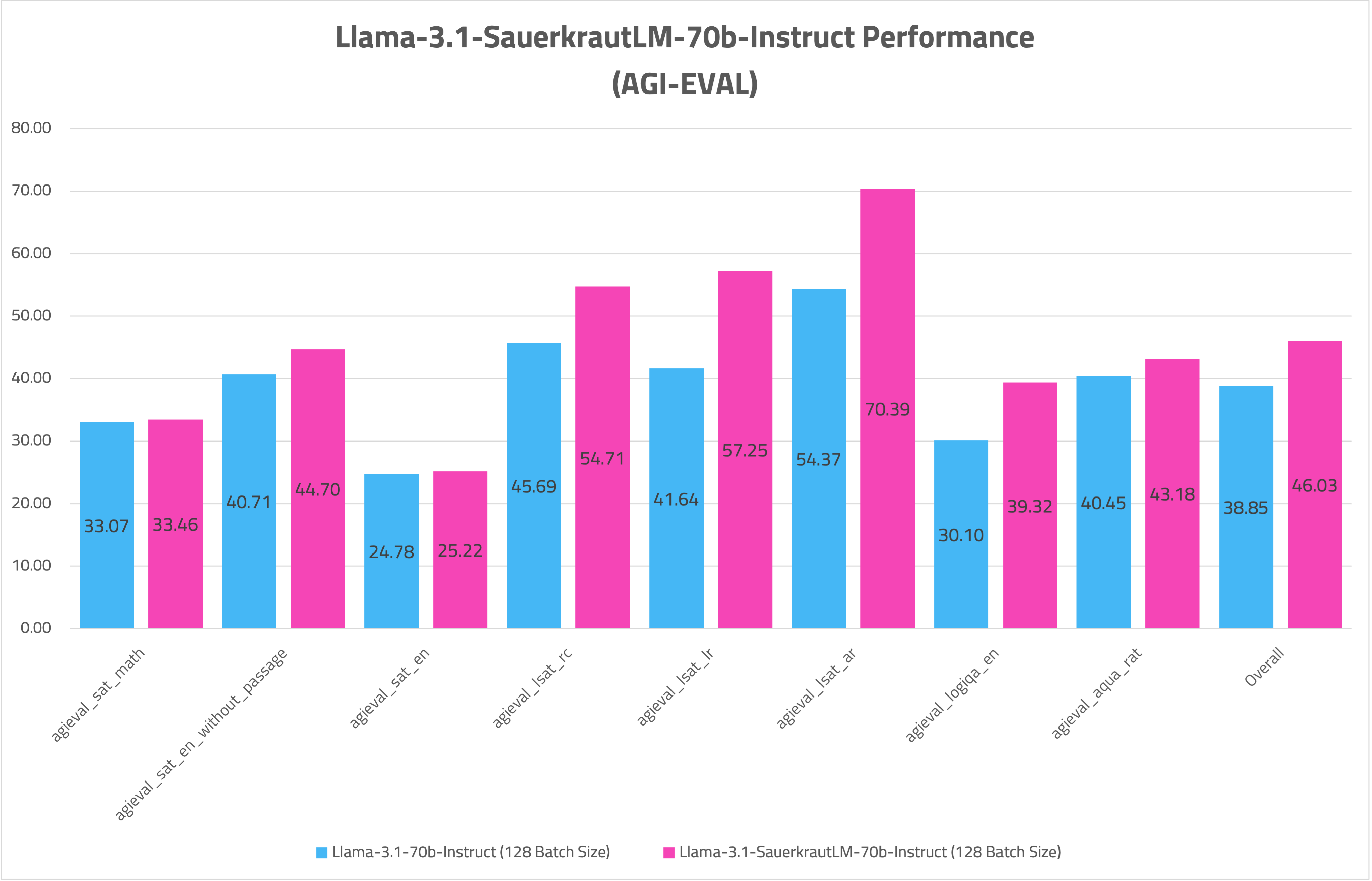

- AGIEVAL

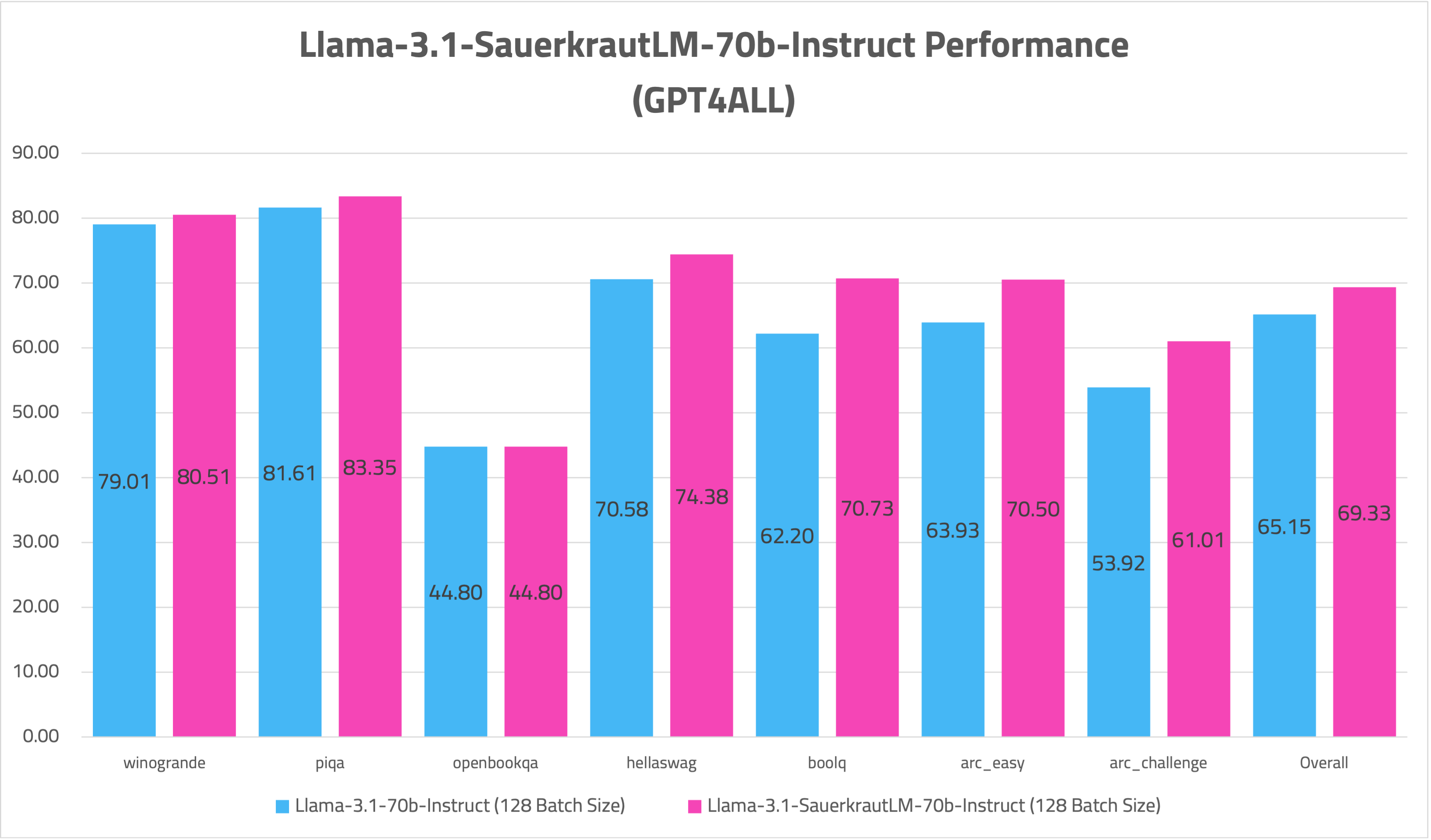

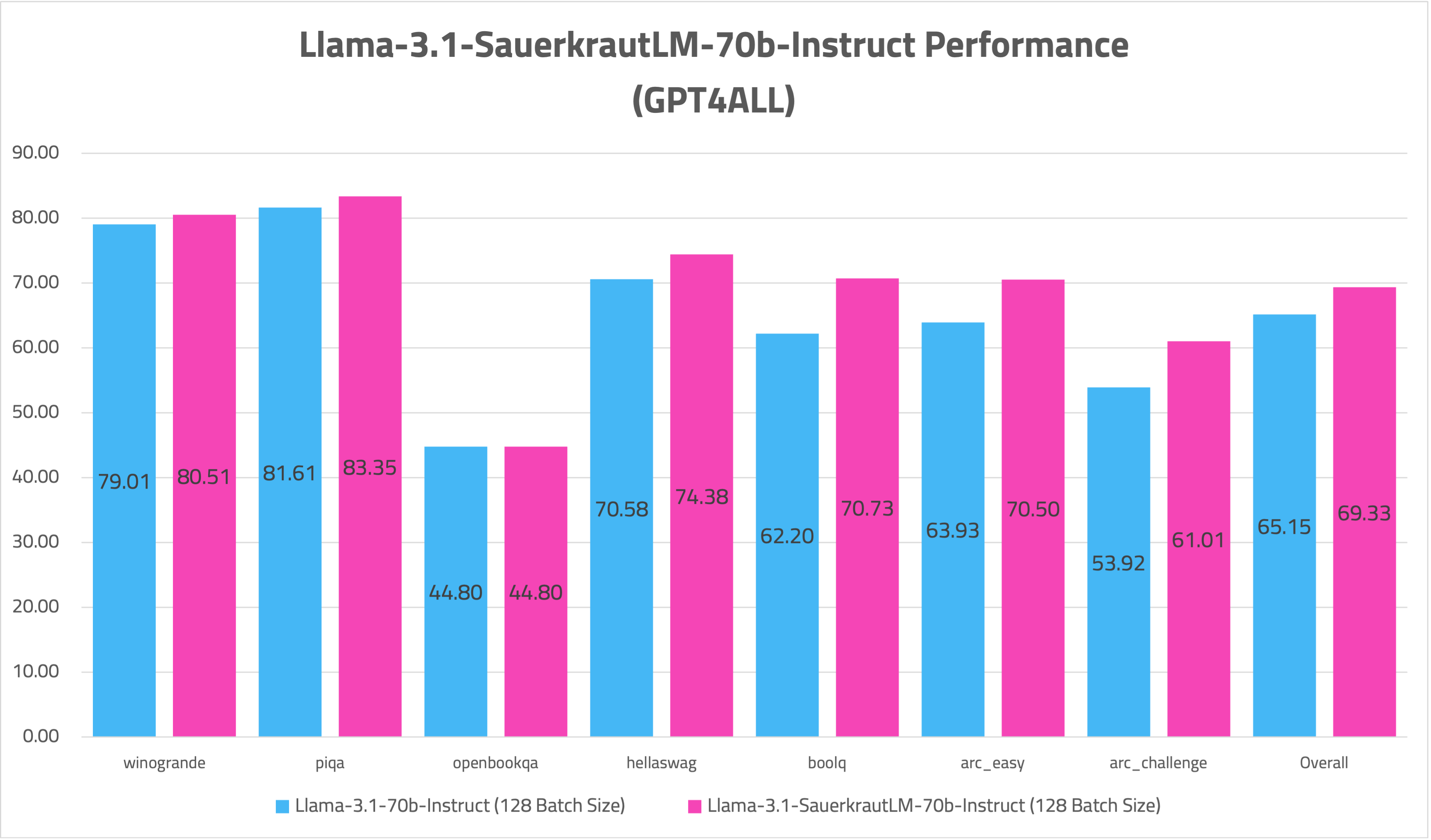

- GPT4ALL

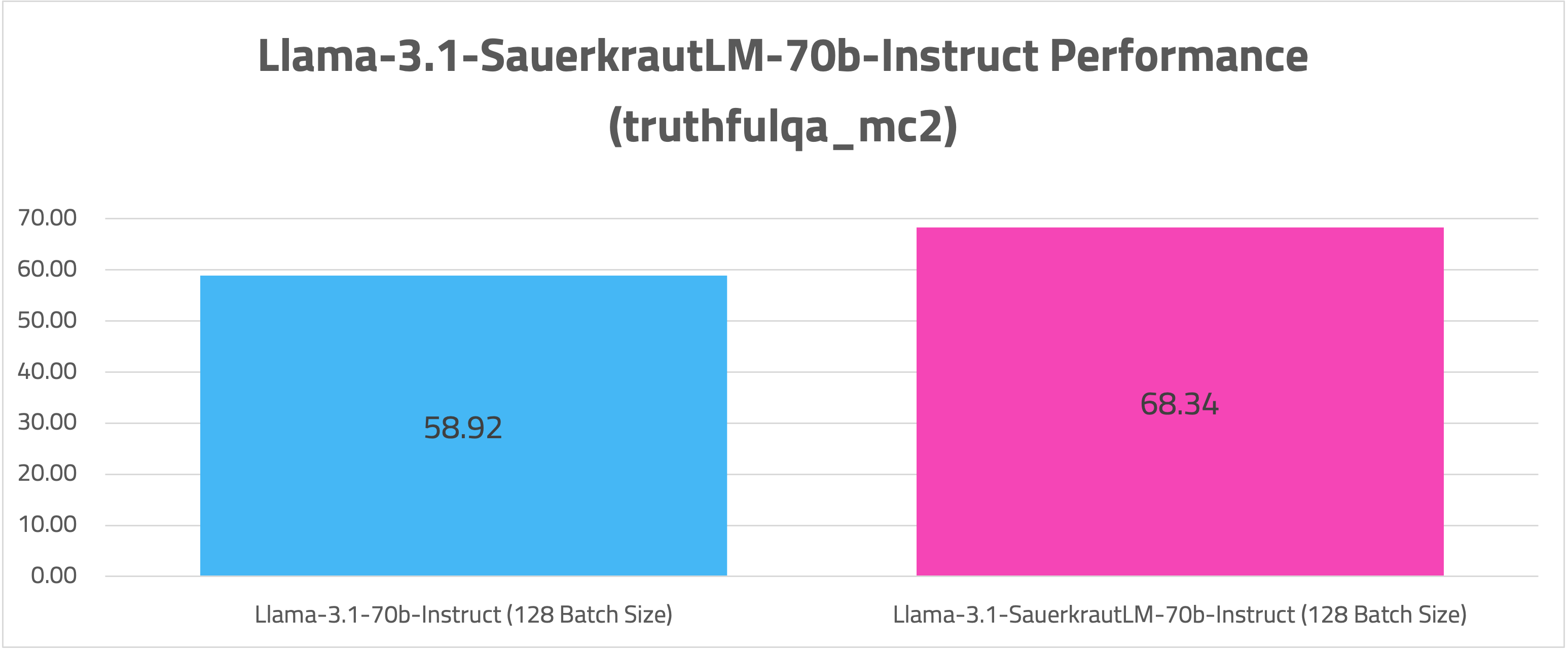

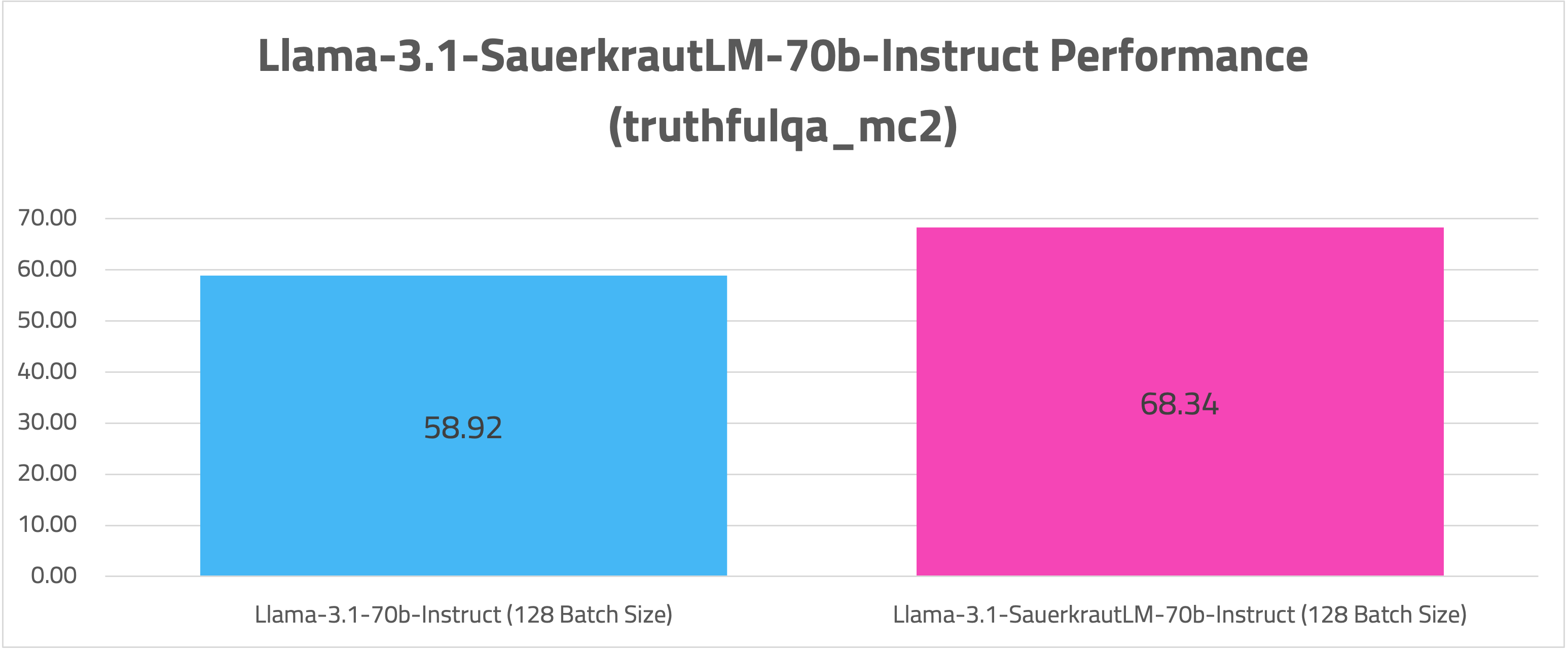

- TRUTHFULQA

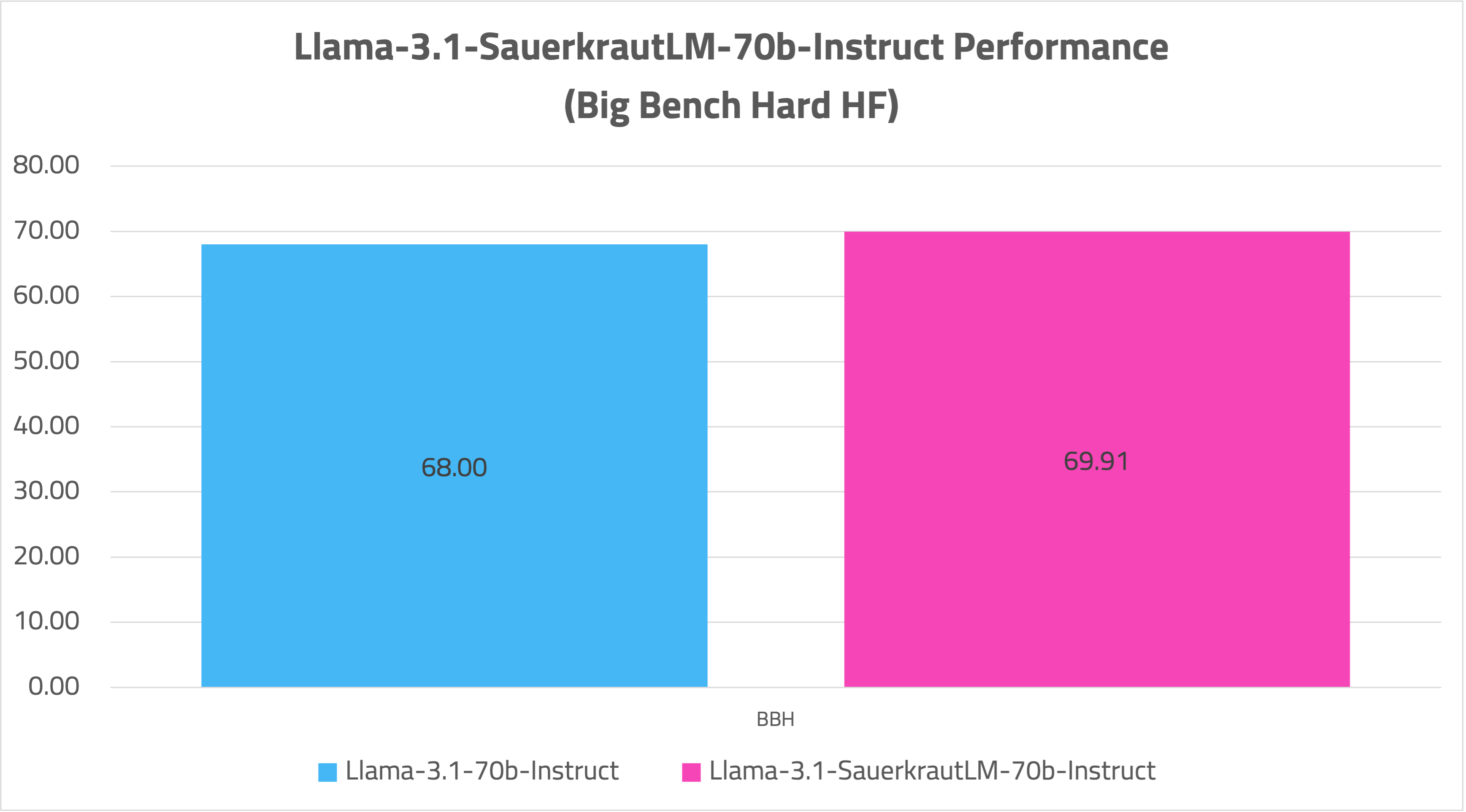

- BBH-HF

- MMLU-Multilingual

⚠️ Disclaimer

⚠️ Important Note

Despite our best efforts in data cleansing, the possibility of uncensored content slipping through cannot be entirely ruled out, and we cannot guarantee consistently appropriate behavior. Therefore, if you encounter any issues or come across inappropriate content, we kindly request that you inform us through the contact information provided. Additionally, it is essential to understand that the licensing of these models does not constitute legal advice. We are not responsible for the actions of third parties who use our models.

📞 Contact

💡 Usage Tip

If you are interested in customized LLMs for business applications, please get in contact with us via our website. We are also grateful for your feedback and suggestions.

🤝 Collaborations

We are also keenly seeking support and investment for our startup, VAGO solutions, where we continuously advance the development of robust language models designed to address a diverse range of purposes and requirements. If the prospect of collaboratively navigating future challenges excites you, we warmly invite you to reach out to us at VAGO solutions.

🙏 Acknowledgement

Many thanks to meta-llama for providing such a valuable model to the Open-Source community.