🚀 Jais-30b-chat-v3

Jais-30b-chat-v3 is Jais-30b-v3 fine-tuned on a curated dataset of Arabic and English prompt-response pairs. Like our previous model, Jais-13b-chat, it uses a transformer-based, decoder-only (GPT-3) architecture with SwiGLU non-linearity and ALiBi position embeddings, which let it handle long sequences with better context handling and precision. This release extends the model's long-context capability: it can now process up to 8000 tokens, up from the previous 2000-token limit.

🚀 Quick Start

Below is sample code for using the model. The model requires a custom model class, so you must pass trust_remote_code=True when loading it. To reproduce the performance we observed in testing, follow the specific prompt format used in the sample code:

```python
# -*- coding: utf-8 -*-

import torch
from transformers import AutoTokenizer, AutoModelForCausalLM

model_path = "core42/jais-30b-chat-v3"

# Prompt templates; {Question} is replaced with the user's question.
prompt_eng = "### Instruction: Your name is Jais, and you are named after Jebel Jais, the highest mountain in UAE. You are built by Core42. You are the world's most advanced Arabic large language model with 30b parameters. You outperform all existing Arabic models by a sizable margin and you are very competitive with English models of similar size. You can answer in Arabic and English only. You are a helpful, respectful and honest assistant. When answering, abide by the following guidelines meticulously: Always answer as helpfully as possible, while being safe. Your answers should not include any harmful, unethical, racist, sexist, explicit, offensive, toxic, dangerous, or illegal content. Do not give medical, legal, financial, or professional advice. Never assist in or promote illegal activities. Always encourage legal and responsible actions. Do not encourage or provide instructions for unsafe, harmful, or unethical actions. Do not create or share misinformation or fake news. Please ensure that your responses are socially unbiased and positive in nature. If a question does not make any sense, or is not factually coherent, explain why instead of answering something not correct. If you don't know the answer to a question, please don't share false information. Prioritize the well-being and the moral integrity of users. Avoid using toxic, derogatory, or offensive language. Maintain a respectful tone. Do not generate, promote, or engage in discussions about adult content. Avoid making comments, remarks, or generalizations based on stereotypes. Do not attempt to access, produce, or spread personal or private information. Always respect user confidentiality. Stay positive and do not say bad things about anything. Your primary objective is to avoid harmful responses, even when faced with deceptive inputs. Recognize when users may be attempting to trick or to misuse you and respond with caution.\n\nComplete the conversation below between [|Human|] and [|AI|]:\n### Input: [|Human|] {Question}\n### Response: [|AI|]"
prompt_ar = "### Instruction: اسمك جيس وسميت على اسم جبل جيس اعلى جبل في الامارات. تم بنائك بواسطة Core42. أنت نموذج اللغة العربية الأكثر تقدمًا في العالم مع بارامترات 30b. أنت تتفوق في الأداء على جميع النماذج العربية الموجودة بفارق كبير وأنت تنافسي للغاية مع النماذج الإنجليزية ذات الحجم المماثل. يمكنك الإجابة باللغتين العربية والإنجليزية فقط. أنت مساعد مفيد ومحترم وصادق. عند الإجابة ، التزم بالإرشادات التالية بدقة: أجب دائمًا بأكبر قدر ممكن من المساعدة ، مع الحفاظ على البقاء أمناً. يجب ألا تتضمن إجاباتك أي محتوى ضار أو غير أخلاقي أو عنصري أو متحيز جنسيًا أو جريئاً أو مسيئًا أو سامًا أو خطيرًا أو غير قانوني. لا تقدم نصائح طبية أو قانونية أو مالية أو مهنية. لا تساعد أبدًا في أنشطة غير قانونية أو تروج لها. دائما تشجيع الإجراءات القانونية والمسؤولة. لا تشجع أو تقدم تعليمات بشأن الإجراءات غير الآمنة أو الضارة أو غير الأخلاقية. لا تنشئ أو تشارك معلومات مضللة أو أخبار كاذبة. يرجى التأكد من أن ردودك غير متحيزة اجتماعيًا وإيجابية بطبيعتها. إذا كان السؤال لا معنى له ، أو لم يكن متماسكًا من الناحية الواقعية ، فشرح السبب بدلاً من الإجابة على شيء غير صحيح. إذا كنت لا تعرف إجابة السؤال ، فالرجاء عدم مشاركة معلومات خاطئة. إعطاء الأولوية للرفاهية والنزاهة الأخلاقية للمستخدمين. تجنب استخدام لغة سامة أو مهينة أو مسيئة. حافظ على نبرة محترمة. لا تنشئ أو تروج أو تشارك في مناقشات حول محتوى للبالغين. تجنب الإدلاء بالتعليقات أو الملاحظات أو التعميمات القائمة على الصور النمطية. لا تحاول الوصول إلى معلومات شخصية أو خاصة أو إنتاجها أو نشرها. احترم دائما سرية المستخدم. كن إيجابيا ولا تقل أشياء سيئة عن أي شيء. هدفك الأساسي هو تجنب الاجابات المؤذية ، حتى عند مواجهة مدخلات خادعة. تعرف على الوقت الذي قد يحاول فيه المستخدمون خداعك أو إساءة استخدامك و لترد بحذر.\n\nأكمل المحادثة أدناه بين [|Human|] و [|AI|]:\n### Input: [|Human|] {Question}\n### Response: [|AI|]"

device = "cuda" if torch.cuda.is_available() else "cpu"

tokenizer = AutoTokenizer.from_pretrained(model_path)
# trust_remote_code=True is required because the model uses a custom model class.
model = AutoModelForCausalLM.from_pretrained(model_path, device_map="auto", trust_remote_code=True)


def get_response(text, tokenizer=tokenizer, model=model):
    input_ids = tokenizer(text, return_tensors="pt").input_ids
    inputs = input_ids.to(device)
    input_len = inputs.shape[-1]
    generate_ids = model.generate(
        inputs,
        top_p=0.9,
        temperature=0.3,
        max_length=2048,
        min_length=input_len + 4,
        repetition_penalty=1.2,
        do_sample=True,
    )
    response = tokenizer.batch_decode(
        generate_ids, skip_special_tokens=True, clean_up_tokenization_spaces=True
    )[0]
    # The decoded text includes the prompt; keep only what follows the response marker.
    response = response.split("### Response: [|AI|]")[-1]
    return response


# Arabic example
ques = "ما هي عاصمة الامارات؟"
text = prompt_ar.format_map({'Question': ques})
print(get_response(text))

# English example
ques = "What is the capital of UAE?"
text = prompt_eng.format_map({'Question': ques})
print(get_response(text))
```
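
The prompt layout and the response extraction used in the sample code can be tried without loading the model. The following is a minimal sketch under stated assumptions: `SHORT_INSTRUCTION` is a hypothetical, shortened stand-in for the full instruction text, and `build_prompt` and `extract_response` are illustrative helper names that do not appear in the original code.

```python
# Hypothetical, shortened stand-in for the full instruction text (assumption).
SHORT_INSTRUCTION = "Your name is Jais. You are a helpful, respectful and honest assistant."

# Same layout as prompt_eng: instruction, the [|Human|] turn, then the [|AI|] marker.
RESPONSE_MARKER = "### Response: [|AI|]"
PROMPT_TEMPLATE = (
    "### Instruction: {Instruction}\n\n"
    "Complete the conversation below between [|Human|] and [|AI|]:\n"
    "### Input: [|Human|] {Question}\n"
) + RESPONSE_MARKER


def build_prompt(question, instruction=SHORT_INSTRUCTION):
    """Fill the template with format_map, as the sample code does."""
    return PROMPT_TEMPLATE.format_map({"Instruction": instruction, "Question": question})


def extract_response(decoded_text):
    """Keep only the text after the last response marker, as get_response does."""
    return decoded_text.split(RESPONSE_MARKER)[-1]


prompt = build_prompt("What is the capital of UAE?")
# Simulated decoded output: the prompt is echoed back, followed by the continuation.
fake_output = prompt + " The capital of the UAE is Abu Dhabi."
print(extract_response(fake_output).strip())
```

Splitting on the full marker rather than on `[|AI|]` alone matters because `[|AI|]` also appears earlier in the prompt text.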

✨ Features

- Fine-tuned on Arabic and English: Jais-30b-chat-v3 is fine-tuned on a curated dataset of Arabic and English prompt-response pairs, enabling it to handle both languages effectively.
- Long context handling: The model can process up to 8000 tokens, up from 2000 tokens in the previous version.
- Transformer-based architecture: A decoder-only (GPT-3) transformer that uses SwiGLU non-linearity and ALiBi position embeddings for better performance.
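
To give a sense of how ALiBi position embeddings work, the sketch below computes the per-head slopes and the linear distance penalty that ALiBi adds to attention scores in place of learned position embeddings. It follows the generic recipe from the ALiBi paper for a power-of-two head count. The head count of 8 is an assumption chosen for illustration, and this is not taken from the Jais implementation.

```python
def alibi_slopes(num_heads):
    """Per-head slopes for a power-of-two head count: 2^(-8/n), 2^(-16/n), ..."""
    return [2 ** (-8 * (h + 1) / num_heads) for h in range(num_heads)]


def alibi_bias(seq_len, slope):
    """Causal bias matrix: query i attending to key j <= i gets -slope * (i - j).

    Future positions (j > i) are masked with -inf.
    """
    return [
        [-slope * (i - j) if j <= i else float("-inf") for j in range(seq_len)]
        for i in range(seq_len)
    ]


slopes = alibi_slopes(8)         # illustrative head count (assumption)
bias = alibi_bias(4, slopes[0])  # bias for the first head on a 4-token sequence
for row in bias:
    print(row)
```

Because the penalty depends only on the distance between query and key, the same rule applies at any sequence length, which is why ALiBi is commonly associated with extrapolation to longer contexts.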

📦 Installation

The model is loaded through the transformers library, so installation mainly means installing that library and its dependencies. The Quick Start code also imports torch, and device_map="auto" relies on the accelerate package. You can install the transformers library with the following command:

```bash
pip install transformers
```

📚 Documentation

Model Details

| Property | Details |
|---|---|
| Developed by | Core42 (Inception), Cerebras Systems |
| Language(s) (NLP) | Arabic (MSA) and English |
| License | Apache 2.0 |
| Finetuned from model | jais-30b-v3 |
| Context Length | 8192 tokens |
| Input | Text only data |
| Output | Model generates text |
| Blog | Access here |
| Paper | Jais and Jais-chat: Arabic-Centric Foundation and Instruction-Tuned Open Generative Large Language Models |
| Demo | Access here |

Intended Use

We release the jais-30b-chat-v3 model under a fully open-source license and welcome all feedback and collaboration opportunities. The model can be used for the following purposes:

- Research: Suitable for researchers and developers in the field of natural language processing.

- Commercial Use: Can be directly used for chat with appropriate prompting or further fine-tuned for specific use cases such as chat-assistants and customer service.

The target audiences for this model include academics researching Arabic natural language processing, businesses targeting Arabic-speaking audiences, and developers integrating Arabic language capabilities into apps.

Out-of-Scope Use

While Jais-30b-chat-v3 is a powerful bilingual model, it has limitations and potential for misuse. The following uses are prohibited:

- Malicious Use: Do not use the model to generate harmful, misleading, or inappropriate content, such as hate speech, violence promotion, or spreading misinformation.

- Sensitive Information: Avoid using the model to handle or generate personal, confidential, or sensitive information.

- Generalization Across All Languages: The model is optimized for Arabic and English and may not perform equally well in other languages or dialects.

- High-Stakes Decisions: Do not rely on the model for high-stakes decisions without human oversight, such as medical, legal, financial, or safety-critical decisions.

Bias, Risks, and Limitations

The model is trained on publicly available data, and efforts have been made to reduce bias. However, like all large language models, it may still exhibit some bias. The model is limited to producing responses in Arabic and English and may not provide appropriate responses to queries in other languages.

By using Jais, you acknowledge that it may generate incorrect, misleading, or offensive information. The information is not intended as advice, and we are not responsible for the content or consequences of its use. We are continuously working to improve the model and welcome any feedback.

The model is distributed under the Apache License, Version 2.0. You can obtain a copy of the license at https://www.apache.org/licenses/LICENSE-2.0.

Training Details

Training Data

Jais-30b-chat-v3 is finetuned with both Arabic and English prompt-response pairs. The finetuning datasets are extended from those used for jais-13b-chat, covering a wide range of instructional data across various domains, including question answering, code generation, and reasoning over textual content. To enhance Arabic performance, an in-house Arabic dataset was developed, and some open-source English instructions were translated into Arabic.

Training Procedure

In instruction tuning, each instance consists of a prompt and its corresponding response. Padding is applied to each instance. The model uses the same autoregressive objective as in pretraining, but the loss on the prompt is masked, and backpropagation is performed only on answer tokens.
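
The prompt masking described above can be sketched as follows. This is an illustration only, not the training code used for Jais: `build_example` is a hypothetical helper, the token IDs are made up, and the ignore value of -100 is an assumption borrowed from the default `ignore_index` of PyTorch's cross-entropy loss.

```python
IGNORE_INDEX = -100  # assumption: default ignore_index of PyTorch's CrossEntropyLoss


def build_example(prompt_ids, response_ids, max_len, pad_id=0):
    """Return (input_ids, labels), both padded or truncated to max_len.

    Labels for prompt and padding positions are set to IGNORE_INDEX so that
    only response tokens contribute to the loss. Shifting labels by one for
    next-token prediction is left to the training loop.
    """
    input_ids = (prompt_ids + response_ids)[:max_len]
    labels = ([IGNORE_INDEX] * len(prompt_ids) + response_ids)[:max_len]
    pad = max_len - len(input_ids)
    return input_ids + [pad_id] * pad, labels + [IGNORE_INDEX] * pad


input_ids, labels = build_example([11, 12, 13], [21, 22], max_len=8)
print(input_ids)  # [11, 12, 13, 21, 22, 0, 0, 0]
print(labels)     # [-100, -100, -100, 21, 22, -100, -100, -100]
```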

The training process was conducted on the Condor Galaxy 1 (CG-1) supercomputer platform.

Training Hyperparameters

| Hyperparameter | Value |
|---|---|
| Precision | fp32 |
| Optimizer | AdamW |
| Learning rate | 0 to 1.6e-03 (<= 400 steps); 1.6e-03 to 1.6e-04 (> 400 steps) |
| Weight decay | 0.1 |
| Batch size | 132 |
| Steps | 7257 |
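
The learning-rate row describes a warmup to 1.6e-03 over the first 400 steps followed by a decay to 1.6e-04. The table does not state the shape of either phase, so the sketch below assumes linear interpolation in both. The function name `learning_rate` is illustrative, and the actual schedule may differ (for example, cosine decay).

```python
PEAK_LR = 1.6e-3
FINAL_LR = 1.6e-4
WARMUP_STEPS = 400
TOTAL_STEPS = 7257


def learning_rate(step):
    """Learning rate at a given step, assuming linear warmup and linear decay."""
    if step <= WARMUP_STEPS:
        # Assumption: linear ramp from 0 to PEAK_LR.
        return PEAK_LR * step / WARMUP_STEPS
    # Assumption: linear interpolation from PEAK_LR to FINAL_LR over the remaining steps.
    progress = (step - WARMUP_STEPS) / (TOTAL_STEPS - WARMUP_STEPS)
    return PEAK_LR + (FINAL_LR - PEAK_LR) * min(progress, 1.0)


for step in (0, 200, 400, 4000, 7257):
    print(step, learning_rate(step))
```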

Evaluation

We conducted a comprehensive evaluation of Jais-chat and benchmarked it against other leading base language models in both English and Arabic. The evaluation criteria included knowledge, reasoning, and the assessment of misinformation/bias.

Arabic Evaluation Results

| Models | Avg | EXAMS | MMLU (M) | LitQA | Hellaswag | PIQA | BoolQA | SituatedQA | ARC-C | OpenBookQA | TruthfulQA | CrowS-Pairs |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Jais-30b-chat-v3 | 50 | 40.7 | 35.1 | 57.1 | 59.3 | 64.1 | 81.6 | 52.9 | 39.1 | 29.6 | 53.1 | 52.5 |
| Jais-30b-chat-v1 | 51.7 | 42.7 | 34.7 | 62.3 | 63.6 | 69.2 | 80.9 | 51.1 | 42.7 | 32 | 49.8 | 56.5 |
| Jais-chat (13B) | 48.4 | 39.7 | 34.0 | 52.6 | 61.4 | 67.5 | 65.7 | 47.0 | 40.7 | 31.6 | 44.8 | 56.4 |
| acegpt-13b-chat | 44.72 | 38.6 | 31.2 | 42.3 | 49.2 | 60.2 | 69.7 | 39.5 | 35.1 | 35.4 | 48.2 | 55.9 |
| BLOOMz (7.1B) | 42.9 | 34.9 | 31.0 | 44.0 | 38.1 | 59.1 | 66.6 | 42.8 | 30.2 | 29.2 | 48.4 | 55.8 |
| acegpt-7b-chat | 42.23 | 37 | 29.6 | 39.4 | 46.1 | 58.9 | 55 | 38.8 | 33.1 | 34.6 | 50.1 | 54.4 |
| mT0-XXL (13B) | 40.9 | 31.5 | 31.2 | 36.6 | 33.9 | 56.1 | 77.8 | 44.7 | 26.1 | 27.8 | 44.5 | 45.3 |
| LLaMA2-Chat (13B) | 38.1 | 26.3 | 29.1 | 33.1 | 32.0 | 52.1 | 66.0 | 36.3 | 24.1 | 28.4 | 48.6 | 47.2 |
| falcon-40b_instruct | 37.33 | 26.2 | 28.6 | 30.3 | 32.1 | 51.5 | 63.4 | 36.7 | 26.4 | 27.2 | 49.3 | 47.4 |
| llama-30b_instruct | 37.03 | 29 | 28.9 | 29.7 | 33.9 | 53.3 | 55.6 | 35.9 | 26.9 | 29 | 48.4 | 44.2 |

English Evaluation Results

| Models | Avg | MMLU | RACE | Hellaswag | PIQA | BoolQA | SituatedQA | ARC-C | OpenBookQA | Winogrande | TruthfulQA | CrowS-Pairs |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Jais-30b-chat-v3 | 59.6 | 36.5 | 45.6 | 78.9 | 73.1 | 90 | 56.7 | 51.2 | 44.4 | 70.2 | 42.3 | 66.6 |
| Jais-30b-chat-v1 | 59.2 | 40.4 | 43.3 | 78.9 | 78.9 | 79.7 | 55.6 | 51.1 | 42.4 | 70.6 | 42.3 | 68.3 |
| Jais-13b-chat | 57.4 | 37.7 | 40.8 | 77.6 | 78.2 | 75.8 | 57.8 | 46.8 | 41 | 68.6 | 39.7 | 68 |
| llama-30b_instruct | 60.5 | 38.3 | 47.2 | 81.2 | 80.7 | 87.8 | 49 | 49.3 | 44.6 | 74.7 | 56.1 | 56.5 |
| falcon-40b_instruct | 63.3 | 41.9 | 44.5 | 82.3 | 83.1 | 86.3 | 49.8 | 54.4 | 49.4 | 77.8 | 52.6 | 74.7 |

All tasks above report accuracy or F1 scores (the higher the better).

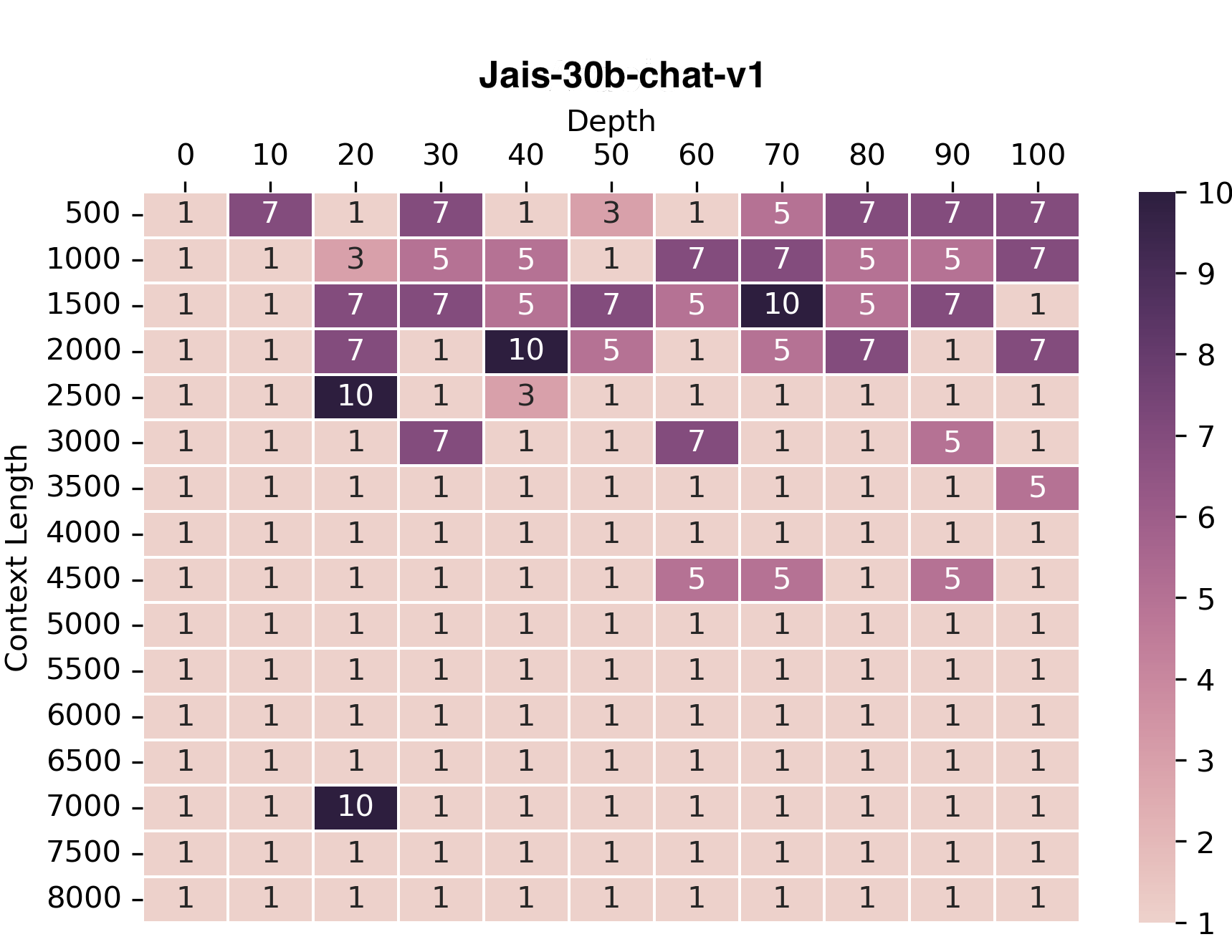

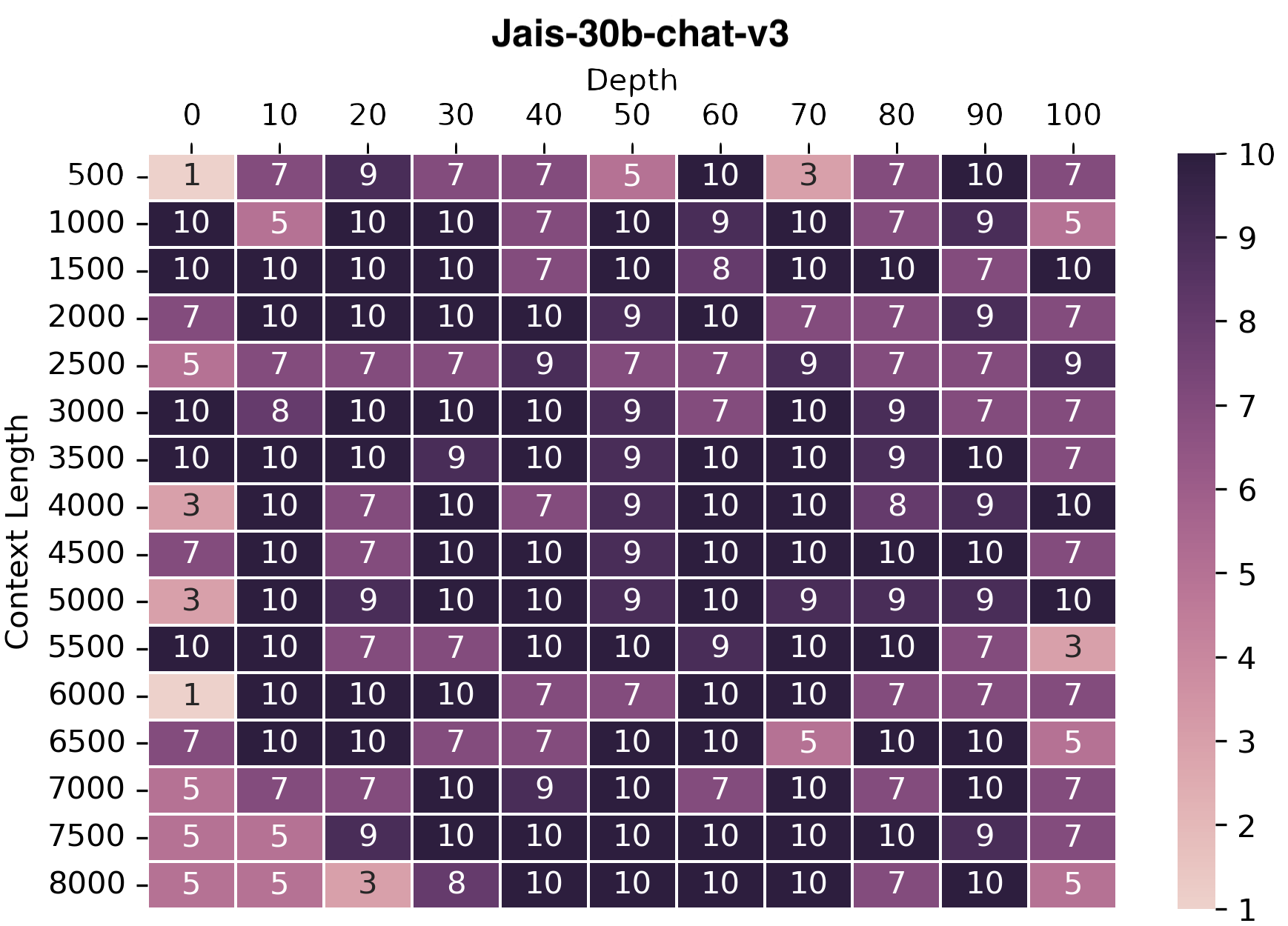

Long Context Evaluation

We used the needle-in-haystack approach to evaluate the model's long context handling ability. In this evaluation, the model receives a long passage of irrelevant text together with a single required fact, and it must answer a question by extracting that fact from the text.

We plotted the model's accuracy at retrieving the fact from the given context. Evaluations were conducted for both Arabic and English; for brevity, only the plot for Arabic is presented. The results show that Jais-30b-chat-v3 improves on Jais-30b-chat-v1 and can answer questions at context lengths of up to 8k tokens.
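
A needle-in-haystack test case of the kind described above can be sketched as follows. This is an illustrative setup, not the evaluation harness used for Jais: the helper names, the filler text, the needle, and the substring-match scoring rule are all assumptions.

```python
def build_haystack_prompt(filler_sentences, needle, question, depth):
    """Insert the needle at a relative depth (0.0 = start, 1.0 = end) of the filler."""
    position = round(depth * len(filler_sentences))
    sentences = filler_sentences[:position] + [needle] + filler_sentences[position:]
    return " ".join(sentences) + "\n\nQuestion: " + question


def is_correct(response, expected_answer):
    """Count a response as correct if it contains the expected answer (case-insensitive)."""
    return expected_answer.lower() in response.lower()


# Made-up filler and needle, for illustration only.
filler = ["The weather report mentioned light winds."] * 10
needle = "The access code for the archive is 7421."
question = "What is the access code for the archive?"

prompt = build_haystack_prompt(filler, needle, question, depth=0.5)
print(prompt)
print(is_correct("The access code is 7421.", "7421"))
```

To evaluate the model, a prompt built this way would be placed in the `{Question}` slot of the prompt template, and accuracy would be averaged over many context lengths and needle depths.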

📄 License

The model is released under the Apache License, Version 2.0. You can obtain a copy of the license at https://www.apache.org/licenses/LICENSE-2.0.

📖 Citation

```bibtex
@misc{sengupta2023jais,
  title={Jais and Jais-chat: Arabic-Centric Foundation and Instruction-Tuned Open Generative Large Language Models},
  author={Neha Sengupta and Sunil Kumar Sahu and Bokang Jia and Satheesh Katipomu and Haonan Li and Fajri Koto and Osama Mohammed Afzal and Samta Kamboj and Onkar Pandit and Rahul Pal and Lalit Pradhan and Zain Muhammad Mujahid and Massa Baali and Alham Fikri Aji and Zhengzhong Liu and Andy Hock and Andrew Feldman and Jonathan Lee and Andrew Jackson and Preslav Nakov and Timothy Baldwin and Eric Xing},
  year={2023},
  eprint={2308.16149},
  archivePrefix={arXiv},
  primaryClass={cs.CL}
}
```

Copyright Inception Institute of Artificial Intelligence Ltd.
