🚀 OneFormer

This is the OneFormer checkpoint trained on the COCO dataset (large-sized version, DiNAT backbone). A single model covers semantic, instance, and panoptic segmentation.

🚀 Quick Start

OneFormer handles semantic, instance, and panoptic segmentation with one set of weights. The task is chosen at inference time through the `task_inputs` argument of the processor.

✨ Features

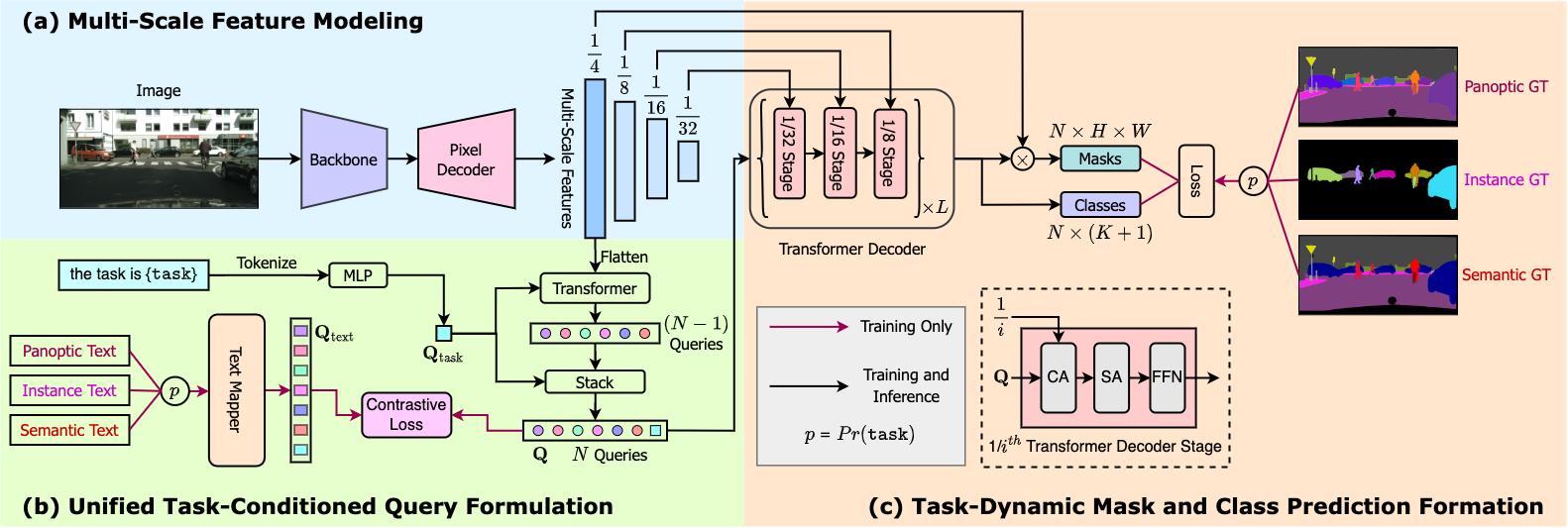

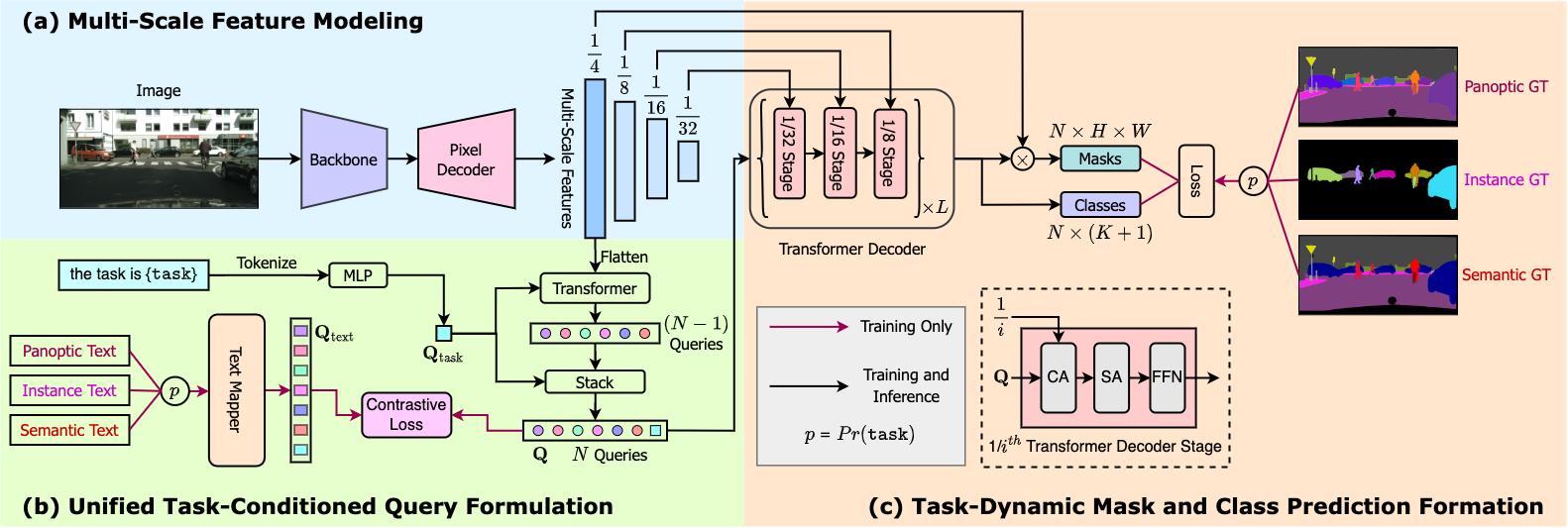

- Multi-task Universal Segmentation: OneFormer is the first multi-task universal image segmentation framework. It can outperform existing specialized models across semantic, instance, and panoptic segmentation tasks with a single model architecture trained on a single dataset.

- Task-Guided and Dynamic: It uses a task token to condition the model on the task in focus, making the architecture task-guided for training and task-dynamic for inference.

📚 Documentation

Model description

OneFormer is the first multi-task universal image segmentation framework. It needs to be trained only once, with a single universal architecture, a single model, and a single dataset, to outperform existing specialized models across semantic, instance, and panoptic segmentation tasks. OneFormer uses a task token to condition the model on the task in focus, making the architecture task-guided for training and task-dynamic for inference, all with a single model.
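To make the task-token idea concrete, the sketch below builds the text prompt that conditions the model on a task. It assumes the `the task is {task}` template described in the OneFormer paper. The names `build_task_prompt` and `SUPPORTED_TASKS` are hypothetical, introduced only for illustration, and are not part of any library API.

```python
# Hypothetical helper: illustrates task conditioning, not a library function.
SUPPORTED_TASKS = ("semantic", "instance", "panoptic")


def build_task_prompt(task: str) -> str:
    """Return the text prompt that selects a segmentation task.

    Assumes the "the task is {task}" template from the OneFormer paper.
    """
    if task not in SUPPORTED_TASKS:
        raise ValueError(f"unknown task {task!r}; expected one of {SUPPORTED_TASKS}")
    return f"the task is {task}"


# The same weights serve all three tasks; only the prompt changes.
prompts = [build_task_prompt(task) for task in SUPPORTED_TASKS]
```

In this sketch, switching tasks means changing one string rather than loading a different model, which is what "task-dynamic for inference" refers to.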

Intended uses & limitations

You can use this particular checkpoint for semantic, instance, and panoptic segmentation. See the model hub for versions fine-tuned on other datasets.

💻 Usage Examples

Basic Usage

from transformers import OneFormerProcessor, OneFormerForUniversalSegmentation

from PIL import Image

import requests

url = "https://huggingface.co/datasets/shi-labs/oneformer_demo/resolve/main/coco.jpeg"

image = Image.open(requests.get(url, stream=True).raw)

processor = OneFormerProcessor.from_pretrained("shi-labs/oneformer_coco_dinat_large")

model = OneFormerForUniversalSegmentation.from_pretrained("shi-labs/oneformer_coco_dinat_large")

semantic_inputs = processor(images=image, task_inputs=["semantic"], return_tensors="pt")

semantic_outputs = model(**semantic_inputs)

predicted_semantic_map = processor.post_process_semantic_segmentation(semantic_outputs, target_sizes=[image.size[::-1]])[0]

instance_inputs = processor(images=image, task_inputs=["instance"], return_tensors="pt")

instance_outputs = model(**instance_inputs)

predicted_instance_map = processor.post_process_instance_segmentation(instance_outputs, target_sizes=[image.size[::-1]])[0]["segmentation"]

panoptic_inputs = processor(images=image, task_inputs=["panoptic"], return_tensors="pt")

panoptic_outputs = model(**panoptic_inputs)

predicted_panoptic_map = processor.post_process_panoptic_segmentation(panoptic_outputs, target_sizes=[image.size[::-1]])[0]["segmentation"]
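Each post-processed map above is a 2D grid of integer ids with one entry per pixel. As a minimal sketch of how such a map might be inspected, the snippet below counts pixels per id. It uses a small hand-written nested list as a stand-in for a real prediction (an assumption for illustration; a real tensor could be converted with `.tolist()`), and `pixel_counts` is a hypothetical helper name.

```python
from collections import Counter


def pixel_counts(label_map):
    """Count how many pixels carry each id in a 2D label map (list of rows)."""
    return Counter(label for row in label_map for label in row)


# Toy 3x4 map standing in for predicted_semantic_map.tolist().
toy_map = [
    [0, 0, 1, 1],
    [0, 2, 2, 1],
    [0, 2, 2, 1],
]
counts = pixel_counts(toy_map)
for label, area in sorted(counts.items()):
    print(f"id {label}: {area} pixels")
```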

For more examples, please refer to the documentation.
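The three calls in the basic usage differ only in the task string and the post-processing method, so they can be folded into one helper. The following is a sketch under that assumption: `segment` and `POST_PROCESS_METHODS` are hypothetical names, while the `post_process_*` method names are the ones used in the basic usage. It expects `processor`, `model`, and `image` to be created as shown there.

```python
# Maps each task to the processor method used in the basic usage example.
POST_PROCESS_METHODS = {
    "semantic": "post_process_semantic_segmentation",
    "instance": "post_process_instance_segmentation",
    "panoptic": "post_process_panoptic_segmentation",
}


def segment(processor, model, image, task):
    """Run one segmentation task and return its post-processed map."""
    if task not in POST_PROCESS_METHODS:
        raise ValueError(f"unsupported task: {task!r}")
    inputs = processor(images=image, task_inputs=[task], return_tensors="pt")
    outputs = model(**inputs)
    post_process = getattr(processor, POST_PROCESS_METHODS[task])
    # PIL reports (width, height); target_sizes takes (height, width).
    result = post_process(outputs, target_sizes=[image.size[::-1]])[0]
    # Instance and panoptic results are dicts; the semantic result is the map itself.
    return result["segmentation"] if isinstance(result, dict) else result
```

With this helper, all three maps could be collected in one pass, for example `maps = {task: segment(processor, model, image, task) for task in POST_PROCESS_METHODS}`.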

Citation

@article{jain2022oneformer,
  title={{OneFormer: One Transformer to Rule Universal Image Segmentation}},
  author={Jitesh Jain and Jiachen Li and MangTik Chiu and Ali Hassani and Nikita Orlov and Humphrey Shi},
  journal={arXiv},
  year={2022}
}

📄 License

This project is licensed under the MIT license.

| Property | Details |
|---|---|
| Model Type | OneFormer model trained on the COCO dataset (large-sized version, DiNAT backbone) |
| Training Data | ydshieh/coco_dataset_script |