🚀 Conditional DETR model with ResNet-101 backbone (dilated C5 stage)

This Conditional DETR model uses a ResNet-101 backbone with a dilated C5 stage (DC5) and is trained end-to-end on the COCO 2017 object detection dataset. Its conditional cross-attention mechanism lets it converge considerably faster in training than the original DETR.

🚀 Quick Start

The Conditional DEtection TRansformer (DETR) model is trained end-to-end on COCO 2017 object detection (118k annotated images). The model weights were converted from the original weights to the transformers implementation and are published in both PyTorch and Safetensors formats. The original weights can be downloaded from the original repository.

✨ Features

- End-to-end Training: Trained end-to-end on the COCO 2017 object detection dataset.

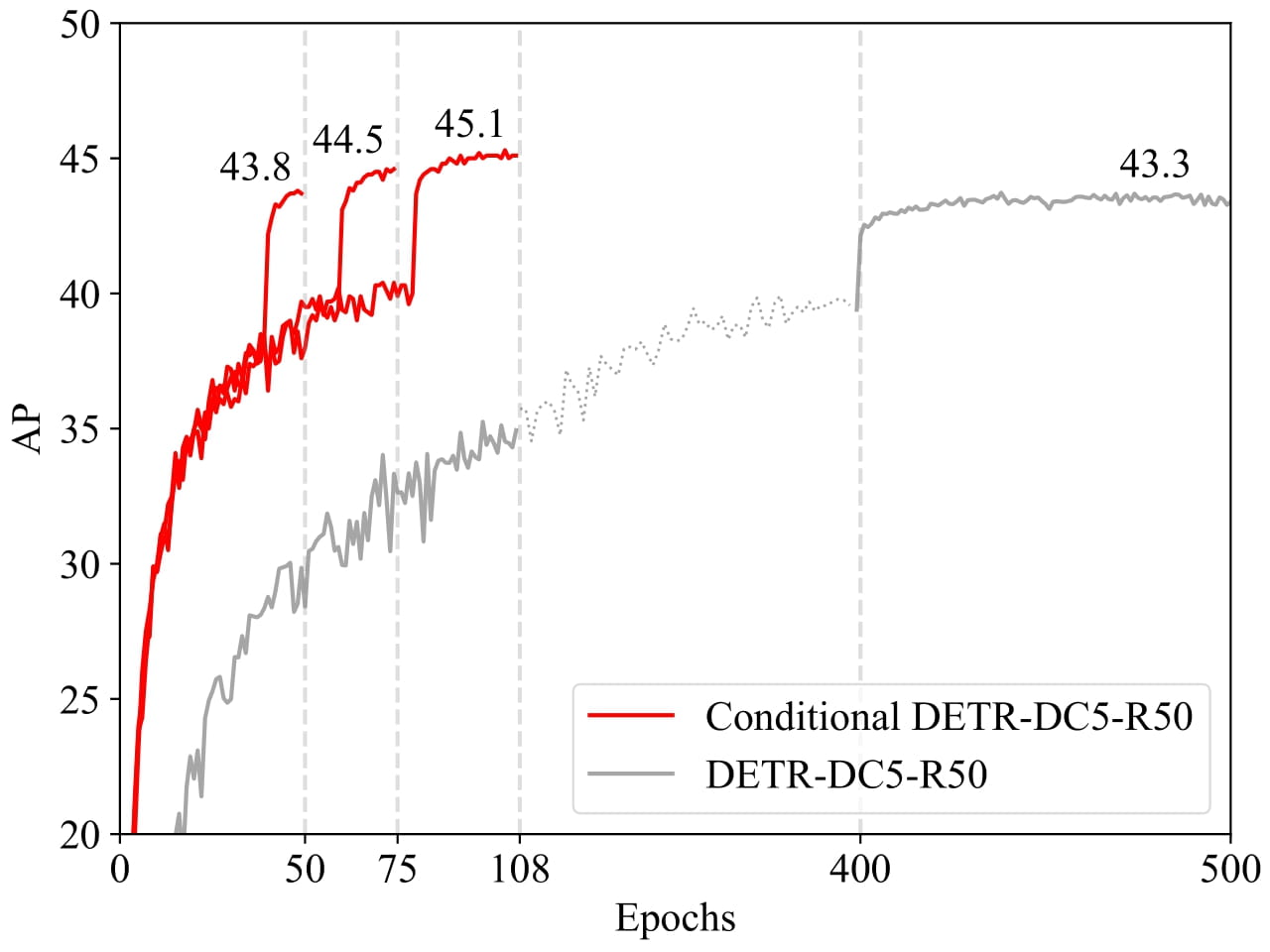

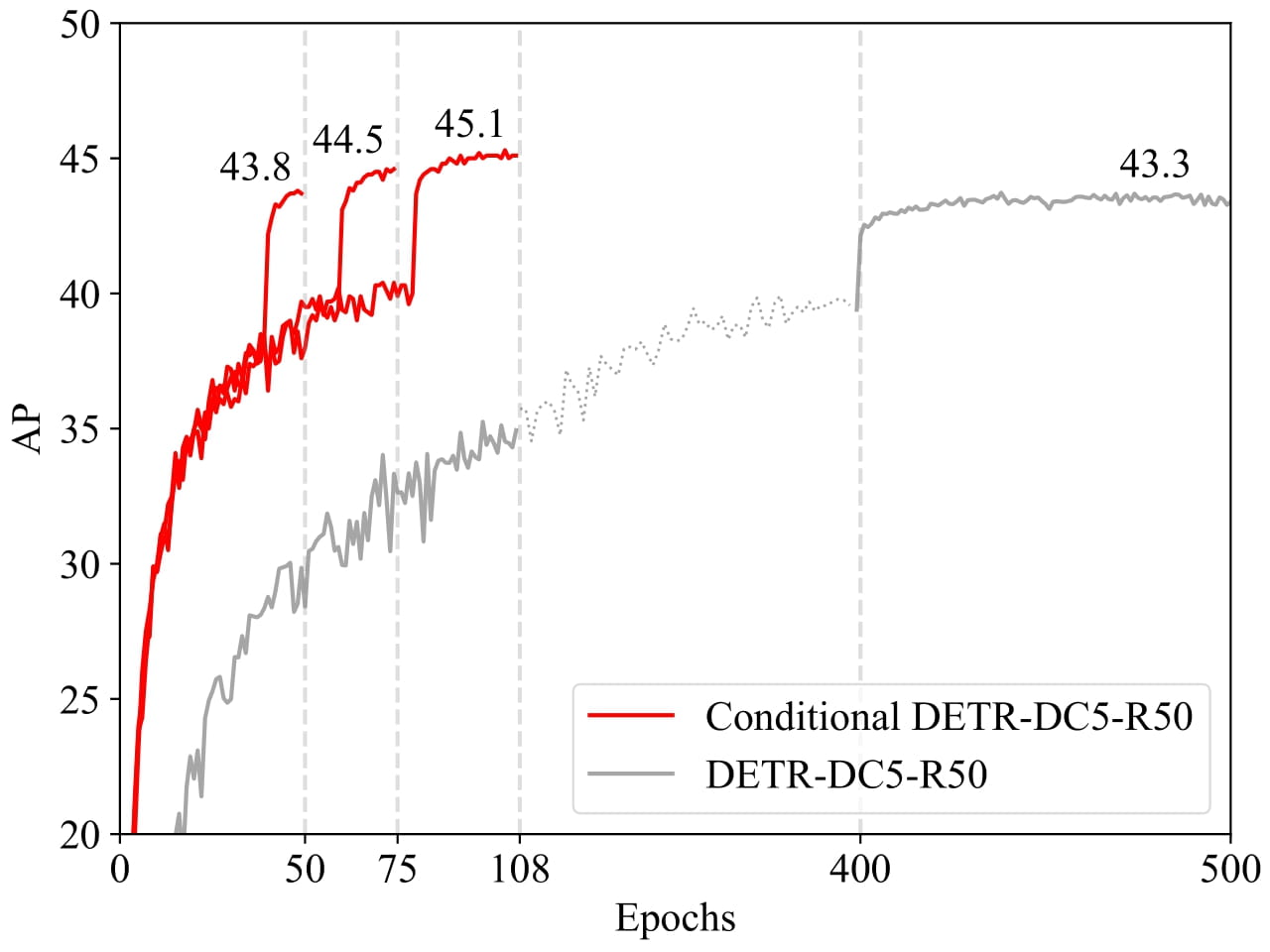

- Fast Convergence: Converges 6.7× faster than DETR with the R50 and R101 backbones and 10× faster with the stronger DC5-R50 and DC5-R101 backbones.

- Model Weights: Available in both PyTorch and Safetensors formats.

📦 Installation

The usage example below requires the `transformers`, `torch`, `Pillow`, and `requests` Python packages, which can be installed with `pip install transformers torch pillow requests`.

💻 Usage Examples

Basic Usage

```python
from transformers import AutoImageProcessor, ConditionalDetrForObjectDetection
import torch
from PIL import Image
import requests

url = "http://images.cocodataset.org/val2017/000000039769.jpg"
image = Image.open(requests.get(url, stream=True).raw)

processor = AutoImageProcessor.from_pretrained("Omnifact/conditional-detr-resnet-101-dc5")
model = ConditionalDetrForObjectDetection.from_pretrained("Omnifact/conditional-detr-resnet-101-dc5")

inputs = processor(images=image, return_tensors="pt")
with torch.no_grad():  # inference only, no gradients needed
    outputs = model(**inputs)

# PIL reports (width, height); the post-processor expects (height, width).
target_sizes = torch.tensor([image.size[::-1]])
# Rescale boxes to the original image size and keep detections scoring above 0.7.
results = processor.post_process_object_detection(outputs, target_sizes=target_sizes, threshold=0.7)[0]

for score, label, box in zip(results["scores"], results["labels"], results["boxes"]):
    box = [round(i, 2) for i in box.tolist()]
    print(
        f"Detected {model.config.id2label[label.item()]} with confidence "
        f"{round(score.item(), 3)} at location {box}"
    )
```

This should output:

```text
Detected cat with confidence 0.865 at location [13.95, 64.98, 327.14, 478.82]
Detected remote with confidence 0.849 at location [39.37, 83.18, 187.67, 125.02]
Detected cat with confidence 0.743 at location [327.22, 35.17, 637.54, 377.04]
Detected remote with confidence 0.737 at location [329.36, 89.47, 376.42, 197.53]
```
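`post_process_object_detection` returns each box as absolute pixel corners `(x_min, y_min, x_max, y_max)`. If a downstream tool expects COCO-style `[x, y, width, height]` boxes instead, a small conversion like the following can be applied to the printed boxes. `to_coco_xywh` is a hypothetical helper for illustration, not part of transformers.

```python
def to_coco_xywh(box, ndigits=2):
    """Convert an (x_min, y_min, x_max, y_max) box to COCO-style [x, y, width, height].

    Hypothetical helper; width and height are rounded to `ndigits` decimals
    to match the rounding used in the usage example.
    """
    x_min, y_min, x_max, y_max = box
    return [x_min, y_min, round(x_max - x_min, ndigits), round(y_max - y_min, ndigits)]


# First detection from the sample output above.
print(to_coco_xywh([13.95, 64.98, 327.14, 478.82]))  # [13.95, 64.98, 313.19, 413.84]
```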

📚 Documentation

Model description

The recently developed DETR approach applies the transformer encoder-decoder architecture to object detection and achieves promising performance. In this paper, we handle the critical issue of slow training convergence and present a conditional cross-attention mechanism for fast DETR training. Our approach is motivated by the observation that cross-attention in DETR relies heavily on the content embeddings for localizing the four extremities and predicting the box, which increases the need for high-quality content embeddings and thus the training difficulty. Our approach, named Conditional DETR, learns a conditional spatial query from the decoder embedding for decoder multi-head cross-attention. The benefit is that, through the conditional spatial query, each cross-attention head can attend to a band containing a distinct region, e.g., one object extremity or a region inside the object box. This narrows down the spatial range for localizing the distinct regions for object classification and box regression, thus relaxing the dependence on the content embeddings and easing the training. Empirical results show that Conditional DETR converges 6.7× faster for the backbones R50 and R101 and 10× faster for the stronger backbones DC5-R50 and DC5-R101.
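The conditional spatial query can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the transformers implementation: it assumes the spatial query is the element-wise product of a DETR-style sine/cosine embedding of a normalized 2D reference point and a scaling vector predicted from the decoder embedding. The names `sine_embed`, `linear`, and `conditional_spatial_query`, the tiny dimension `d = 8`, and the single random linear layer standing in for the learned prediction network are all hypothetical choices.

```python
import math
import random


def sine_embed(coord, dim, temperature=10000.0):
    """DETR-style sine/cosine embedding of one normalized coordinate in [0, 1]."""
    out = []
    for i in range(dim // 2):
        angle = coord * 2 * math.pi / temperature ** (2 * i / dim)
        out.extend([math.sin(angle), math.cos(angle)])
    return out


def linear(weights, bias, x):
    """y = W x + b, with W given as a list of rows."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def conditional_spatial_query(decoder_embedding, ref_point, weights, bias):
    """p_q = lambda_q * p_s (element-wise); lambda_q is predicted from the decoder embedding.

    Assumes the query dimension len(bias) is divisible by 4.
    """
    x, y = ref_point
    dim = len(bias)
    p_s = sine_embed(x, dim // 2) + sine_embed(y, dim // 2)  # embedding of the reference point
    lam = linear(weights, bias, decoder_embedding)  # hypothetical stand-in for the learned network
    return [l * p for l, p in zip(lam, p_s)]


random.seed(0)
d = 8
f = [random.gauss(0, 1) for _ in range(d)]  # decoder embedding from the previous layer
W = [[random.gauss(0, 0.1) for _ in range(d)] for _ in range(d)]
b = [1.0] * d
p_q = conditional_spatial_query(f, (0.25, 0.6), W, b)
print(len(p_q))  # 8
```

Because the scaling vector depends on the decoder embedding, two queries sharing the same reference point can still emphasize different frequency components of the positional embedding, which is what lets different heads focus on different regions.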

Intended uses & limitations

You can use the raw model for object detection. See the model hub for all available Conditional DETR models.

🔧 Technical Details

The model uses a conditional cross-attention mechanism to address the slow training convergence of the original DETR. By learning a conditional spatial query from the decoder embedding, it narrows down the spatial range each attention head searches for object classification and box regression, reducing the dependence on content embeddings and speeding up training.
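The effect of the spatial query on a single cross-attention head can be illustrated numerically. Assuming, as the Conditional DETR paper describes, that the query and each key are formed by concatenating a content part and a spatial part, the dot-product logit splits into a content term plus a spatial term. The sketch below uses toy vectors and hypothetical function names to show that changing only the spatial query moves where the head attends.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention_weights(c_q, p_q, keys):
    """Attention over keys for one head; each key is a (content, position) pair.

    The query is concat(c_q, p_q) and each key is concat(c_k, p_k), so each
    logit equals dot(c_q, c_k) + dot(p_q, p_k).
    """
    logits = [dot(c_q + p_q, c_k + p_k) for c_k, p_k in keys]
    return softmax(logits)


# Three locations with identical content features but different positional embeddings.
keys = [([1.0, 0.0], [1.0, 0.0]),
        ([1.0, 0.0], [0.0, 1.0]),
        ([1.0, 0.0], [-1.0, 0.0])]
c_q = [1.0, 0.0]

w0 = cross_attention_weights(c_q, [4.0, 0.0], keys)
w1 = cross_attention_weights(c_q, [0.0, 4.0], keys)
print(w0.index(max(w0)))  # 0
print(w1.index(max(w1)))  # 1
```

Since the content features are identical here, the content term alone cannot tell the locations apart; the spatial term does the localizing, which is the dependence on content embeddings that Conditional DETR aims to relax.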

📄 License

The model is licensed under the Apache-2.0 license.

Information Table

| Property | Details |
|---|---|
| Model Type | Conditional DETR model with ResNet-101 backbone (dilated C5 stage) |
| Training Data | COCO 2017 object detection (118k/5k annotated images for training/validation, respectively) |

BibTeX entry and citation info

```bibtex
@inproceedings{MengCFZLYS021,
  author    = {Depu Meng and
               Xiaokang Chen and
               Zejia Fan and
               Gang Zeng and
               Houqiang Li and
               Yuhui Yuan and
               Lei Sun and
               Jingdong Wang},
  title     = {Conditional {DETR} for Fast Training Convergence},
  booktitle = {2021 {IEEE/CVF} International Conference on Computer Vision, {ICCV}
               2021, Montreal, QC, Canada, October 10-17, 2021},
}
```