🚀 Model Card for RGBD-SOD/bbsnet

This model card serves as a base template for new models, aiming to provide a comprehensive overview of the model.

📚 Documentation

📋 Model Details

📝 Model Description

- Developed by: [More Information Needed]

- Shared by [optional]: [More Information Needed]

- Model type: [More Information Needed]

- Language(s) (NLP): [More Information Needed]

- License: MIT

- Finetuned from model [optional]: [More Information Needed]

🌐 Model Sources [optional]

🛠️ Uses

📍 Direct Use

This section demonstrates how to use the model directly, without fine-tuning or plugging it into a larger ecosystem or app.

from typing import Dict

import numpy as np

from datasets import load_dataset

from matplotlib import cm

from PIL import Image

from torch import Tensor

from transformers import AutoImageProcessor, AutoModel

model = AutoModel.from_pretrained("RGBD-SOD/bbsnet", trust_remote_code=True)

image_processor = AutoImageProcessor.from_pretrained(
    "RGBD-SOD/bbsnet", trust_remote_code=True
)

dataset = load_dataset("RGBD-SOD/test", "v1", split="train", cache_dir="data")

index = 0

"""

Get a specific sample from the dataset

sample = {
    'depth': <PIL.PngImagePlugin.PngImageFile image mode=L size=640x360>,
    'rgb': <PIL.PngImagePlugin.PngImageFile image mode=RGB size=640x360>,
    'gt': <PIL.PngImagePlugin.PngImageFile image mode=L size=640x360>,
    'name': 'COME_Train_5'
}

"""

sample = dataset[index]

depth: Image.Image = sample["depth"]

rgb: Image.Image = sample["rgb"]

gt: Image.Image = sample["gt"]

name: str = sample["name"]

"""

1. Preprocessing step

preprocessed_sample = {
    'rgb': tensor([[[[-0.8507, ....0365]]]]),
    'gt': tensor([[[[0., 0., 0...., 0.]]]]),
    'depth': tensor([[[[0.9529, 0....3490]]]])
}

"""

preprocessed_sample: Dict[str, Tensor] = image_processor.preprocess(sample)

"""

2. Prediction step

output = {
    'logits': tensor([[[[-5.1966, ...ackward0>)
}

"""

output: Dict[str, Tensor] = model(
    preprocessed_sample["rgb"], preprocessed_sample["depth"]
)

"""

3. Postprocessing step

"""

# PIL's Image.size is (width, height), so the target size is passed as [height, width].
postprocessed_sample: np.ndarray = image_processor.postprocess(
    output["logits"], [sample["gt"].size[1], sample["gt"].size[0]]
)

prediction = Image.fromarray(np.uint8(cm.gist_earth(postprocessed_sample) * 255))

"""

Show the predicted saliency map and the corresponding ground truth (GT)

"""

prediction.show()

gt.show()
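Beyond visual inspection, the prediction can also be compared with the ground truth numerically. The sketch below computes the mean absolute error (MAE) between two maps. It assumes both are 2D arrays of the same shape with values scaled to [0, 1], which this card does not state explicitly. The helper name `mean_absolute_error` and the toy arrays are illustrative and not part of the model's API.

```python
import numpy as np


def mean_absolute_error(prediction: np.ndarray, ground_truth: np.ndarray) -> float:
    """Mean absolute per-pixel difference between two same-shaped maps."""
    if prediction.shape != ground_truth.shape:
        raise ValueError("prediction and ground truth must have the same shape")
    difference = prediction.astype(np.float64) - ground_truth.astype(np.float64)
    return float(np.mean(np.abs(difference)))


# Toy 2x2 maps standing in for a predicted saliency map and its ground truth.
toy_prediction = np.array([[0.0, 1.0], [0.5, 0.5]])
toy_ground_truth = np.array([[0.0, 1.0], [1.0, 0.0]])
toy_mae = mean_absolute_error(toy_prediction, toy_ground_truth)
print(toy_mae)
```

With the objects from the snippet above, the ground truth could be scaled with `np.asarray(gt, dtype=np.float64) / 255.0` before being passed in, assuming `gt` is an 8-bit mask and `postprocessed_sample` already lies in [0, 1].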

➡️ Downstream Use [optional]

[More Information Needed]

❌ Out-of-Scope Use

This section addresses misuse, malicious use, and uses that the model will not work well for.

[More Information Needed]

⚠️ Bias, Risks, and Limitations

This section is meant to convey both technical and sociotechnical limitations.

[More Information Needed]

💡 Recommendations

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

🚀 How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]
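Until official instructions are provided, the three steps from the Direct Use section can be wrapped in a single function as a starting point. This is a minimal sketch: `predict_saliency` is a hypothetical helper name, and it relies only on the `preprocess` call, the model call, and the `postprocess` call as they appear in Direct Use.

```python
from typing import Any, Dict


def predict_saliency(model: Any, image_processor: Any, sample: Dict[str, Any]) -> Any:
    """Run preprocessing, prediction, and postprocessing for one sample."""
    # 1. Preprocessing: convert the sample's images into model inputs.
    preprocessed = image_processor.preprocess(sample)
    # 2. Prediction: the model takes the RGB and depth inputs.
    output = model(preprocessed["rgb"], preprocessed["depth"])
    # 3. Postprocessing: PIL's Image.size is (width, height),
    #    while the Direct Use code passes the target size as [height, width].
    width, height = sample["gt"].size
    return image_processor.postprocess(output["logits"], [height, width])
```

Calling `predict_saliency(model, image_processor, dataset[0])` with the objects loaded in Direct Use would then return the postprocessed map for the first sample.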

🏋️ Training Details

📊 Training Data

[More Information Needed]

📋 Training Procedure

⚙️ Preprocessing [optional]

[More Information Needed]

⚖️ Training Hyperparameters

- Training regime: [More Information Needed]

⏱️ Speeds, Sizes, Times [optional]

This section provides information about throughput, start/end time, checkpoint size if relevant.

[More Information Needed]

📈 Evaluation

📝 Testing Data, Factors & Metrics

📊 Testing Data

[More Information Needed]

🔍 Factors

These are the factors by which the evaluation is disaggregated, e.g., subpopulations or domains.

[More Information Needed]

📏 Metrics

These are the evaluation metrics being used, ideally with a description of why.

[More Information Needed]

📊 Results

[More Information Needed]

📋 Summary

[More Information Needed]

🌱 Environmental Impact

Carbon emissions can be estimated using the Machine Learning Impact calculator presented in Lacoste et al. (2019).

- Hardware Type: [More Information Needed]

- Hours used: [More Information Needed]

- Cloud Provider: [More Information Needed]

- Compute Region: [More Information Needed]

- Carbon Emitted: [More Information Needed]
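The fields above feed a simple back-of-the-envelope estimate: energy consumed multiplied by the carbon intensity of the local grid. The sketch below shows that arithmetic only. It is not the calculator's exact method, the function name `estimate_co2_kg` is hypothetical, and every input value is a placeholder rather than a measurement for this model.

```python
def estimate_co2_kg(power_watts: float, hours: float, kg_co2_per_kwh: float) -> float:
    """Estimate emissions as energy used (kWh) times grid carbon intensity."""
    energy_kwh = power_watts / 1000.0 * hours
    return energy_kwh * kg_co2_per_kwh


# Hypothetical inputs: a 250 W accelerator running for 10 hours
# on a grid emitting 0.4 kg CO2eq per kWh.
example_kg = estimate_co2_kg(power_watts=250.0, hours=10.0, kg_co2_per_kwh=0.4)
print(example_kg)
```

Hardware type determines the power draw, hours used sets the duration, and cloud provider plus compute region determine the carbon intensity.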

⚙️ Technical Specifications [optional]

🧠 Model Architecture and Objective

[More Information Needed]

💻 Compute Infrastructure

🖥️ Hardware

[More Information Needed]

💽 Software

[More Information Needed]

📚 Citation [optional]

BibTeX:

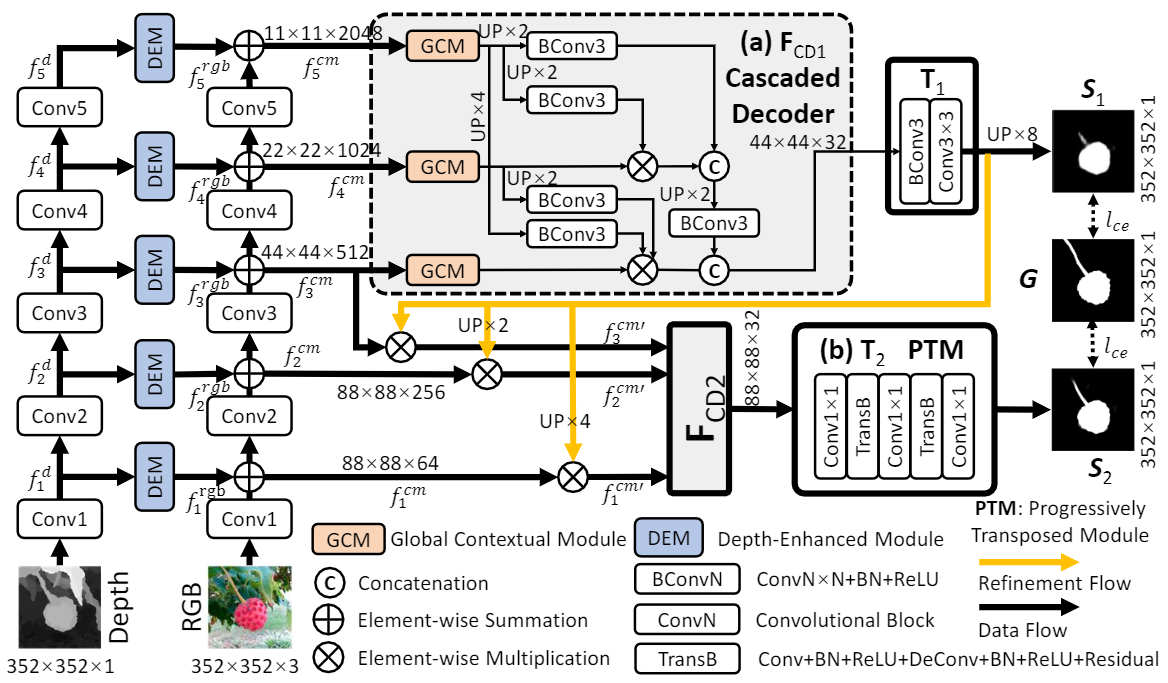

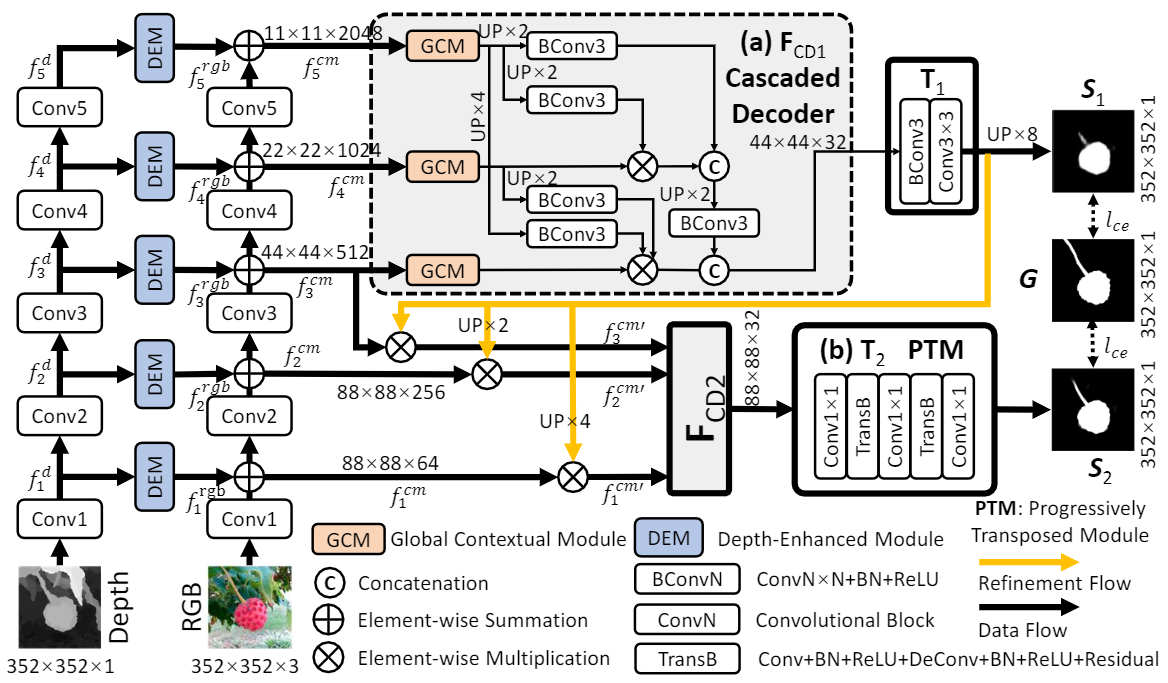

@inproceedings{fan2020bbs,
  title={BBS-Net: RGB-D salient object detection with a bifurcated backbone strategy network},
  author={Fan, Deng-Ping and Zhai, Yingjie and Borji, Ali and Yang, Jufeng and Shao, Ling},
  booktitle={Computer Vision--ECCV 2020: 16th European Conference, Glasgow, UK, August 23--28, 2020, Proceedings, Part XII},
  pages={275--292},
  year={2020},
  organization={Springer}
}

APA:

[More Information Needed]

📖 Glossary [optional]

[More Information Needed]

ℹ️ More Information [optional]

[More Information Needed]

📝 Model Card Authors [optional]

[More Information Needed]

📞 Model Card Contact

[More Information Needed]