🚀 WizardLM-2

WizardLM-2 is a next-generation state-of-the-art large language model. It shows improved performance in complex chat, multilingual communication, reasoning, and agent tasks.

🗞️ News 🔥🔥🔥 [2024/04/15]

We introduce and open-source WizardLM-2, our next-generation state-of-the-art large language models. They have improved performance on complex chat, multilingual, reasoning, and agent tasks. The new family includes three cutting-edge models: WizardLM-2 8x22B, WizardLM-2 70B, and WizardLM-2 7B.

- WizardLM-2 8x22B is our most advanced model. It demonstrates highly competitive performance compared to leading proprietary models and consistently outperforms all existing state-of-the-art open-source models.

- WizardLM-2 70B reaches top-tier reasoning capabilities and is the first choice among models of its size.

- WizardLM-2 7B is the fastest of the three models and achieves performance comparable to leading open-source models 10x its size.

For more details on WizardLM-2, please read our release blog post and upcoming paper.

📚 Model Details

💪 Model Capabilities

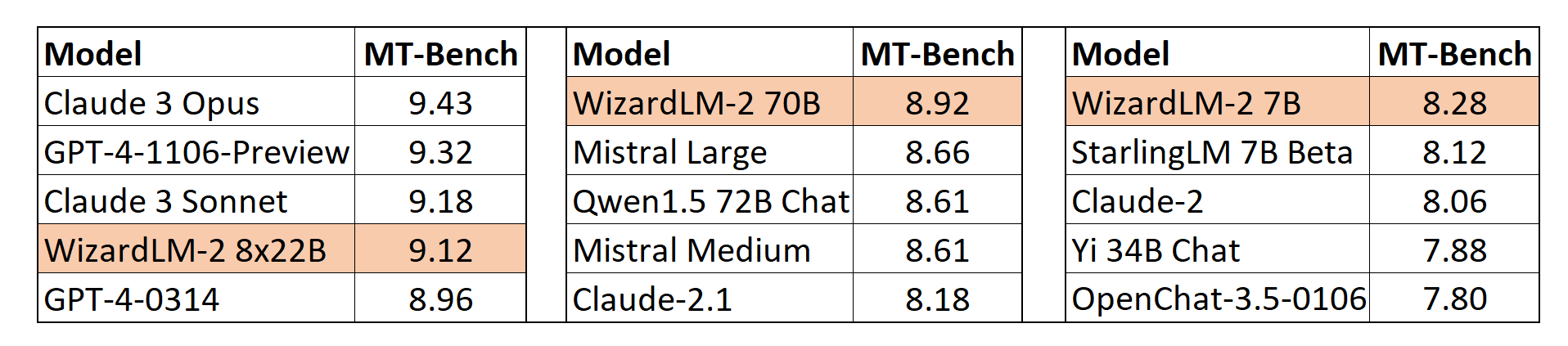

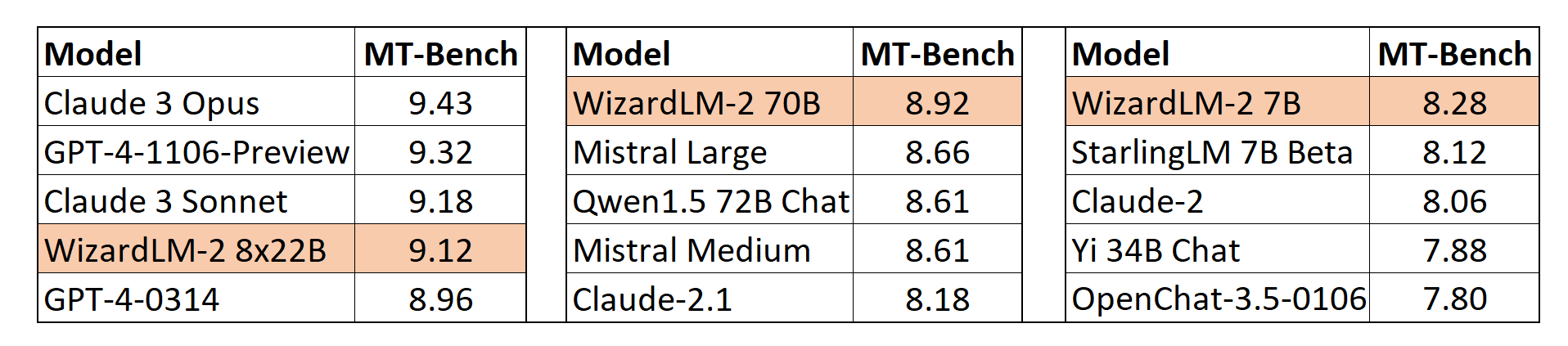

📊 MT-Bench

We also adopt the automatic MT-Bench evaluation framework proposed by LMSYS, which uses GPT-4 as a judge, to assess model performance. WizardLM-2 8x22B demonstrates highly competitive performance compared to the most advanced proprietary models. Meanwhile, WizardLM-2 7B and WizardLM-2 70B are the top-performing models among the leading baselines at the 7B to 70B model scales.

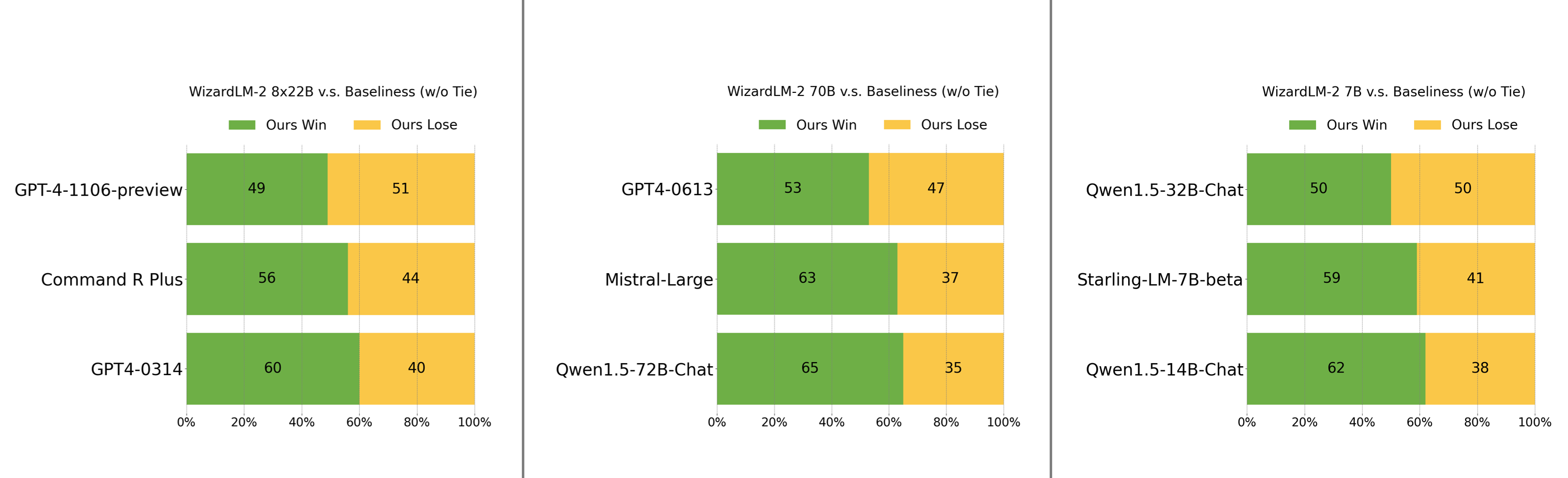

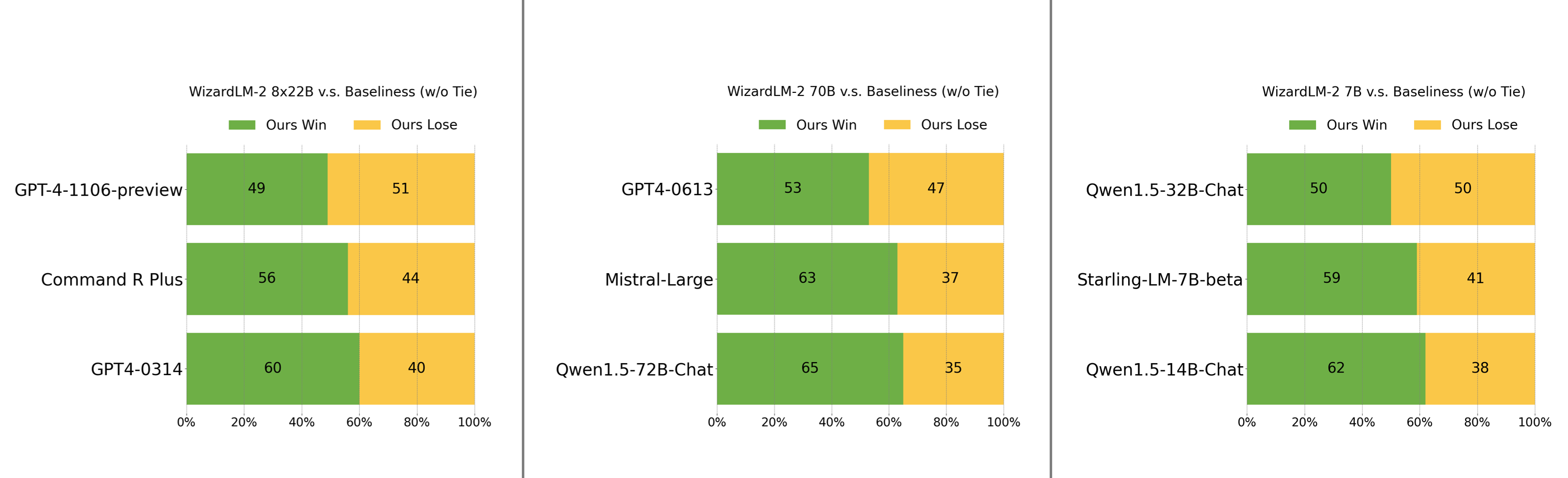

👥 Human Preferences Evaluation

We carefully collected a complex and challenging set of real-world instructions covering the main categories of human needs, such as writing, coding, math, reasoning, agent, and multilingual tasks. We report the win:loss rate excluding ties:

- WizardLM-2 8x22B falls only slightly behind GPT-4-1106-preview and is significantly stronger than Command R Plus and GPT-4-0314.

- WizardLM-2 70B is better than GPT-4-0613, Mistral-Large, and Qwen1.5-72B-Chat.

- WizardLM-2 7B is comparable to Qwen1.5-32B-Chat and surpasses Qwen1.5-14B-Chat and Starling-LM-7B-beta.
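The exact formula behind the win:loss rate is not given here. A minimal sketch under one common reading, where ties are dropped and the remaining decisive comparisons are split into win and loss percentages, could look like this. The function name `win_loss_rate` and the counts in the example are hypothetical and are not WizardLM-2 results.

```python
from typing import Tuple


def win_loss_rate(wins: int, losses: int, ties: int = 0) -> Tuple[float, float]:
    """Return (win %, loss %) computed over decisive comparisons only.

    Hypothetical helper: ties are validated but excluded from the
    denominator, which is one plausible reading of "win:loss rate
    without tie".
    """
    if min(wins, losses, ties) < 0:
        raise ValueError("counts must be non-negative")
    decisive = wins + losses  # ties are dropped entirely
    if decisive == 0:
        raise ValueError("no decisive comparisons (all ties)")
    return 100.0 * wins / decisive, 100.0 * losses / decisive


if __name__ == "__main__":
    # Hypothetical counts for illustration, not WizardLM-2 results.
    win, loss = win_loss_rate(wins=120, losses=80, ties=50)
    print(f"win:loss = {win:.1f}:{loss:.1f}")  # win:loss = 60.0:40.0
```

Excluding ties keeps the two percentages summing to 100, so each model is compared only on the instructions where annotators expressed a preference.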

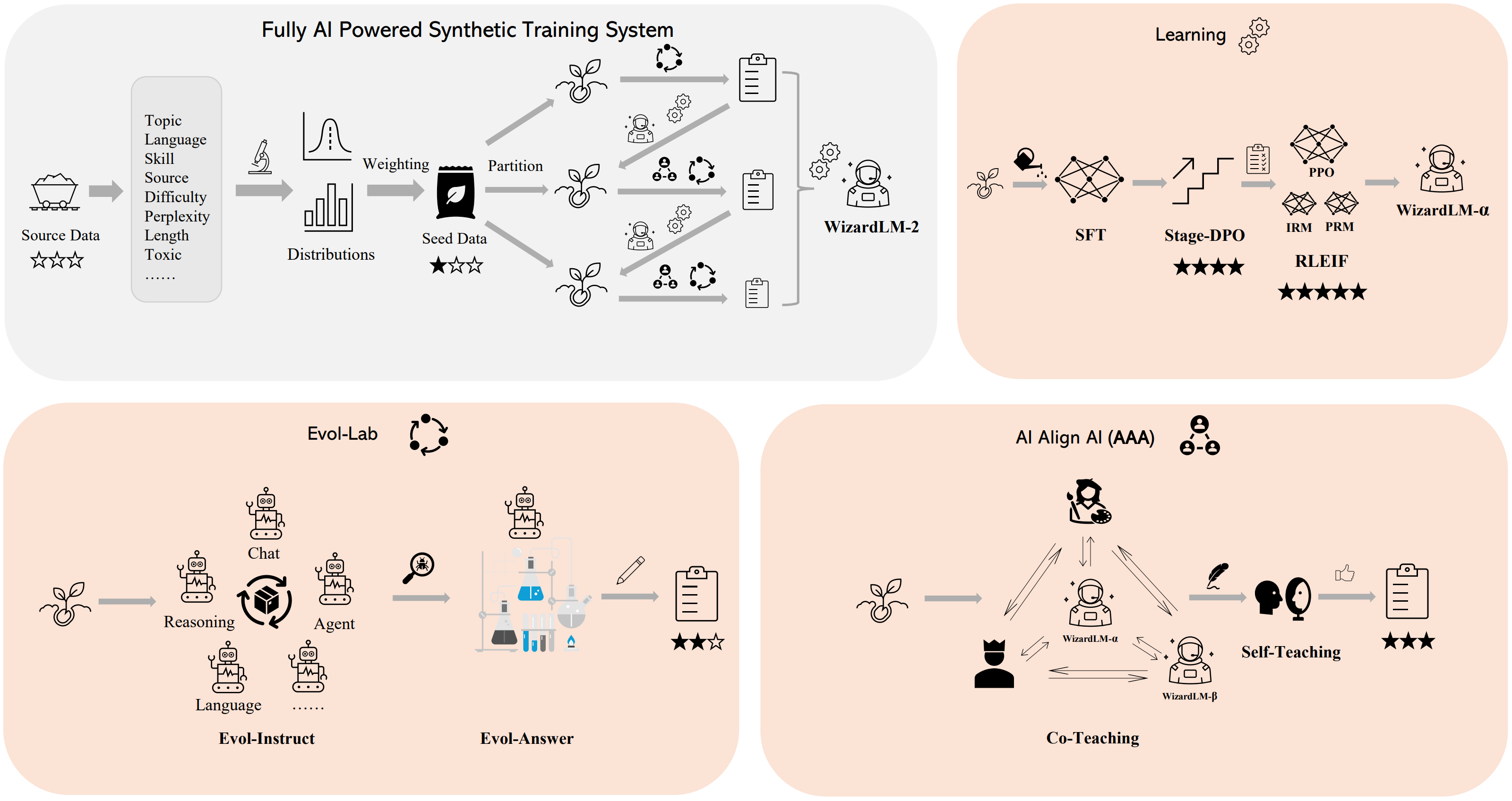

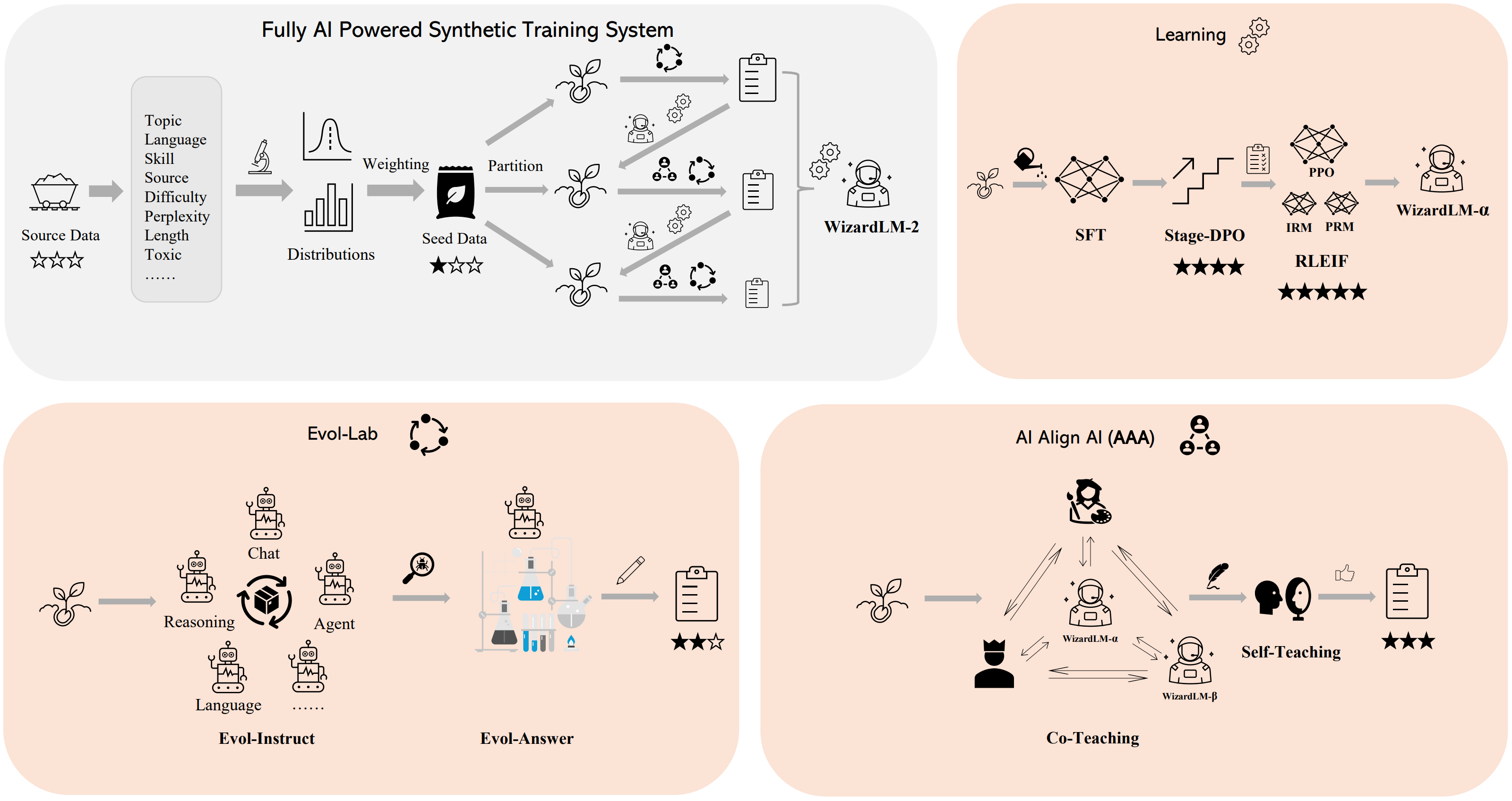

🛠️ Method Overview

We built a fully AI-powered synthetic training system to train the WizardLM-2 models. Please refer to our blog for more details on this system.

💻 Usage

⚠️ Important Note

Note on system prompt usage:

WizardLM-2 adopts the prompt format from Vicuna and supports multi-turn conversation. The prompt should be formatted as follows:

A chat between a curious user and an artificial intelligence assistant. The assistant gives helpful, detailed, and polite answers to the user's questions. USER: Hi ASSISTANT: Hello.</s>USER: Who are you? ASSISTANT: I am WizardLM.</s>......
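The multi-turn template above can be assembled programmatically. The sketch below is a hypothetical helper (`build_prompt` and `SYSTEM_PROMPT` are illustrative names, not part of the WizardLM-2 release) that reproduces the spacing shown: the system prompt, then `USER: ... ASSISTANT: ...</s>` for each completed turn. Leaving the final assistant reply empty so the model can generate it is an assumption based on the usual Vicuna convention, and whether the literal `</s>` string is mapped to the end-of-sequence token depends on the tokenizer you use.

```python
from typing import Optional, Sequence, Tuple

# System prompt copied from the template above.
SYSTEM_PROMPT = (
    "A chat between a curious user and an artificial intelligence assistant. "
    "The assistant gives helpful, detailed, and polite answers to the user's questions."
)


def build_prompt(
    turns: Sequence[Tuple[str, Optional[str]]],
    system: str = SYSTEM_PROMPT,
) -> str:
    """Assemble a Vicuna-style multi-turn prompt (hypothetical helper).

    Each completed turn is rendered as "USER: <u> ASSISTANT: <a></s>".
    Passing None as the last assistant reply leaves the prompt ending in
    "ASSISTANT:" so the model can generate it (assumed Vicuna convention).
    """
    prompt = system + " "
    for i, (user_msg, assistant_msg) in enumerate(turns):
        prompt += f"USER: {user_msg} ASSISTANT:"
        if assistant_msg is None:
            # Only the final turn may be left open for generation.
            if i != len(turns) - 1:
                raise ValueError("only the last turn may omit the assistant reply")
            break
        # "</s>" closes each assistant reply; no space before the next "USER:".
        prompt += f" {assistant_msg}</s>"
    return prompt


if __name__ == "__main__":
    # Two-turn conversation with the last reply left open for the model.
    print(build_prompt([("Hi", "Hello."), ("Who are you?", None)]))
```

Keeping the conversation as a list of (user, assistant) pairs makes it easy to append new turns and rebuild the full prompt before each generation call.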

🚀 WizardLM-2 Inference Demo Script

We provide WizardLM-2 inference demo code on our GitHub.