🚀 BigVGAN: A Universal Neural Vocoder with Large-Scale Training

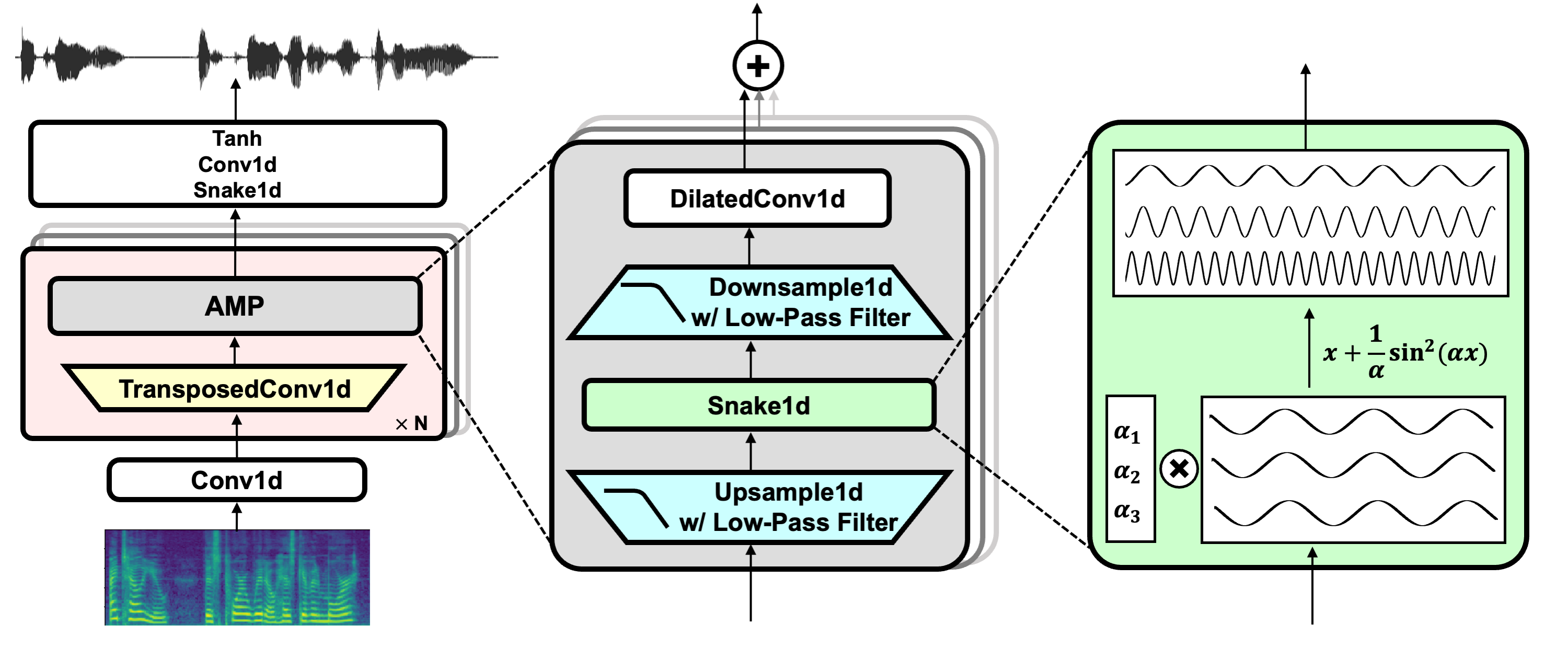

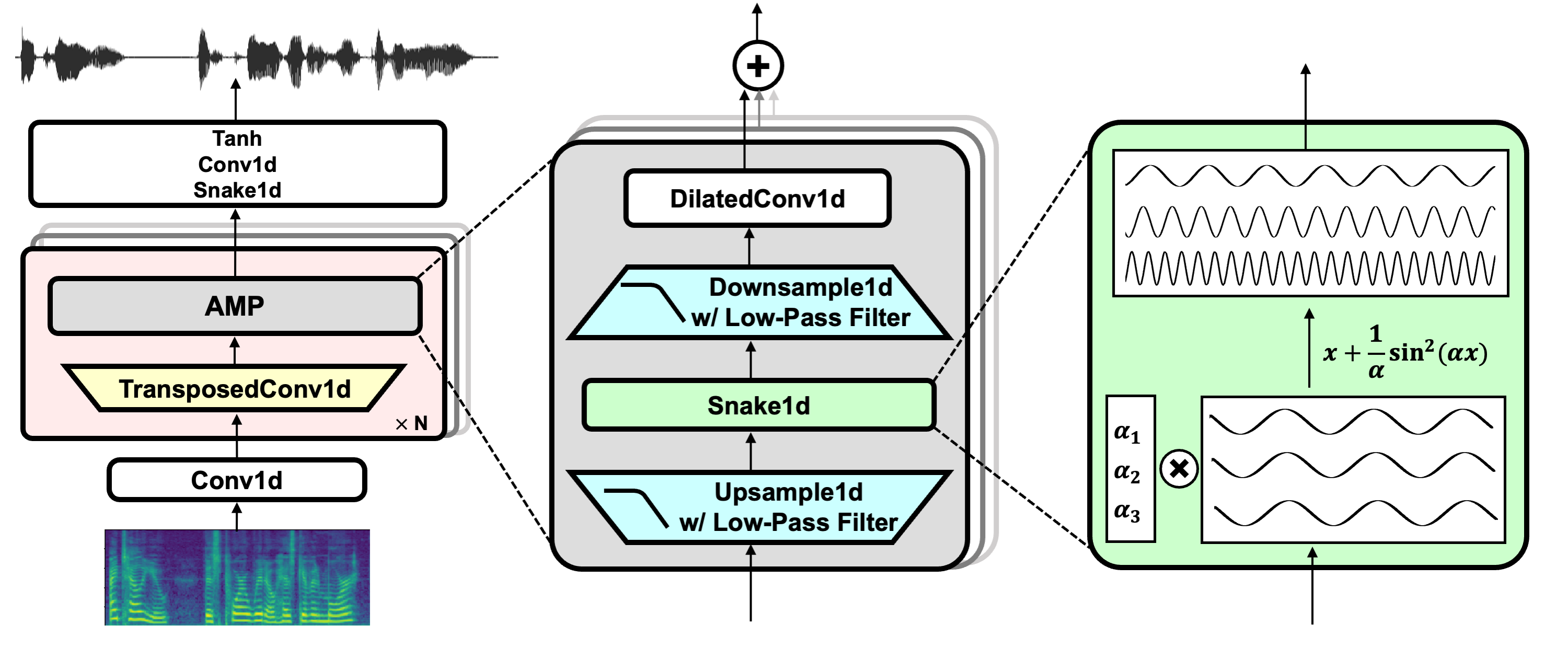

BigVGAN is a universal neural vocoder trained on a large scale, which can be used for audio generation and speech synthesis.

Sang-gil Lee, Wei Ping, Boris Ginsburg, Bryan Catanzaro, Sungroh Yoon

[Paper] - [Code] - [Showcase] - [Project Page] - [Weights] - [Demo]

🚀 Quick Start

This README provides information about BigVGAN, including news, installation, usage, and details of pretrained models.

✨ Features

- General Refactor: In July 2024 (v2.3), the code was refactored for better readability.

- Fused CUDA Kernel: A fully fused CUDA kernel for the anti-aliased activation was introduced in July 2024 (v2.3), along with an inference speed benchmark.

- Interactive Demo: An interactive local demo using gradio was added in July 2024 (v2.2).

- Hugging Face Integration: Integrated with 🤗 Hugging Face Hub in July 2024 (v2.1), providing easy access to inference and an interactive demo on Hugging Face Spaces.

- BigVGAN-v2 Release: In July 2024 (v2), BigVGAN-v2 was released with a custom CUDA kernel for faster inference, improved discriminator and loss, and larger training data.

📦 Installation

This repository contains pretrained BigVGAN checkpoints with easy access to inference and additional huggingface_hub support.

If you are interested in training the model and additional functionalities, please visit the official GitHub repository for more information: https://github.com/NVIDIA/BigVGAN

    git lfs install
    git clone https://huggingface.co/nvidia/bigvgan_v2_22khz_80band_fmax8k_256x

💻 Usage Examples

Basic Usage

The following example shows how to use BigVGAN: load the pretrained BigVGAN generator from Hugging Face Hub, compute the mel spectrogram from the input waveform, and generate the synthesized waveform using the mel spectrogram as the model's input.

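    import librosa
    import torch

    import bigvgan
    from meldataset import get_mel_spectrogram

    device = 'cuda'

    # Load the pretrained generator; use_cuda_kernel=False keeps the plain PyTorch path.
    model = bigvgan.BigVGAN.from_pretrained('nvidia/bigvgan_v2_22khz_80band_fmax8k_256x', use_cuda_kernel=False)

    # Remove weight norm and switch to eval mode for inference.
    model.remove_weight_norm()
    model = model.eval().to(device)

    # Load the audio as mono, resampled to the model's sampling rate.
    wav_path = '/path/to/your/audio.wav'
    wav, sr = librosa.load(wav_path, sr=model.h.sampling_rate, mono=True)
    wav = torch.FloatTensor(wav).unsqueeze(0)  # add a batch dimension

    # Compute the mel spectrogram with the model's own hyperparameters.
    mel = get_mel_spectrogram(wav, model.h).to(device)

    # Generate the waveform from the mel spectrogram.
    with torch.inference_mode():
        wav_gen = model(mel)
    wav_gen_float = wav_gen.squeeze(0).cpu()

    # Optionally convert the generated waveform to 16-bit linear PCM.
    wav_gen_int16 = (wav_gen_float * 32767.0).numpy().astype('int16')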
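The example above stops at an int16 array. As a minimal sketch of the remaining step, the following converts float samples in [-1, 1] to 16-bit PCM and writes a mono WAV file using only the Python standard library. The helper names (`float_to_int16`, `write_wav_int16`), the output path, and the 22050 Hz rate are illustrative assumptions, not part of the BigVGAN API. The explicit clipping is an addition that guards against integer overflow if a sample falls slightly outside [-1, 1].

```python
import array
import math
import os
import sys
import tempfile
import wave


def float_to_int16(samples):
    """Scale floats in [-1, 1] to int16, clipping out-of-range values first."""
    clipped = (max(-1.0, min(1.0, s)) for s in samples)
    return array.array("h", (int(c * 32767.0) for c in clipped))


def write_wav_int16(path, pcm, sampling_rate):
    """Write a mono 16-bit PCM WAV file (hypothetical helper)."""
    data = array.array("h", pcm)  # copy, so the caller's buffer is untouched
    if sys.byteorder == "big":
        data.byteswap()  # WAV stores samples in little-endian order
    with wave.open(path, "wb") as f:
        f.setnchannels(1)
        f.setsampwidth(2)  # 2 bytes per sample = 16 bit
        f.setframerate(sampling_rate)
        f.writeframes(data.tobytes())


# Stand-in for generated audio: 0.1 s of a 440 Hz sine at an assumed 22050 Hz.
sr = 22050
demo = [0.5 * math.sin(2 * math.pi * 440 * n / sr) for n in range(sr // 10)]
out_path = os.path.join(tempfile.gettempdir(), "bigvgan_demo.wav")
write_wav_int16(out_path, float_to_int16(demo), sr)
```

With the tensors from the example above, `wav_gen_float.squeeze().tolist()` could be passed as `samples` and `model.h.sampling_rate` as the rate.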

Advanced Usage

You can enable the fast CUDA inference kernel by passing use_cuda_kernel=True when instantiating BigVGAN:

    import bigvgan

    model = bigvgan.BigVGAN.from_pretrained('nvidia/bigvgan_v2_22khz_80band_fmax8k_256x', use_cuda_kernel=True)

The first time the kernel is used, it is built with nvcc and ninja. If the build succeeds, the kernel is saved to alias_free_activation/cuda/build and the model loads it automatically. The codebase has been tested with CUDA 12.1.

Make sure that both nvcc and ninja are installed on your system, and that the installed nvcc version matches the CUDA version your PyTorch build uses.

For details, see the official GitHub repository: https://github.com/NVIDIA/BigVGAN?tab=readme-ov-file#using-custom-cuda-kernel-for-synthesis
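The prerequisites described in this section can be checked before the first build. The sketch below is not part of the BigVGAN codebase: `parse_nvcc_release` and `check_cuda_build_tools` are hypothetical helpers, and treating an exact major.minor match between nvcc and `torch.version.cuda` as "matching" is an assumption that may be stricter than the build actually requires.

```python
import re
import shutil
import subprocess


def parse_nvcc_release(version_output):
    """Extract the 'major.minor' release from `nvcc --version` output, or None."""
    match = re.search(r"release\s+(\d+\.\d+)", version_output)
    return match.group(1) if match else None


def check_cuda_build_tools():
    """Report whether nvcc and ninja are on PATH and which CUDA versions are seen."""
    report = {
        "nvcc": shutil.which("nvcc"),
        "ninja": shutil.which("ninja"),
        "nvcc_cuda": None,
        "torch_cuda": None,
    }
    if report["nvcc"]:
        out = subprocess.run(
            [report["nvcc"], "--version"],
            capture_output=True, text=True, check=False,
        ).stdout
        report["nvcc_cuda"] = parse_nvcc_release(out)
    try:
        import torch  # optional: only needed for the version comparison

        report["torch_cuda"] = torch.version.cuda  # None for CPU-only builds
    except ImportError:
        pass
    return report


report = check_cuda_build_tools()
ready = (
    report["nvcc"] is not None
    and report["ninja"] is not None
    and report["nvcc_cuda"] is not None
    and report["nvcc_cuda"] == report["torch_cuda"]
)
print(report, "| looks ready for use_cuda_kernel=True:", ready)
```

If the check reports a missing tool or a version mismatch, loading with use_cuda_kernel=False avoids the build step entirely.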

📚 Documentation

News

- Jul 2024 (v2.3):

  - General refactor and code improvements for better readability.

  - Fully fused CUDA kernel of anti-aliased activation (upsampling + activation + downsampling), with an inference speed benchmark.

- Jul 2024 (v2.2): The repository now includes an interactive local demo using gradio.

- Jul 2024 (v2.1): BigVGAN is now integrated with 🤗 Hugging Face Hub with easy access to inference using pretrained checkpoints. We also provide an interactive demo on Hugging Face Spaces.

- Jul 2024 (v2): We release BigVGAN-v2 along with pretrained checkpoints. Below are the highlights:

  - Custom CUDA kernel for inference: we provide a fused upsampling + activation kernel written in CUDA for accelerated inference. Our test shows 1.5x to 3x faster inference on a single A100 GPU.

  - Improved discriminator and loss: BigVGAN-v2 is trained using a multi-scale sub-band CQT discriminator and a multi-scale mel spectrogram loss.

  - Larger training data: BigVGAN-v2 is trained using datasets containing diverse audio types, including speech in multiple languages, environmental sounds, and instruments.

  - We provide pretrained checkpoints of BigVGAN-v2 using diverse audio configurations, supporting up to 44 kHz sampling rate and 512x upsampling ratio.
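To make the "upsampling ratio" in the last item concrete: a vocoder with ratio R turns each mel frame into R audio samples. The sketch below works through that arithmetic. It assumes that "22 kHz" and "44 kHz" mean 22050 Hz and 44100 Hz, and that the output length is exactly frames times ratio; the function names are illustrative only.

```python
def samples_from_frames(n_frames, upsampling_ratio):
    """Number of output samples for a mel spectrogram with n_frames frames."""
    return n_frames * upsampling_ratio


def frames_per_second(sampling_rate, upsampling_ratio):
    """Mel frames needed per second of generated audio."""
    return sampling_rate / upsampling_ratio


# Assumed configurations: (sampling rate in Hz, upsampling ratio).
configs = {
    "22 kHz, 256x": (22050, 256),
    "44 kHz, 512x": (44100, 512),
}

for name, (sr, ratio) in configs.items():
    n = samples_from_frames(100, ratio)
    print(f"{name}: 100 frames -> {n} samples ({n / sr:.2f} s), "
          f"{frames_per_second(sr, ratio):.1f} frames/s")
```

Under these assumptions both configurations need about 86 mel frames per second of audio, because doubling the sampling rate is offset by doubling the upsampling ratio.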

Pretrained Models

We provide the pretrained models on Hugging Face Collections.

Each listed model repository contains the generator weights (bigvgan_generator.pt) and the discriminator/optimizer states (bigvgan_discriminator_optimizer.pt), both available for download.
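A single checkpoint file can also be fetched programmatically. The following is a minimal sketch using `hf_hub_download` from the huggingface_hub package; the `download_checkpoint` wrapper and the `CHECKPOINT_FILES` mapping are hypothetical conveniences built around the two file names given above, not part of BigVGAN.

```python
CHECKPOINT_FILES = {
    "generator": "bigvgan_generator.pt",
    "discriminator_optimizer": "bigvgan_discriminator_optimizer.pt",
}


def download_checkpoint(repo_id, kind="generator"):
    """Download one checkpoint file from a model repository; return its local path."""
    if kind not in CHECKPOINT_FILES:
        raise ValueError(f"unknown checkpoint kind: {kind!r}")
    # Imported lazily so the helper can be defined without huggingface_hub installed.
    from huggingface_hub import hf_hub_download

    return hf_hub_download(repo_id=repo_id, filename=CHECKPOINT_FILES[kind])


# Example call (requires network access and the huggingface_hub package):
# path = download_checkpoint("nvidia/bigvgan_v2_22khz_80band_fmax8k_256x")
```

The discriminator/optimizer file is only needed to resume or fine-tune training; inference uses the generator weights alone.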

📄 License

This project is licensed under the MIT License. See LICENSE for details.
