🚀 BLIP-2, OPT-2.7b, fine-tuned on COCO

This BLIP-2 model uses OPT-2.7b, a large language model with 2.7 billion parameters. It was introduced in the paper BLIP-2: Bootstrapping Language-Image Pre-training with Frozen Image Encoders and Large Language Models by Li et al. and first released in this repository.

Disclaimer: The team releasing BLIP-2 did not write a model card for this model, so this model card has been written by the Hugging Face team.

✨ Features

- Multi-task Capability: Can be used for image captioning, visual question answering (VQA), and chat-like conversations.

- Bridge between Image and Text: The Querying Transformer bridges the gap between the image encoder's embedding space and the large language model.

📚 Documentation

📋 Model description

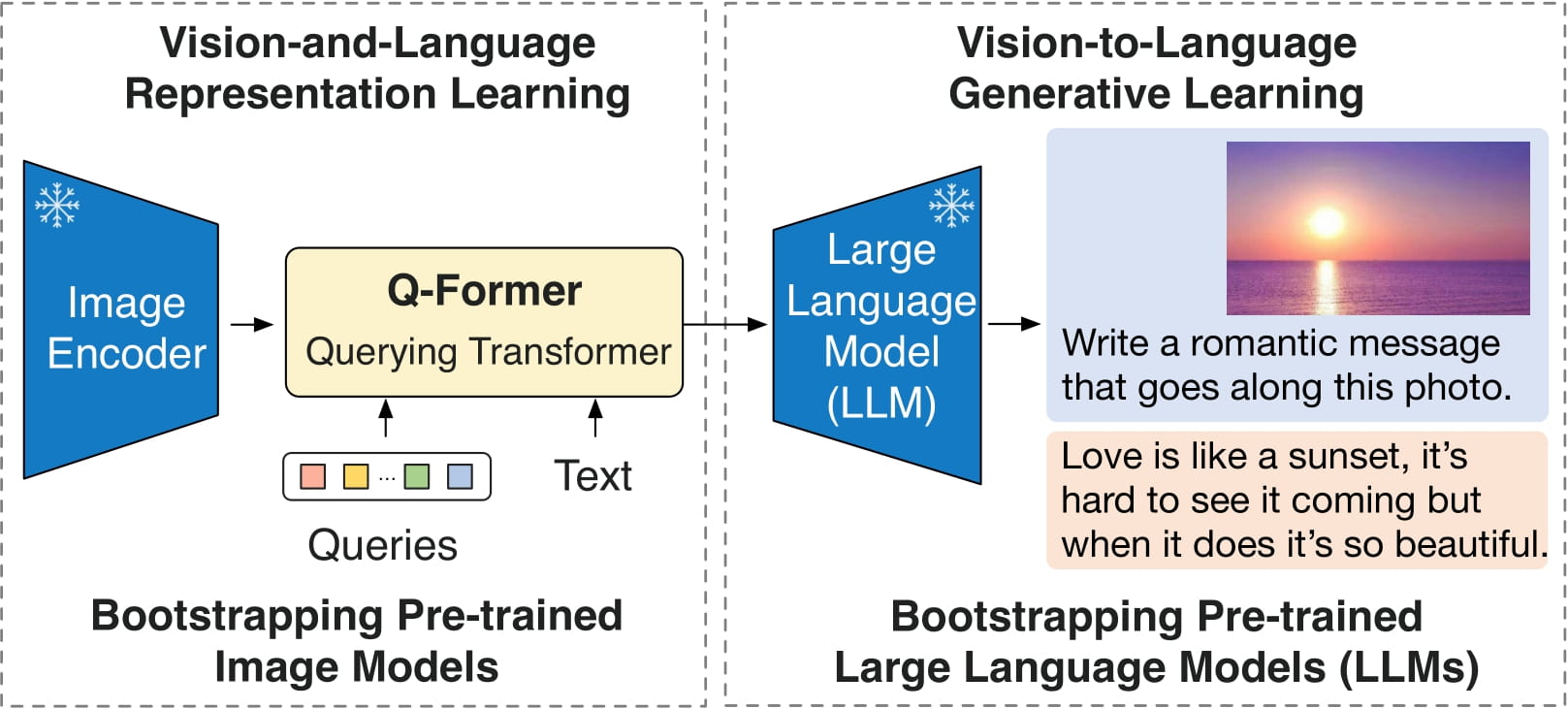

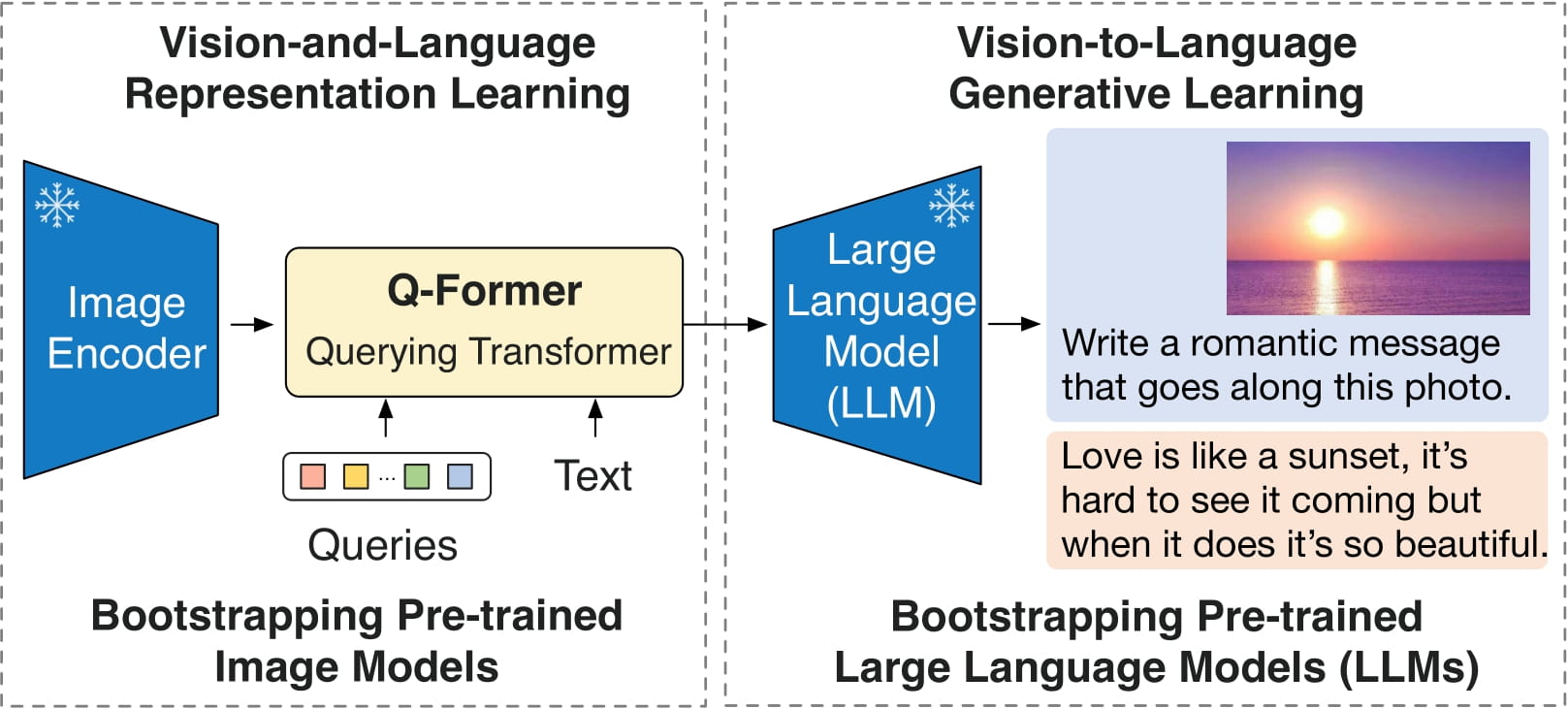

BLIP-2 consists of three models: a CLIP-like image encoder, a Querying Transformer (Q-Former), and a large language model.

The authors initialize the weights of the image encoder and large language model from pre-trained checkpoints and keep them frozen while training the Querying Transformer. The Querying Transformer is a BERT-like Transformer encoder that maps a set of "query tokens" to query embeddings, which bridge the gap between the embedding space of the image encoder and the large language model.

The model's goal is to predict the next text token, given the query embeddings and the previous text.
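The objective can be illustrated with a toy layout of the sequence the language model sees. This is a conceptual sketch in plain Python, not code from the BLIP-2 repository: the helper names are hypothetical, the token IDs are made up, and using `-100` as the "ignore" label is an assumption borrowed from the default `ignore_index` of PyTorch's cross-entropy loss. Query embeddings come first, text tokens follow, and only text tokens serve as prediction targets.

```python
IGNORE = -100  # assumed "no loss here" marker (PyTorch cross-entropy default)


def build_lm_inputs(num_query_tokens, text_token_ids):
    """Lay out the sequence: query-embedding slots first, then text tokens.

    Returns (positions, labels). Query slots carry IGNORE as their label
    because the model is never asked to predict a query embedding.
    """
    positions = [("query", i) for i in range(num_query_tokens)]
    positions += [("text", t) for t in text_token_ids]
    labels = [IGNORE] * num_query_tokens + list(text_token_ids)
    return positions, labels


def next_token_pairs(positions, labels):
    """Pair each position with the token it must predict (the next one).

    Pairs whose target is IGNORE are dropped, so only text tokens are
    ever used as targets.
    """
    pairs = []
    for i in range(len(positions) - 1):
        target = labels[i + 1]
        if target != IGNORE:
            pairs.append((positions[i], target))
    return pairs


positions, labels = build_lm_inputs(num_query_tokens=3, text_token_ids=[11, 12, 13])
for source, target in next_token_pairs(positions, labels):
    print(source, "->", target)
```

In this toy run the last query slot is the position that predicts the first text token, and each text token then predicts the one after it, which is the "given the query embeddings and the previous text" conditioning described above.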

This enables the model to be applied to tasks such as:

- Image captioning

- Visual question answering (VQA)

- Chat-like conversations, by providing the image and the previous conversation as a prompt to the model
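The three tasks differ mainly in the text prompt that accompanies the image. The helper below is a minimal sketch of one way to build that prompt. The function name `build_prompt` is hypothetical, and the "Question: ... Answer:" template is an assumption based on a format commonly used with OPT-based BLIP-2 checkpoints; this model card does not prescribe a template.

```python
def build_prompt(question=None, history=()):
    """Build the text prompt for captioning, VQA, or a chat-like exchange.

    question: the current question, or None for plain captioning.
    history:  earlier (question, answer) pairs for chat-like use.
    """
    if question is None:
        # Captioning: no text prompt, the image alone conditions generation.
        return None
    # Replay earlier turns so the model sees the previous conversation.
    turns = [f"Question: {q} Answer: {a}." for q, a in history]
    # Leave the final answer open for the model to complete.
    turns.append(f"Question: {question} Answer:")
    return " ".join(turns)


print(build_prompt())
print(build_prompt("how many cats are there?"))
print(build_prompt("what is it known for?", [("which city is this?", "singapore")]))
```

Passing the whole history in one string keeps the model stateless: each call receives the image plus the full conversation so far.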

📈 Direct Use and Downstream Use

You can use the raw model for conditional text generation given an image and optional text. See the model hub for fine-tuned versions on a task that interests you.

⚠️ Bias, Risks, Limitations, and Ethical Considerations

BLIP2-OPT uses off-the-shelf OPT as the language model. It inherits the same risks and limitations as stated in Meta's model card:

"Like other large language models for which the diversity (or lack thereof) of training data induces downstream impact on the quality of our model, OPT-175B has limitations in terms of bias and safety. OPT-175B can also have quality issues in terms of generation diversity and hallucination. In general, OPT-175B is not immune from the plethora of issues that plague modern large language models."

BLIP2 is fine-tuned on image-text datasets (e.g., [LAION](https://laion.ai/blog/laion-400-open-dataset/)) collected from the internet. As a result, the model itself may generate inappropriate content or replicate inherent biases in the underlying data.

BLIP2 has not been tested in real-world applications. It should not be directly deployed in any applications. Researchers should first carefully assess the safety and fairness of the model in the specific context of deployment.

🤔 Ethical Considerations

This release is for research purposes only to support an academic paper. Our models, datasets, and code are not specifically designed or evaluated for all downstream purposes. We strongly recommend that users evaluate and address potential concerns related to accuracy, safety, and fairness before deploying this model. We encourage users to consider the common limitations of AI, comply with applicable laws, and adopt best practices when choosing use cases, especially for high-risk scenarios where errors or misuse could significantly impact people’s lives, rights, or safety. For further guidance on use cases, refer to our AUP and AI AUP.

💻 Usage Examples

For code examples, refer to the [documentation](https://huggingface.co/docs/transformers/main/en/model_doc/blip-2#transformers.Blip2ForConditionalGeneration.forward.example).
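As a rough starting point, the sketch below shows how loading and generation might look with the `transformers` classes `Blip2Processor` and `Blip2ForConditionalGeneration`. The checkpoint name `Salesforce/blip2-opt-2.7b-coco` is an assumption inferred from this card's title, and the helper names `load_blip2` and `generate_text` are hypothetical. It runs on CPU in full precision for simplicity; check the linked documentation for device and precision options.

```python
def load_blip2(model_id="Salesforce/blip2-opt-2.7b-coco"):
    """Load the processor and model. The default model_id is an assumption."""
    # Imported lazily so the helpers can be defined without transformers installed.
    from transformers import Blip2ForConditionalGeneration, Blip2Processor

    processor = Blip2Processor.from_pretrained(model_id)
    model = Blip2ForConditionalGeneration.from_pretrained(model_id)
    return model, processor


def generate_text(model, processor, image, prompt=None, max_new_tokens=30):
    """Generate text conditioned on an image and an optional text prompt."""
    # The processor turns the image into pixel values and tokenizes the prompt.
    inputs = processor(images=image, text=prompt, return_tensors="pt")
    generated_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
    texts = processor.batch_decode(generated_ids, skip_special_tokens=True)
    return texts[0].strip()


# Example usage (the first call downloads the model weights):
#   from PIL import Image
#   model, processor = load_blip2()
#   image = Image.open("photo.jpg").convert("RGB")  # hypothetical local file
#   print(generate_text(model, processor, image))   # image captioning
#   print(generate_text(model, processor, image, "Question: what is shown? Answer:"))
```

Keeping loading separate from generation lets one loaded model serve many images and prompts, and makes `generate_text` easy to exercise with stand-in objects.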

📄 License

This project is licensed under the MIT license.